Strengthening Public Sector AI Frameworks in the Wake of APRA’s Warning on Governance Gaps

The Australian Prudential Regulation Authority (APRA) recently issued a stern directive to the leadership of the nation’s largest banking and insurance institutions, warning that the rapid adoption of artificial intelligence is outstripping the development of essential governance frameworks. While the letter was specifically addressed to the financial services sector, its implications are reverberating through the halls of government agencies and public sector departments. The core message from the regulator was unambiguous: while innovation is moving at an unprecedented pace, the oversight mechanisms intended to manage risk are lagging dangerously behind, creating a "governance gap" that could lead to systemic failures.

For government agencies, this warning serves as a critical bellwether. While a failure in a private financial institution might result in significant fines, regulatory sanctions, or reputational damage, a failure in the public sector carries even weightier consequences. In the context of government, poorly governed AI rollouts threaten to compromise the privacy of millions of citizens, erode public trust in democratic institutions, and disrupt the delivery of essential human services. As government departments increasingly look to automate decision-making and service delivery, the vulnerabilities identified by APRA—including a lack of technical literacy among senior leaders, outdated security protocols, and opaque supply chains—represent a clear and present danger to the public interest.

The Chronology of AI Regulation and the APRA Intervention

The current sense of urgency stems from a rapid acceleration in AI deployment that began in late 2022. The timeline of these developments highlights how quickly the technology has moved from a niche interest to a central pillar of organizational strategy.

In November 2022, the public release of generative AI tools like ChatGPT sparked a global rush toward large language model (LLM) integration. By early 2023, Australian government agencies and private enterprises had begun exploring use cases ranging from automated customer service to internal data synthesis. By mid-2023, the Australian Government’s Department of Industry, Science and Resources had released a discussion paper titled "Safe and Responsible AI in Australia," acknowledging that existing regulatory frameworks might be insufficient to manage the unique risks of generative models.

Throughout late 2023 and early 2024, APRA conducted a series of deep-dive reviews into how the financial sector was managing emerging technologies. These reviews culminated in the May 2024 letter to executives, which criticized the "fragmented assurance" models currently in place. This intervention marks a shift from a permissive "wait-and-see" approach to a more assertive regulatory stance, signaling that the honeymoon period for unregulated AI experimentation has come to an end.

The Governance Gap: Identifying Systemic Vulnerabilities

The vulnerabilities cited by APRA are not unique to the banking sector; they are systemic issues that plague many large-scale IT environments. One of the most significant risks identified is the rise of "Shadow AI." Much like the "Shadow IT" of the previous decade, where employees used unauthorized software to bypass rigid corporate systems, Shadow AI involves developers or departments deploying AI tools without the knowledge or approval of centralized IT and risk departments.

In a government context, Shadow AI is particularly hazardous. If a department uses an unvetted AI tool to process sensitive citizen data or to assist in policy drafting, it may be inadvertently feeding private information into third-party models or producing biased outcomes that violate anti-discrimination laws. APRA’s critique highlighted that many organizations are still relying on "point-in-time" audits (static assessments conducted at the beginning of a project), which fail to account for the dynamic nature of AI. Unlike traditional software, AI models can "drift," meaning their performance and accuracy change over time as the data they encounter in production diverges from the data they were trained on.

Transitioning to Governance as Code

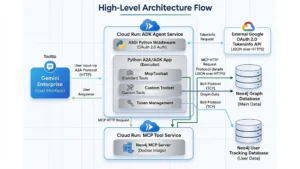

To address these vulnerabilities, experts argue that both the private and public sectors must move away from treating governance as a bureaucratic "paperwork exercise" and instead treat it as an engineering requirement. The concept of "Governance as Code" is emerging as the gold standard for responsible AI deployment.

In this model, compliance checks are not manual tasks performed by human auditors after a system is built; rather, they are hardwired into the Continuous Integration/Continuous Deployment (CI/CD) pipeline that builds and releases the system. By integrating automated gatekeepers, an organization can ensure that any AI tool being pushed to a live environment automatically undergoes a battery of tests. These tests can verify whether the model touches Personally Identifiable Information (PII), whether it has passed the latest bias and fairness checks, and whether its security protocols meet current standards. If the system detects a failure in any of these areas, the deployment is automatically blocked. This "governed by default" approach reduces the scope for human error in manual risk assessments and ensures that safety is built into the software’s DNA.
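As a rough illustration of what such an automated gatekeeper might look like, the sketch below runs the three checks named above against a release record and blocks the release if any of them fails. It is a minimal sketch, not a reference to any particular product: the `ModelRelease` fields, the check functions, and the 0.05 fairness threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass
class ModelRelease:
    """Hypothetical release metadata; every field name is an assumption."""
    name: str
    touches_pii: bool
    pii_approved: bool            # a privacy sign-off has been recorded
    fairness_gap: float           # largest outcome-rate gap between groups
    security_scan_passed: bool


# Each check returns (passed, reason shown when the check fails).
CheckResult = Tuple[bool, str]
Check = Callable[[ModelRelease], CheckResult]


def check_pii(release: ModelRelease) -> CheckResult:
    passed = (not release.touches_pii) or release.pii_approved
    return passed, "model touches PII without a recorded privacy approval"


def check_fairness(release: ModelRelease, max_gap: float = 0.05) -> CheckResult:
    # The 0.05 threshold is illustrative only, not a regulatory figure.
    passed = release.fairness_gap <= max_gap
    return passed, f"fairness gap {release.fairness_gap:.2f} exceeds {max_gap:.2f}"


def check_security(release: ModelRelease) -> CheckResult:
    return release.security_scan_passed, "security scan has not passed"


DEFAULT_CHECKS: Tuple[Check, ...] = (check_pii, check_fairness, check_security)


def evaluate_gate(
    release: ModelRelease, checks: Sequence[Check] = DEFAULT_CHECKS
) -> Tuple[bool, List[str]]:
    """Run every check; the release is allowed only if none of them fails."""
    failures: List[str] = []
    for check in checks:
        passed, reason = check(release)
        if not passed:
            failures.append(reason)
    return not failures, failures
```

In a real pipeline, a step like this would typically end with a non-zero exit code when `evaluate_gate` returns `False`, which is what causes the CI/CD system to stop the deployment.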

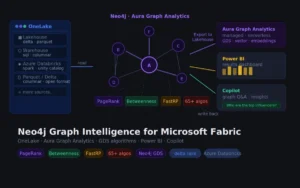

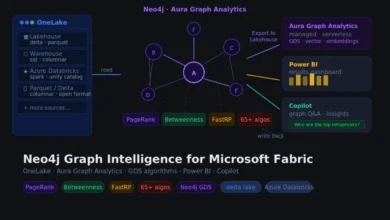

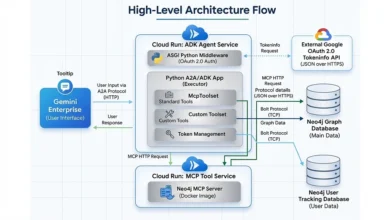

Mapping the AI Supply Chain with Graph Technology

A significant portion of APRA’s warning focused on the risks associated with third-party foundation models. Most organizations do not build their own AI from scratch; they rely on models provided by vendors like OpenAI, Google, or Anthropic. This creates a complex and often opaque supply chain where a single vulnerability in a vendor’s model can have a cascading effect across dozens of internal applications.

Traditional methods of tracking these dependencies, such as spreadsheets or relational databases, are increasingly viewed as inadequate for the task. This is where graph databases, such as Neo4j, are becoming essential for modern governance. Graph technology allows organizations to create a dynamic, interconnected map of their entire AI ecosystem. By visualizing the lineage of every tool, a government agency can instantly identify which public-facing services are reliant on a specific external model.

If a vendor announces a security flaw or a significant bias issue in a specific version of their language model, a graph-based system allows the agency to perform an immediate impact analysis. Instead of spending weeks auditing their systems to find where that model is used, they can see the direct line from the compromised model to the impacted service in seconds. This capability allows for the immediate triggering of "fallbacks"—switching to a secondary, safe model or a manual process—thereby maintaining service continuity and protecting citizen data.
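The impact analysis described here is, at its core, a graph traversal. The sketch below shows the idea in plain Python rather than in a graph database's own query language, so it makes no claims about any vendor's API. The component names and the `DEPENDENTS` map are invented for illustration; a production system would hold this lineage in the graph store itself.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical lineage: each key maps to the components that depend on it.
DEPENDENTS: Dict[str, List[str]] = {
    "vendor-llm-v2": ["summariser-api", "chat-assistant"],
    "summariser-api": ["grants-portal"],
    "chat-assistant": ["citizen-helpdesk", "grants-portal"],
    "vendor-llm-v1": ["legacy-search"],
}

# Hypothetical set of services that citizens interact with directly.
PUBLIC_SERVICES: Set[str] = {"grants-portal", "citizen-helpdesk", "legacy-search"}


def impacted_services(
    model: str,
    dependents: Dict[str, List[str]],
    public_services: Set[str],
) -> List[str]:
    """Breadth-first walk from a flagged model to every downstream service."""
    seen = {model}
    queue = deque([model])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    # Keep only the public-facing services, in a stable order for reporting.
    return sorted(seen & public_services)


print(impacted_services("vendor-llm-v2", DEPENDENTS, PUBLIC_SERVICES))
# ['citizen-helpdesk', 'grants-portal']
```

The list returned by the traversal is the set of services for which a fallback would need to be triggered; services that depend only on other models, such as `legacy-search` in this example, are left untouched.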

From Static Audits to Continuous Observability

The "fragmented assurance" mentioned by APRA refers to the lack of ongoing monitoring. Traditional IT systems are relatively static; once they are tested and deployed, their behavior remains predictable. AI models, however, are probabilistic: their outputs can shift as the data they receive changes. They learn, they adapt, and, occasionally, they degrade.

Continuous observability is the necessary antidote to this unpredictability. A robust public sector AI platform requires "AI firewalls" that actively monitor the inputs and outputs of models in real time. These firewalls are designed to detect "prompt injection" attacks, in which malicious actors attempt to trick the AI into revealing sensitive information or bypassing safety filters. Furthermore, monitoring systems must be calibrated to detect "model drift." If an algorithm that was unbiased at the start of the year begins to show skewed outputs in its processing of housing applications or social service eligibility, the system should alert operations teams immediately, rather than waiting for the next annual audit to discover the error.
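One simple way to picture a drift alert of this kind is to compare approval rates across groups in a baseline window against a recent window. The sketch below does exactly that. It is an assumption-laden illustration: the `Decision` format, the group labels, and the 0.10 tolerance are hypothetical, and the rate gap used here is only one of several fairness measures a real monitoring system might track.

```python
from typing import Dict, Iterable, List, Tuple

# A decision is (group label, approved?). The format is hypothetical.
Decision = Tuple[str, bool]


def approval_rates(decisions: Iterable[Decision]) -> Dict[str, float]:
    """Share of approved decisions for each group."""
    totals: Dict[str, int] = {}
    approved: Dict[str, int] = {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions: Iterable[Decision]) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


def drift_alerts(
    baseline: List[Decision],
    current: List[Decision],
    tolerance: float = 0.10,  # illustrative threshold, not a standard
) -> List[str]:
    """Return an alert if the gap between groups has widened past tolerance."""
    base_gap = parity_gap(baseline)
    cur_gap = parity_gap(current)
    alerts: List[str] = []
    if cur_gap - base_gap > tolerance:
        alerts.append(
            f"approval-rate gap widened from {base_gap:.2f} to {cur_gap:.2f}"
        )
    return alerts
```

Run on a schedule against recent decisions, a check like this would surface a widening gap within days rather than at the next annual audit. It complements, and does not replace, input screening for prompt injection.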

Enhancing Executive Literacy and Operational Visibility

Perhaps the most difficult hurdle identified by regulators is the gap in technical literacy at the executive level. Department secretaries and risk committees are ultimately responsible for the legal and ethical implications of AI, yet they often lack the technical background to interpret raw machine learning logs or complex data architectures.

To bridge this gap, AI platforms must be capable of translating technical telemetry into plain-English operational risk. Responsible AI governance requires dashboards that provide leaders with clear, actionable insights. Rather than presenting jargon-heavy reports, these dashboards should answer fundamental questions: What percentage of high-risk systems have passed a fairness audit in the last 30 days? Which third-party vendors represent the greatest concentration of risk? Is there a backup plan in place for every critical AI-dependent service?
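The three dashboard questions above reduce to straightforward aggregations over an inventory of AI systems. The sketch below assumes such an inventory exists and shows how the answers might be computed. The `AISystem` record and its field names are hypothetical, and vendor concentration is approximated here simply by counting systems per vendor.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional


@dataclass
class AISystem:
    """Hypothetical inventory record; field names are assumptions."""
    name: str
    high_risk: bool
    critical: bool
    vendor: str
    last_fairness_pass: Optional[date]  # None if never audited
    has_fallback: bool


def executive_summary(
    systems: List[AISystem], today: date, window_days: int = 30
) -> Dict[str, object]:
    cutoff = today - timedelta(days=window_days)

    # Q1: share of high-risk systems with a recent fairness pass.
    high_risk = [s for s in systems if s.high_risk]
    recent = [
        s for s in high_risk
        if s.last_fairness_pass is not None and s.last_fairness_pass >= cutoff
    ]
    pct_audited = 100.0 * len(recent) / len(high_risk) if high_risk else None

    # Q2: vendor with the most dependent systems (a crude concentration proxy).
    vendor_counts = Counter(s.vendor for s in systems)
    top_vendor = vendor_counts.most_common(1)[0] if vendor_counts else None

    # Q3: critical services that have no backup plan recorded.
    no_fallback = [s.name for s in systems if s.critical and not s.has_fallback]

    return {
        "pct_high_risk_audited": pct_audited,
        "top_vendor": top_vendor,
        "critical_without_fallback": no_fallback,
    }
```

The point of the design is that each returned value maps to one plain-English sentence on the dashboard, so a risk committee never needs to read the underlying telemetry.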

When leaders have this level of visibility, the perception of governance changes. It is no longer seen as a bottleneck that slows down innovation, but as a framework that enables it. When guardrails are automated and visibility is high, developers can iterate faster because they have the confidence that the system will prevent them from accidentally violating laws or ethical standards.

Broader Implications and the Path Forward

The warning from APRA should be viewed as a catalyst for a broader rethink of digital infrastructure in the public sector. As the Australian government moves toward its goal of becoming a leading digital economy by 2030, the safe deployment of AI will be a cornerstone of that ambition.

The implications of failing to act on these governance warnings are significant. Beyond the immediate risks of data breaches or biased decision-making, there is the long-term risk of a "tech backlash." If high-profile AI failures occur in the public sector, they could lead to a loss of public confidence that stalls the adoption of beneficial technologies for years to come. Conversely, by adopting the rigorous standards suggested by APRA (automated governance, supply chain transparency, and continuous monitoring), government agencies can set a global benchmark for the responsible use of AI.

In conclusion, the path to safe AI in the public sector requires a fundamental shift in perspective. Governance must be elevated from a secondary administrative task to a primary engineering and leadership priority. By treating the challenges of AI governance as technical problems that can be solved with modern tools and methodologies, the public sector can ensure that it delivers on the promise of AI while steadfastly protecting the citizens it serves. The APRA letter may have been addressed to the banks, but the lesson it contains is universal: in the age of AI, speed without control is a recipe for disaster.