We Gave Agents IDE-Native Search Tools. They Got Faster and Cheaper.

JetBrains has reported that AI coding agents become faster and cheaper to run when they are given IDE-native search capabilities. According to a recent update from the company, equipping agents with the context-aware search tools that human developers use inside an Integrated Development Environment (IDE) reduces task completion time and operational cost while maintaining output quality. The work addresses a known limitation of agents that rely on general-purpose command-line utilities for code search.

The Evolution of AI in Software Development and the Search Challenge

The proliferation of AI, particularly large language models (LLMs), has rapidly transformed various industries, and software development is no exception. Coding agents, designed to assist or even automate portions of the development process, represent a frontier in this evolution. These agents, when tasked with understanding, navigating, and modifying complex codebases, frequently need to search for specific functions, definitions, file paths, or patterns. Historically, these AI agents have defaulted to traditional shell-based tools such as grep and find. While ubiquitous and reliable for basic text pattern matching, these tools possess inherent limitations when applied to the nuanced world of software code.

The fundamental drawback of grep and find is their "blindness" to the semantic and structural context of a project. They operate primarily on raw text, unaware of programming language syntax, symbol boundaries, class hierarchies, or module dependencies. For an AI agent attempting to understand a codebase, this lack of context means sifting through voluminous, often irrelevant, output. The result is more follow-up queries, higher token consumption (tokens being the unit in which LLM usage is metered and billed), and consequently higher costs and longer task completion times. An agent might spend tokens parsing hundreds of lines of grep output to locate a function definition that an IDE could resolve directly from its index, with scope and relationships already known. JetBrains identified this as a bottleneck and investigated a more code-aware search approach for AI agents.
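To make the difference concrete, here is a minimal sketch in Python. It is not JetBrains’ implementation: it uses the standard-library ast module as a stand-in for an IDE index, and the sample source and the function names text_search and symbol_lookup are assumptions made purely for illustration.

```python
import ast

# Hypothetical sample file: a method and a top-level function share the name "parse".
SOURCE = '''
class Parser:
    def parse(self, text):
        return text.split()

def parse(path):
    # parse the file at path
    parser = Parser()
    return parser.parse(open(path).read())
'''


def text_search(source: str, needle: str) -> list[int]:
    """grep-style search: line numbers of every line whose raw text contains the needle."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if needle in line
    ]


def symbol_lookup(source: str, name: str) -> list[tuple[str, int]]:
    """Definition search: (qualified name, line) for each function or class called `name`."""
    hits: list[tuple[str, int]] = []

    def visit(node: ast.AST, scope: list[str]) -> None:
        for child in ast.iter_child_nodes(node):
            if isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
                if child.name == name:
                    hits.append((".".join(scope + [child.name]), child.lineno))
                # Descend with the definition added to the enclosing scope.
                visit(child, scope + [child.name])
            else:
                visit(child, scope)

    visit(ast.parse(source), [])
    return hits


if __name__ == "__main__":
    print("text search:  ", text_search(SOURCE, "parse"))
    print("symbol lookup:", symbol_lookup(SOURCE, "parse"))
```

On this sample the text search returns five lines, including a comment, a variable name, and a call site, while the symbol lookup returns only the two definitions, each labeled with its enclosing scope.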

The JetBrains Innovation: IDE-Native Search for Agents

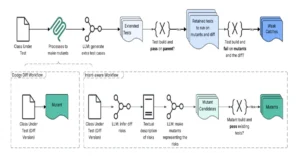

The core of JetBrains’ approach is a simple idea: let AI agents use the same search capabilities that the company’s IDEs offer to human developers. To achieve this, JetBrains engineered a "prebundled skill" that pairs a search prompt with a unified Model Context Protocol (MCP) tool. This single tool covers four search modes: file search, text search, regular expression search, and symbol lookup. A universal router dispatches each call to the appropriate backend, so the agent’s query is handled by the method that matches it.

Unlike rudimentary text searches, IDE-native search tools possess a deep understanding of project structure, language semantics, and symbol definitions. They are designed to parse and index code, creating a rich internal model of the codebase. When an AI agent utilizes this IDE-native search, it gains access to this sophisticated understanding. For instance, a "symbol lookup" isn’t just finding a string; it’s identifying a variable, function, or class definition within its correct scope, distinguishing between identically named elements in different contexts. This semantic awareness dramatically reduces the "noise" an agent must process, allowing it to retrieve precise information directly, without the need for extensive post-processing or iterative refinement of search queries. The integration effectively transforms the agent’s interaction with the codebase from a brute-force text scan into an intelligent, context-aware query, mirroring the efficiency of an experienced human developer using an IDE’s powerful features.
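The single-tool, four-mode design can be pictured as one entry point that routes on a mode argument. The following is a hypothetical sketch only: the search function, the Hit type, the mode names, and the in-memory PROJECT dictionary are all invented for illustration, and the backends are crude stand-ins for queries that a real tool would send to the IDE’s index.

```python
import fnmatch
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Hit:
    path: str
    line: int  # 0 for file-level hits
    text: str


# Hypothetical in-memory project standing in for the IDE's index.
PROJECT = {
    "src/Order.kt": "class Order(val id: Int)\nfun total(order: Order): Int = order.id",
    "src/OrderTest.kt": "fun testTotal() { check(total(Order(1)) == 1) }",
}


def _search_lines(predicate: Callable[[str], bool]) -> list[Hit]:
    """Return a hit for every project line accepted by the predicate."""
    return [
        Hit(path, number, line)
        for path in sorted(PROJECT)
        for number, line in enumerate(PROJECT[path].splitlines(), start=1)
        if predicate(line)
    ]


def search_files(query: str) -> list[Hit]:
    """File search: glob match against project paths."""
    return [Hit(p, 0, p) for p in sorted(PROJECT) if fnmatch.fnmatchcase(p, query)]


def search_text(query: str) -> list[Hit]:
    """Text search: plain substring match."""
    return _search_lines(lambda line: query in line)


def search_regex(query: str) -> list[Hit]:
    """Regular expression search."""
    pattern = re.compile(query)
    return _search_lines(lambda line: pattern.search(line) is not None)


def search_symbol(query: str) -> list[Hit]:
    """Symbol lookup, approximated here by matching declarations only."""
    declaration = re.compile(r"\b(?:class|fun|val|var)\s+" + re.escape(query) + r"\b")
    return _search_lines(lambda line: declaration.search(line) is not None)


BACKENDS: dict[str, Callable[[str], list[Hit]]] = {
    "file": search_files,
    "text": search_text,
    "regex": search_regex,
    "symbol": search_symbol,
}


def search(mode: str, query: str) -> list[Hit]:
    """Single entry point: route the call to the backend for the requested mode."""
    try:
        backend = BACKENDS[mode]
    except KeyError:
        raise ValueError(f"unknown search mode: {mode!r}") from None
    return backend(query)
```

In this toy project, search("text", "Order") returns three lines, whereas search("symbol", "Order") returns only the class declaration. That is the kind of noise reduction described above, although a real index would resolve scopes rather than match declaration keywords.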

Rigorous Methodology: Quantifying the Impact

To validate their hypothesis and precisely measure the impact of this new tooling, JetBrains established a robust evaluation pipeline. This pipeline involved spinning up an MCP server alongside a standard IDE, granting the agent access to the newly configured tools and skills. The methodology hinged on a paired delta analysis, comparing identical coding tasks performed both with and without the prebundled IDE-native search tooling. This rigorous approach ensured that any observed changes could be directly attributed to the new search capabilities.

Four critical metrics were meticulously tracked:

- Quality: The primary objective metric, assessing whether all tests associated with the coding task passed, ensuring that efficiency gains did not compromise correctness.

- Latency: Measuring the median and P95 (95th percentile) task completion time, directly reflecting the agent’s speed and responsiveness.

- Cost: Converting token consumption—a direct proxy for LLM operational expenses—into monetary value, providing a tangible measure of economic efficiency.

- Budget Discipline: Tracking the frequency with which a single task exceeded a predefined budget cap (USD 0.50), highlighting the consistency of cost management.
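As a rough illustration of how these four metrics could be derived from per-task records, consider the sketch below. The TaskRun fields, the nearest-rank percentile method, and the per-million-token prices passed to summarize are assumptions; only the USD 0.50 cap comes from the article.

```python
import math
import statistics
from dataclasses import dataclass

BUDGET_CAP_USD = 0.50  # per-task cap stated in the article


@dataclass(frozen=True)
class TaskRun:
    tests_passed: bool
    seconds: float
    input_tokens: int
    output_tokens: int


def cost_usd(run: TaskRun, usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Convert token counts to dollars using assumed per-million-token prices."""
    return (
        run.input_tokens * usd_per_m_input + run.output_tokens * usd_per_m_output
    ) / 1_000_000


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def summarize(
    runs: list[TaskRun], usd_per_m_input: float, usd_per_m_output: float
) -> dict[str, float]:
    """Aggregate quality, latency, cost, and budget discipline for one configuration."""
    if not runs:
        raise ValueError("need at least one run")
    costs = [cost_usd(r, usd_per_m_input, usd_per_m_output) for r in runs]
    times = [r.seconds for r in runs]
    return {
        "pass_rate": sum(r.tests_passed for r in runs) / len(runs),
        "median_seconds": statistics.median(times),
        "p95_seconds": p95(times),
        "total_cost_usd": sum(costs),
        "over_budget_rate": sum(c > BUDGET_CAP_USD for c in costs) / len(costs),
    }
```

Running summarize once for the baseline and once for the tooling-enabled configuration, on the same tasks, gives the two sides of each comparison.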

Crucially, JetBrains committed to reporting improvement deltas only when they surpassed a strict statistical significance threshold: p < 0.05, employing a paired test with 95% confidence intervals. Metrics that did not exhibit a statistically significant change were either explicitly noted or omitted from the results, preventing misleading conclusions. The team also explored four distinct configuration variants of the tooling, iteratively refining and selecting the one that offered the optimal balance between latency reduction and cost savings. This chosen configuration was then re-tested across different models and programming languages to confirm the generalizability and robustness of the findings. This systematic and statistically sound approach underpins the credibility of JetBrains’ claims.
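The article does not say which paired test was used. As one possible instance of the idea, the sketch below computes per-task deltas, an exact sign-flip permutation p-value, and a percentile bootstrap 95% confidence interval for the mean delta. The choice of test, the function names, and the sample timings are assumptions for illustration and are not JetBrains’ data or method.

```python
import itertools
import random
import statistics

# Hypothetical per-task latencies in seconds for the same eight tasks.
BASELINE_SECONDS = [62, 75, 58, 90, 66, 71, 84, 69]
TREATMENT_SECONDS = [55, 70, 57, 78, 60, 66, 80, 61]


def paired_deltas(baseline: list[float], treatment: list[float]) -> list[float]:
    """Per-task difference (treatment minus baseline); negative means improvement."""
    if len(baseline) != len(treatment):
        raise ValueError("paired samples must have equal length")
    return [t - b for b, t in zip(baseline, treatment)]


def sign_flip_p_value(deltas: list[float]) -> float:
    """Exact two-sided sign-flip permutation test on the sum of deltas.

    Under the null hypothesis each delta is equally likely to carry either sign.
    All 2**n sign assignments are enumerated, so keep n small.
    """
    observed = abs(sum(deltas))
    extreme = 0
    total = 0
    for signs in itertools.product((1, -1), repeat=len(deltas)):
        total += 1
        flipped = abs(sum(s * d for s, d in zip(signs, deltas)))
        if flipped >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
    return extreme / total


def bootstrap_ci(
    deltas: list[float], level: float = 0.95, resamples: int = 5000, seed: int = 0
) -> tuple[float, float]:
    """Percentile bootstrap confidence interval for the mean delta."""
    rng = random.Random(seed)
    means = sorted(
        statistics.fmean(rng.choices(deltas, k=len(deltas))) for _ in range(resamples)
    )
    low_index = int((1 - level) / 2 * resamples)
    high_index = int((1 + level) / 2 * resamples) - 1
    return means[low_index], means[high_index]


if __name__ == "__main__":
    deltas = paired_deltas(BASELINE_SECONDS, TREATMENT_SECONDS)
    print("mean delta:", statistics.fmean(deltas))
    print("p-value:", sign_flip_p_value(deltas))
    print("95% CI:", bootstrap_ci(deltas))
```

A delta would be reported under the rule described above only if the p-value falls below 0.05. Pairing matters because each task serves as its own control, which removes task-to-task difficulty from the comparison.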

Key Findings: A Paradigm Shift in Agent Efficiency

The evaluation showed that IDE-native search makes coding agents measurably more efficient. The selected configuration, a prebundled search skill complemented by a unified IDE-native tool and a universal router, yielded significant improvements in latency and cost without a measurable change in quality.

The most striking outcomes were:

- Reduced Latency: Agents equipped with the IDE-native search tools completed tasks faster. Percentage reductions were not disclosed for every scenario, but the trend was consistent across tests. In a development environment, faster agent responses mean less waiting and quicker iteration cycles, which helps human developers working alongside AI stay in flow.

- Lower Operational Costs: The intelligent search capabilities led to a measurable decrease in token consumption. By providing precise, context-rich information, agents required fewer conversational turns and less computational effort to sift through irrelevant data, thereby consuming fewer tokens. This reduction in token usage directly translates into lower operational costs, making AI-assisted development more economically viable, particularly for large-scale projects or organizations running numerous AI agent tasks.

- Maintained Quality: Crucially, these efficiency gains did not come at the expense of output quality. The evaluation found no statistically significant change in the quality of the code produced or in the success rate of test cases. This finding is paramount, as any acceleration or cost reduction would be moot if it degraded the reliability or correctness of the generated code. It indicates that the savings came from more efficient search rather than from cutting corners on the task itself.

The iterative configuration exploration phase proved vital in optimizing the solution. By systematically testing four different tool configurations, JetBrains was able to pinpoint the ideal balance, visualizing this as targeting the "lower-left corner" of a plot representing latency and total cost. This methodical approach ensures that the final product offers the most impactful benefits to users.
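One way to formalize "aiming for the lower-left corner" is to discard any variant that another variant beats on both axes, then pick the remaining one closest to the corner after scaling. The sketch below does this. The selection rule, the Variant type, and all numbers are hypothetical and do not reproduce JetBrains’ procedure or results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    median_seconds: float
    total_cost_usd: float


def dominates(a: Variant, b: Variant) -> bool:
    """True if a is at least as good as b on both axes and strictly better on one."""
    no_worse = (
        a.median_seconds <= b.median_seconds and a.total_cost_usd <= b.total_cost_usd
    )
    better = a.median_seconds < b.median_seconds or a.total_cost_usd < b.total_cost_usd
    return no_worse and better


def pareto_front(variants: list[Variant]) -> list[Variant]:
    """Variants that no other variant dominates."""
    return [v for v in variants if not any(dominates(o, v) for o in variants)]


def closest_to_lower_left(variants: list[Variant]) -> Variant:
    """Pick the Pareto-optimal variant nearest the lower-left corner after min-max scaling."""
    times = [v.median_seconds for v in variants]
    costs = [v.total_cost_usd for v in variants]

    def scaled(value: float, values: list[float]) -> float:
        low, high = min(values), max(values)
        return 0.0 if high == low else (value - low) / (high - low)

    return min(
        pareto_front(variants),
        key=lambda v: scaled(v.median_seconds, times) ** 2
        + scaled(v.total_cost_usd, costs) ** 2,
    )


# Hypothetical measurements for four configuration variants.
VARIANTS = [
    Variant("variant_a", 100.0, 10.0),
    Variant("variant_b", 80.0, 9.0),
    Variant("variant_c", 85.0, 8.0),
    Variant("variant_d", 95.0, 9.5),
]
```

With these made-up numbers, variant_a and variant_d are dominated, and variant_c is selected over variant_b because it sits closer to the corner once both axes are scaled to the same range. A different weighting of latency against cost could reverse that choice.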

Cross-Model and Cross-Language Validation: Demonstrating Robustness

To test whether the result generalizes, JetBrains extended the experiments to different LLMs and programming languages. Re-running the experiment with GPT 5.4 on both Java and Kotlin codebases yielded consistent results, confirming the pattern of reduced latency and cost. Notably, Kotlin projects saw the largest cost improvement, with total operational costs falling by 13.48%. This data point illustrates the economic benefit of the IDE-native search integration.

An interesting divergence emerged in how often different agents and models actually used the new tool. Codex, OpenAI’s coding agent, channeled 91% of its search calls through the new IDE-native tool. This high adoption rate suggests that Codex, which might have less sophisticated built-in code search habits, readily switched to the external tool when it was available. Conversely, Claude models behaved differently: Claude Opus used the new tool for approximately half of its searches, while Claude Haiku used it for only 28%, preferring its existing grep and find methods.

This differential adoption offers insight into how models differ. One plausible explanation is that the Claude models already search code effectively with their existing grep- and find-based approach, so they leaned on it and gained less from the new tool. The takeaway: prebundled tooling fills capability gaps. Where a model’s existing search behavior is less robust, the IDE-native tool provides a substantial upgrade. Where a model already searches well, the tool still adds value, but the incremental improvement is smaller. This understanding informs future development and optimization strategies for integrating AI tools.

Broader Implications and Industry Impact

JetBrains’ innovation extends beyond mere technical enhancement; it carries significant implications for the broader landscape of AI-powered software development. This development underscores a crucial shift from simply augmenting AI agents with generic command-line tools to deeply embedding them within the semantic understanding of a professional development environment.

- Elevated Developer Productivity: By making coding agents faster and more accurate in their code navigation, developers can offload more complex search and analysis tasks, freeing up cognitive load for higher-level problem-solving and creative design. This directly contributes to increased developer productivity and satisfaction.

- Economic Efficiency for Enterprises: The demonstrated cost savings, particularly the 13.48% reduction for Kotlin projects, present a compelling economic argument for adopting such integrated AI tools. For enterprises deploying AI agents across large teams or extensive codebases, these savings can accumulate rapidly, making AI-assisted development more financially sustainable and scalable.

- Setting a New Industry Benchmark: JetBrains, a long-standing leader in developer tools, is setting a new benchmark for how AI agents should interact with code. This move is likely to spur other tool vendors and AI platform providers to pursue similar deep integrations, pushing the entire industry towards more intelligent and efficient AI-developer collaboration. Industry observers suggest this enhancement could significantly impact the adoption trajectory of AI agents, making them more reliable and cost-effective partners in the software development lifecycle.

- Advancing AI’s Understanding of Code: This development highlights the growing trend of integrating AI capabilities directly into core development environments. It pushes AI agents beyond superficial text manipulation, enabling them to engage with code on a more semantically aware level, akin to human understanding. This is a crucial step towards truly intelligent coding assistants capable of complex reasoning and code manipulation.

The Road Ahead: Future Enhancements and Rollout

With a validated evaluation pipeline and compelling initial results, JetBrains is now accelerating its efforts to expand and deploy this technology. The immediate next steps include:

- Testing on Smaller Models: The team’s current hunch is that smaller, less capable LLMs will benefit even more from the IDE-native search tools, as they inherently possess fewer built-in search capabilities to fall back on. This expansion aims to democratize access to efficient AI-assisted coding, making it viable for a broader range of models.

- Expanding Language Support: While the current results are strongest for Java and Kotlin, JetBrains plans to expand the evaluation to other popular languages and platforms, including Python, .NET, and TypeScript, with larger sample sizes to ensure comprehensive coverage and optimization.

- Integration with IntelliJ IDEA MCP Server: The winning configuration is currently being prepared for integration into the IntelliJ IDEA MCP Server. This will enable agent sessions running within IntelliJ IDEA to seamlessly leverage IDE-native tooling when the server is activated. This integration is a crucial step towards making the feature broadly available within JetBrains’ flagship IDE.

- Default Rollout in AI Assistant Plugin: The ultimate goal is to enable this feature by default in upcoming updates to the JetBrains AI Assistant plugin. This strategic move ensures that all users of the AI Assistant will automatically benefit from faster, cheaper, and more intelligent coding agents without requiring manual configuration.

JetBrains continues its commitment to supercharging developer tools with AI-powered features, as highlighted on their AI blog. This latest innovation is a testament to their vision of creating intelligent environments that not only assist but genuinely empower developers. For those eager to experience these advancements prior to the default rollout, specific instructions for early access will be provided by JetBrains, allowing proactive developers to integrate these cutting-edge capabilities into their workflows sooner. This methodical approach, from research and rigorous testing to phased rollout, underscores JetBrains’ dedication to delivering practical, impactful AI solutions for the global developer community.