Retrieval Worked Exactly as Designed. The Answer Was Still Wrong.

A critical vulnerability in Retrieval-Augmented Generation (RAG) systems is silently undermining the reliability of AI-powered information systems, leading to confidently incorrect answers despite robust retrieval mechanisms. This "hidden blind spot" occurs not in the retrieval phase, which is often meticulously optimized, but in the less-evaluated context assembly stage, where Large Language Models (LLMs) are fed conflicting information without a mechanism to detect or resolve it. This flaw can result in serious consequences, from misreported financial figures to outdated policy advice and incorrect technical specifications, all delivered with an unsettling degree of AI confidence.

The Hidden Flaw in RAG Pipelines

The issue came sharply into focus during a routine query against a meticulously constructed knowledge base. The system, featuring advanced chunking, hybrid search, and reranking, returned top-k documents with high cosine similarities, signaling a perfectly functioning retrieval pipeline. The subsequent QA model, powered by these documents, generated a confident answer. Yet, the answer was factually incorrect. The problem was not a hallucination, nor a retrieval failure; the correct documents were indeed retrieved. Both of them. One contained a preliminary earnings figure, and the other, an audited revision that superseded it. The model, lacking the inherent ability to referee this dispute, simply chose one and reported it, unaware of the underlying contradiction.

This failure mode is particularly insidious because it eludes conventional evaluation metrics. Retrieval metrics register success because the relevant documents are found. Hallucination detectors remain silent because the information is present in the provided context, even if it’s contradictory. The flaw resides squarely in the gap between context assembly and generation—a crucial step in the RAG pipeline that remains largely unevaluated in many deployments.

To isolate and demonstrate this vulnerability, a reproducible experiment was developed. This lightweight setup, requiring only 220 MB of memory and running on a CPU without any API keys or cloud infrastructure, clearly illustrates the problem. The core question posed by the experiment is not whether retrieval works (it demonstrably does), but rather, what an LLM does when presented with a contradictory brief and asked to respond with certainty. The answer, alarmingly, is that it silently and confidently "picks a side" without indicating that a choice was even made.

Case Studies: Real-World Scenarios of Conflicting Data

The experiment employed three distinct scenarios, each drawn from common production environments where knowledge bases naturally accumulate conflicting information over time:

- Scenario A: The Unnoticed Financial Restatement. A company’s Q4 earnings release for fiscal year 2023 initially reported annual revenue of $4.2 million. Three months later, external auditors restated this figure to $6.8 million. Both the initial earnings release (dated January 15, 2024) and the revised annual report (dated April 3, 2024) were present in the knowledge base and retrieved for the query "What was Acme Corp’s annual revenue for fiscal year 2023?" The naive RAG system, receiving both documents (similarity scores 0.863 and 0.820 respectively), confidently answered "$4.2M." The preliminary, outdated figure was chosen, offering no indication that a more authoritative, recent source existed.

- Scenario B: The Superseded HR Policy. An HR policy from June 2023 mandated three days per week in-office. A subsequent revision in November 2023 explicitly reversed this, permitting fully remote work. When an employee inquired about the current remote work policy, both the June (similarity 0.806) and November (similarity 0.776) policies were retrieved. The RAG system, again without conflict resolution, presented the older, stricter June policy, stating "all employees are required to be present in the office a minimum of 3 days per week," with 78.3% confidence.

- Scenario C: The Undeprecated API Documentation. Version 1.2 of an API reference specified a rate limit of 100 requests per minute. Following an infrastructure upgrade, Version 2.0, published later, increased this to 500 requests per minute. Both documents were retrieved (similarity scores 0.788 and 0.732). The RAG system answered "100 requests per minute" with 81.0% confidence. A developer relying on this information would unknowingly throttle their application to one-fifth of its actual capacity.

In all three instances, the naive RAG system retrieved the relevant documents but produced incorrect, outdated, or suboptimal answers, consistently displaying high confidence scores (ranging from 78% to 81%). This underscores the core problem: the confidence score of the LLM itself is not a reliable indicator of factual accuracy when conflicting information is present in the context.

Why LLMs Fail to Discern Truth from Contradiction

The model’s behavior, while problematic for accuracy, is not a design flaw in the LLM itself. Extractive QA models, such as deepset/minilm-uncased-squad2 used in the experiment, are trained to select the most probable answer span from a given context based on token-level scores. They are not inherently equipped with mechanisms to weigh source authority, recency, or to identify and resolve contradictions. When presented with two conflicting pieces of information, the model simply selects the span that achieves the highest combined start-logit and end-logit score.

Several factors contribute to this selection bias:

- Position Bias: Spans appearing earlier in the concatenated context string often receive marginally higher attention scores due to the encoder architecture. In the scenarios presented, the document that ranked marginally higher in retrieval—and thus appeared first—often contained the outdated information.

- Language Strength: Direct, declarative statements (e.g., "revenue of $4.2M") tend to outscore more nuanced or hedged phrasing (e.g., "following restatement… is $6.8M"). The clarity and directness of the language, rather than its truthfulness or recency, can influence the model’s selection.

- Lexical Alignment: Spans whose vocabulary more closely overlaps with the query tokens may score higher, irrespective of whether the underlying claim is current or authoritative.

Crucially, the model’s decision-making process completely ignores metadata such as source date, document authority, audit status, or whether one claim supersedes another. These vital signals, which a human would instinctively use to resolve conflicts, are invisible to the extractive model. Research by Joren et al. (2025) at ICLR 2025 further corroborates this, demonstrating that frontier models like Gemini 1.5 Pro, GPT-4o, and Claude 3.5 frequently produce incorrect answers instead of abstaining when context is insufficient or contradictory, without reflecting this uncertainty in their expressed confidence. This highlights a fundamental architectural gap: the RAG pipeline lacks a dedicated stage for contradiction detection and resolution before context is passed to the generation model.

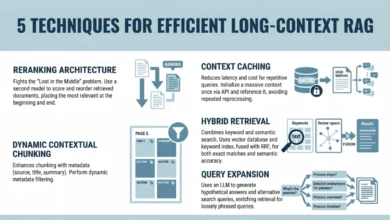

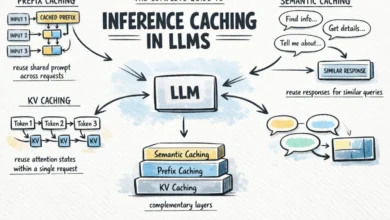

Building the Conflict Detection Layer

Addressing this architectural gap necessitates an explicit conflict detection layer positioned between the retrieval and generation phases. This layer examines every pair of retrieved documents to flag contradictions before the QA model processes the context. To maintain efficiency, embeddings for all retrieved documents are computed in a single batched forward pass, ensuring each document is encoded only once, regardless of how many pairs it participates in.
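A minimal sketch of the pairwise step follows, assuming the embeddings have already been produced in one batch (for example by a single SentenceTransformer.encode call over the list of retrieved texts). The three-dimensional vectors here are toy stand-ins for real embeddings, and the function names are illustrative rather than taken from the demo repository.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def pairwise_topic_sim(embeddings):
    """Score every unordered document pair; each embedding is computed once and reused."""
    return {
        (i, j): cosine(embeddings[i], embeddings[j])
        for i, j in combinations(range(len(embeddings)), 2)
    }

# Toy vectors: documents 0 and 1 point in similar directions, document 2 does not.
embeddings = [[1.0, 0.0, 0.0], [0.8, 0.6, 0.0], [0.0, 0.0, 1.0]]
sims = pairwise_topic_sim(embeddings)
for pair, value in sims.items():
    print(pair, round(value, 3))
```

Because the embeddings are encoded once up front, the per-pair work is only a dot product, which is why the extra stage adds little latency for small k.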

The experiment’s ConflictDetector employs two primary heuristics:

- Numerical Contradiction: This heuristic flags two topic-similar documents that contain non-overlapping "meaningful numbers." To prevent false positives, it filters out common, less significant numbers like years (1900–2099) and bare small integers (1–9) that appear ubiquitously in enterprise texts. For instance, in Scenario A, fin-001 contains ‘$4.2M’ and fin-002 contains ‘$6.8M’. With no intersection, a numerical conflict is detected.

- Contradiction Signal Asymmetry: This heuristic identifies documents discussing the same topic where one contains explicit contradiction tokens (e.g., "no longer," "revised," "outdated," "superseded," "increase," "decrease") that the other does not. The token set is categorized to allow for domain-specific tuning (e.g., a legal corpus might require a broader negation set). In Scenario B, hr-002 contains "no longer required," signaling a change absent in hr-001. Similarly, in Scenario C, api-002 contains "increased," which is not in api-001. Asymmetry in these signals triggers a conflict alert.

Both heuristics are gated by a topic_sim threshold (0.68 in this experiment, calibrated for all-MiniLM-L6-v2) to prevent flagging conflicts between semantically unrelated documents that coincidentally share a number or a negation word. This threshold requires recalibration for different embedding models and domains.
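The two heuristics and the similarity gate can be sketched roughly as follows. This is an approximation written from the description above, not the repository's ConflictDetector: the function names, the regular expression, the substring matching of tokens, and the sample sentences are all assumptions, and the topic_sim values passed in are made up for the example.

```python
import re

# Token list taken from the examples in the text; a real deployment would tune it.
CONTRADICTION_TOKENS = ("no longer", "revised", "outdated", "superseded", "increase", "decrease")
NUMBER_RE = re.compile(r"\d+(?:\.\d+)?")

def meaningful_numbers(text):
    """Numbers in the text, minus years (1900-2099) and bare integers 1-9."""
    kept = set()
    for tok in NUMBER_RE.findall(text):
        if "." not in tok:
            n = int(tok)
            if 1900 <= n <= 2099 or 1 <= n <= 9:
                continue
        kept.add(tok)
    return kept

def contradiction_signals(text):
    """Contradiction tokens present in the text (substring match, case-insensitive)."""
    lowered = text.lower()
    return {tok for tok in CONTRADICTION_TOKENS if tok in lowered}

def detect_conflict(text_a, text_b, topic_sim, threshold=0.68):
    """Return the list of heuristics that fired; empty if the pair is off-topic."""
    if topic_sim < threshold:
        return []
    reasons = []
    nums_a, nums_b = meaningful_numbers(text_a), meaningful_numbers(text_b)
    if nums_a and nums_b and nums_a.isdisjoint(nums_b):
        reasons.append("numerical")
    if contradiction_signals(text_a) != contradiction_signals(text_b):
        reasons.append("signal_asymmetry")
    return reasons

fin_old = "Acme Corp reported annual revenue of $4.2M for fiscal year 2023."
fin_new = "Following restatement, Acme Corp annual revenue for fiscal year 2023 is $6.8M."
hr_old = "All employees are required to be present in the office a minimum of 3 days per week."
hr_new = "Employees are no longer required to maintain a fixed in-office schedule."

print(detect_conflict(fin_old, fin_new, topic_sim=0.90))
print(detect_conflict(hr_old, hr_new, topic_sim=0.80))
print(detect_conflict(fin_old, hr_new, topic_sim=0.30))
```

Note how the year 2023 and the bare "3" in the HR text are filtered out before comparison. Without that filter, almost any two enterprise documents would appear to disagree numerically.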

Resolution Strategy: Cluster-Aware Recency

Once conflicts are detected, the pipeline implements a resolution strategy. For temporal conflicts, such as versioned documents or policy updates, the most recently timestamped document from each conflict cluster is retained. The "cluster-aware" aspect is crucial: a single top-k retrieval might yield multiple independent conflict clusters (e.g., financial data conflicts and API documentation conflicts). A naive approach that simply picks the single most recent document overall would inadvertently discard correct, updated information from other clusters.

Instead, the implementation constructs a conflict graph, identifies connected components using iterative Depth-First Search (DFS), and resolves each component independently. Non-conflicting documents pass through unaffected, while each conflict cluster contributes exactly one winner – the most recent document.
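A sketch of cluster-aware resolution is shown below, under a few assumptions: documents are identified by string IDs, each has a date, and conflicts arrive as ID pairs. The function name resolve_by_recency is hypothetical. The fin-001 and fin-002 dates come from Scenario A; the API and FAQ dates are placeholders invented for the example.

```python
from datetime import date

def resolve_by_recency(doc_dates, conflict_pairs):
    """Keep the newest document from each conflict cluster; pass the rest through."""
    # Build an undirected conflict graph over all retrieved documents.
    adjacency = {doc_id: set() for doc_id in doc_dates}
    for a, b in conflict_pairs:
        adjacency[a].add(b)
        adjacency[b].add(a)

    seen, kept = set(), []
    for start in doc_dates:
        if start in seen:
            continue
        # Iterative depth-first search collects one connected component.
        stack, component = [start], []
        seen.add(start)
        while stack:
            node = stack.pop()
            component.append(node)
            for neighbour in adjacency[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        # A lone document is a component of size one, so it survives unchanged.
        kept.append(max(component, key=lambda d: doc_dates[d]))
    return kept

doc_dates = {
    "fin-001": date(2024, 1, 15),
    "fin-002": date(2024, 4, 3),
    "api-001": date(2023, 2, 1),   # placeholder date
    "api-002": date(2023, 9, 1),   # placeholder date
    "faq-001": date(2022, 5, 1),   # placeholder, conflicts with nothing
}
conflicts = [("fin-001", "fin-002"), ("api-001", "api-002")]
kept = resolve_by_recency(doc_dates, conflicts)
print(kept)
```

Picking only the single newest document overall would have returned fin-002 alone and dropped both api-002 and the unrelated FAQ entry, which is the failure the per-component approach avoids.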

Demonstrated Success: Conflict-Aware RAG in Action

With the conflict detection and resolution layer activated, the experiment’s Phase 2 demonstrates a dramatic improvement in answer quality.

- Scenario A (Financial Restatement): The RAG system now correctly answers "$6.8M," explicitly noting that conflicting sources were detected and the answer was derived from the most recent document (2023-Annual-Report-Revised, dated 2024-04-03).

- Scenario B (HR Policy Update): The system accurately states, "employees are no longer required to maintain a fixed in-office schedule," having prioritized the HR-Policy-November-2023 document.

- Scenario C (API Documentation): The correct API rate limit of "500 requests per minute" is provided, based on API-Reference-v2.0.

Notably, the confidence scores for these correct answers remain largely consistent with those of the incorrect answers in Phase 1 (78-81%). This powerfully reinforces the initial observation: confidence alone is not a reliable indicator of accuracy in the face of conflicting context. The fundamental change is the architectural intervention that ensures only factually consistent and up-to-date information reaches the LLM.

Limitations and Future Directions

While effective for common enterprise scenarios, the heuristic-based conflict detection has its limitations:

- Paraphrased Conflicts: The current heuristics target numerical differences and explicit contradiction tokens. They may not catch subtle semantic contradictions expressed through paraphrasing (e.g., "the service was retired" vs. "the service is currently available"). For such cases, more sophisticated Natural Language Inference (NLI) models, which can score entailment versus contradiction between sentence pairs, offer a more robust solution, albeit with increased computational overhead (e.g., cross-encoder/nli-deberta-v3-small at ~80 MB). The ConflictDetector class is designed for extensibility to incorporate such methods.

- Non-Temporal Conflicts: Recency-based resolution is ideal for versioned data but inappropriate for other conflict types, such as disagreements in expert opinions, conflicts arising from different methodologies, or queries where multiple perspectives are desired. In these situations, the ConflictReport data structure provides the necessary information to craft alternative responses, such as surfacing both claims, flagging for human review, or prompting the user for clarification.

- Scale: Pairwise comparison has an O(k²) complexity, where ‘k’ is the number of retrieved documents. While efficient for small k (e.g., k=3 or k=20), pipelines retrieving 100 or more documents may require pre-indexing known conflict pairs or more advanced cluster-based detection methods to maintain performance.
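To make the scale point concrete, the number of unordered document pairs is k(k-1)/2. The short snippet below simply evaluates that count for the values of k mentioned above; the helper name pair_count is illustrative.

```python
from math import comb

def pair_count(k):
    """Number of unordered document pairs a pairwise detector must compare."""
    return comb(k, 2)

for k in (3, 20, 100):
    print(f"k={k}: {pair_count(k)} pairs")
```

At k=100 the detector faces 4,950 comparisons per query, against 190 at k=20 and 3 at k=3.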

The research community is actively exploring more sophisticated solutions. Cattan et al. (2025) introduced the CONFLICTS benchmark to specifically evaluate how models handle knowledge conflicts, identifying categories like freshness, conflicting opinions, complementary information, and misinformation. Ye et al. (2026) proposed Transparent Conflict Resolution (TCR), a framework that disentangles semantic relevance from factual consistency using dual contrastive encoders. Gao et al. (2025) developed CLEAR (Conflict-Localized and Enhanced Attention for RAG), which probes LLM hidden states to detect where conflicting knowledge manifests, guiding models towards accurate evidence integration. The consistent finding across all this advanced research mirrors the practical demonstration: retrieval quality and answer quality are distinct dimensions, and the gap between them, largely unacknowledged, is a critical area for improvement.

Practical Recommendations for Developers and Enterprises

The findings from this experiment offer clear, actionable steps for improving the reliability of RAG systems:

- Integrate a Conflict Detection Layer: Implement a conflict detection stage immediately before the generation phase in your RAG pipeline. Even simple, lightweight heuristics like numerical contradiction and contradiction signal asymmetry can effectively catch the most common conflict patterns found in enterprise data, such as financial restatements, policy updates, and versioned documentation. The provided ConflictDetector class offers a ready-made blueprint for this.

- Differentiate Conflict Types: Not all conflicts are equal. Distinguish between temporal conflicts (where recency dictates the correct answer), factual disputes (which may require human arbitration), and opinion conflicts (where presenting multiple perspectives is often the best approach). A blanket resolution strategy can inadvertently introduce new failure modes.

- Log All Conflict Reports: Actively log every ConflictReport generated in a production environment. This data is invaluable for understanding the frequency and nature of conflicts within your specific corpus, identifying commonly conflicting document pairs, and pinpointing query patterns that trigger these issues. This empirical data is far more actionable than any synthetic benchmark.

- Surface Uncertainty When Unresolved: If a conflict cannot be definitively resolved by the system, it is crucial to communicate this uncertainty to the user. Instead of confidently presenting a potentially incorrect answer, the RAG system should clearly state the conflicting information and, if possible, explain the basis for its choice (e.g., "I found conflicting information on this topic. The June 2023 policy states X; the November 2023 update states Y. The November document is more recent."). This builds trust and empowers the user to make an informed decision.
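The last three recommendations can be combined in a small sketch: branch on the conflict type, log the event, and show the user what was found. The Claim dataclass, the render_conflict function, the logger name, and the conflict-type labels are all hypothetical and do not mirror the repository's ConflictReport fields. The document name HR-Policy-June-2023 is also assumed, and the claim texts are paraphrases of Scenario B.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("rag.conflicts")

@dataclass
class Claim:
    source: str      # document identifier
    published: str   # ISO year-month such as "2023-11"; sorts chronologically as text
    text: str        # the claim as stated in that document

def render_conflict(claims, conflict_type):
    """Build a user-facing message that exposes the conflict instead of hiding it."""
    ordered = sorted(claims, key=lambda c: c.published)
    # Log every conflict so its frequency and sources can be analysed later.
    logger.info("conflict type=%s sources=%s", conflict_type, [c.source for c in ordered])
    lines = ["I found conflicting information on this topic."]
    lines += [f"- {c.source} ({c.published}): {c.text}" for c in ordered]
    if conflict_type == "temporal":
        # Recency is a valid tie-breaker only for versioned or superseded content.
        lines.append(f"Answering from {ordered[-1].source} because it is the most recent.")
    else:
        lines.append("These sources could not be reconciled automatically; both are shown for review.")
    return "\n".join(lines)

claims = [
    Claim("HR-Policy-November-2023", "2023-11", "No fixed in-office schedule is required."),
    Claim("HR-Policy-June-2023", "2023-06", "Employees must be in the office 3 days per week."),
]
message = render_conflict(claims, "temporal")
print(message)
```

Passing any other conflict type, such as a hypothetical "opinion" label, skips the recency tie-breaker and leaves the decision with the reader.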

Running the Full Demo

The complete source code for the experiment is available at: https://github.com/Emmimal/rag-conflict-demo.

To run the demo:

pip install -r requirements.txt

python rag_conflict_demo.py

Options include --quiet to suppress model loading logs, --test to run unit tests without model downloads, and --no-color for plain terminal output. The demonstration relies on sentence-transformers>=2.7.0 (for all-MiniLM-L6-v2) and transformers>=4.40.0 (for deepset/minilm-uncased-squad2), both of which download automatically and cache locally, requiring no API keys or HuggingFace authentication. The terminal outputs presented in this article are unmodified results from a local Windows machine running Python 3.9+ in a virtual environment, fully reproducible by any reader.

The Broader Implications for AI Trust

The prevailing perception is that the retrieval problem in RAG systems is largely solved, with vector search being fast, accurate, and well-understood after years of optimization. However, this experiment reveals that the context-assembly problem remains largely unsolved and, critically, unmeasured. The gap between retrieving "correct documents" and producing a "correct answer" is significant, common, and generates confidently wrong answers without any overt signal of malfunction.

The proposed solution does not demand larger, more complex models, entirely new architectures, or extensive retraining. It requires a single, additional pipeline stage that leverages existing embeddings, incurring virtually zero marginal latency. This simple, efficient architectural enhancement, demonstrated to run in about thirty seconds on a standard laptop, can profoundly impact the trustworthiness and accuracy of RAG systems. The fundamental question for any organization deploying RAG is whether their production system includes an equivalent conflict resolution layer—and if not, the extent of the silent, confident inaccuracies it is currently producing. The integrity of AI-powered information relies on addressing this critical oversight.