Democratizing Access to Organizational Knowledge Graphs for Business Users on Google Cloud Platform

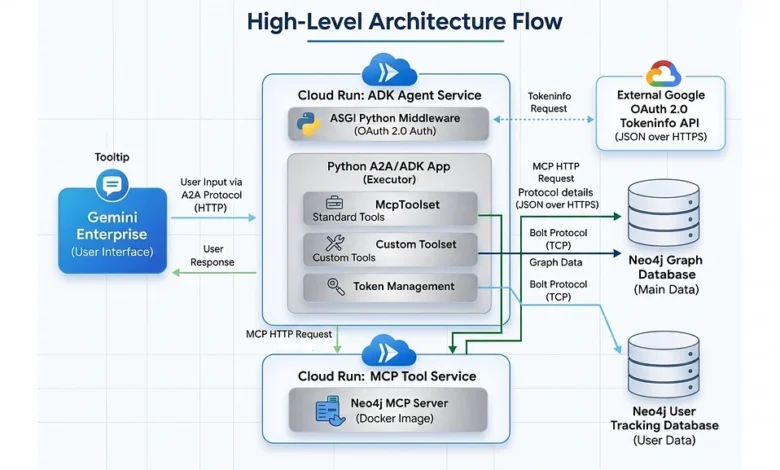

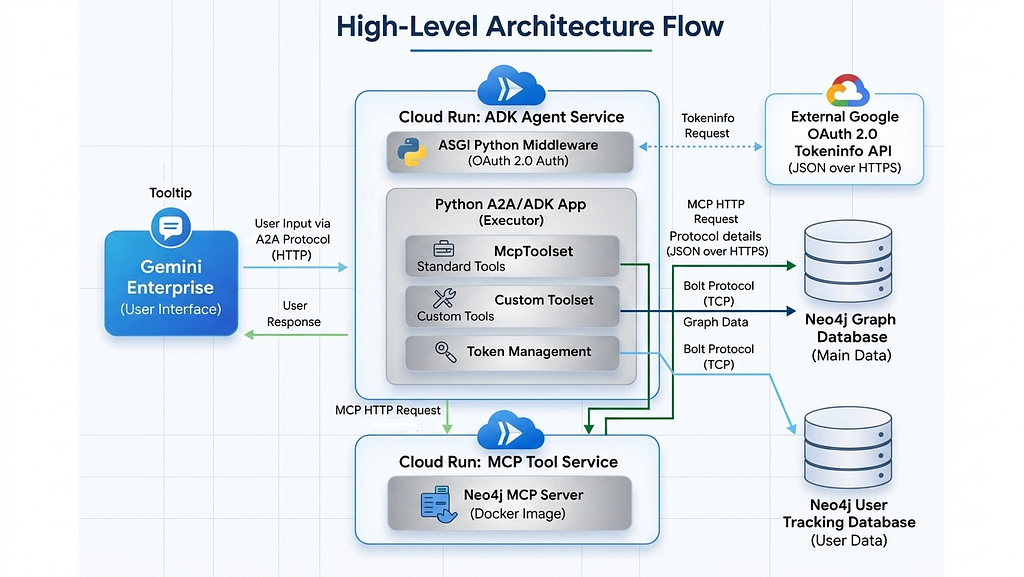

The transition from experimental generative artificial intelligence (AI) prototypes to robust, production-ready enterprise solutions represents the current frontier of corporate digital transformation. While developing a basic AI agent on a local environment has become relatively straightforward, the deployment of these systems into highly regulated, scalable, and cost-sensitive enterprise ecosystems remains a formidable technical challenge. To address this gap, Neo4j and Google Cloud have introduced a sophisticated architectural framework designed to integrate graph database capabilities directly into Google Gemini Enterprise. By utilizing the Model Context Protocol (MCP), the Google Agent Development Kit (ADK), and the Agent-to-Agent (A2A) protocol, organizations can now deploy decoupled, secure, and highly scalable AI agents that allow non-technical business users to query complex organizational knowledge graphs using natural language.

The Evolution of Enterprise Knowledge Retrieval

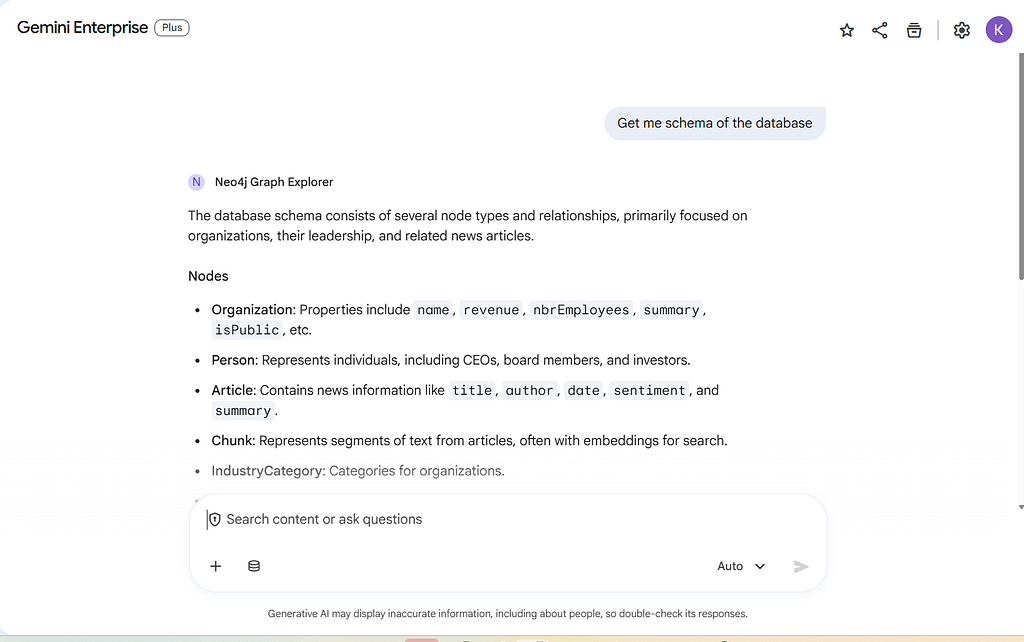

For years, enterprise data retrieval was defined by rigid SQL queries and static dashboards. The advent of Large Language Models (LLMs) promised a more intuitive interface, but early "Chat with your Data" implementations often struggled with "hallucinations" and a lack of contextual understanding regarding the relationships between data points. Knowledge Graphs (KGs), particularly those powered by Neo4j, provide the structural integrity and relational context that LLMs require to produce accurate results.

The current challenge shifted from "can we query the graph?" to "can we query the graph securely and at scale?" The integration of Neo4j with Google Gemini Enterprise via the A2A protocol marks a significant milestone in this evolution. This framework allows the Gemini interface to act as the primary user gateway while delegating specialized graph reasoning tasks to dedicated backend agents.

A Decoupled Architectural Philosophy

The core of the new Neo4j-Gemini integration lies in its microservices-based architecture. Unlike monolithic AI applications, this system separates the reasoning engine from the data execution layer. This decoupling is achieved through two primary Google Cloud Run services: one hosting the Neo4j MCP server and another hosting the Python-based Agent Development Kit (ADK) agent.

This separation of concerns offers several advantages for enterprise IT departments. First, it allows for independent scaling; if the organization sees a spike in natural language processing needs but the database load remains constant, the ADK service can scale horizontally without affecting the database connection pool. Second, it enhances security by ensuring that database credentials and execution logic are isolated within their own protected environments, communicating only via secure, authenticated HTTP protocols.

Technical Chronology: Building the Enterprise Agent

The deployment of a production-ready graph agent follows a rigorous logical progression, moving from environment orchestration to security implementation and finally to user-facing integration.

1. Orchestrating the Backend with Python ADK

The logic of the system is managed by a modular Python application that serves as the "brain" of the operation. This component utilizes the Google ADK to interface with Gemini models and the A2A protocol to communicate with the Gemini Enterprise UI.

At this stage, developers implement a specialized LlmAgent. This agent is not merely a chatbot; it is a reasoning engine equipped with a "planner" that decides whether to use standard database exploration tools or custom-coded Python functions. For instance, while the Model Context Protocol (MCP) provides general tools for schema exploration and basic querying, enterprise-specific logic—such as calculating investment returns or identifying high-risk supply chain nodes—can be injected as custom FunctionTools.

2. Implementing the Request Lifecycle

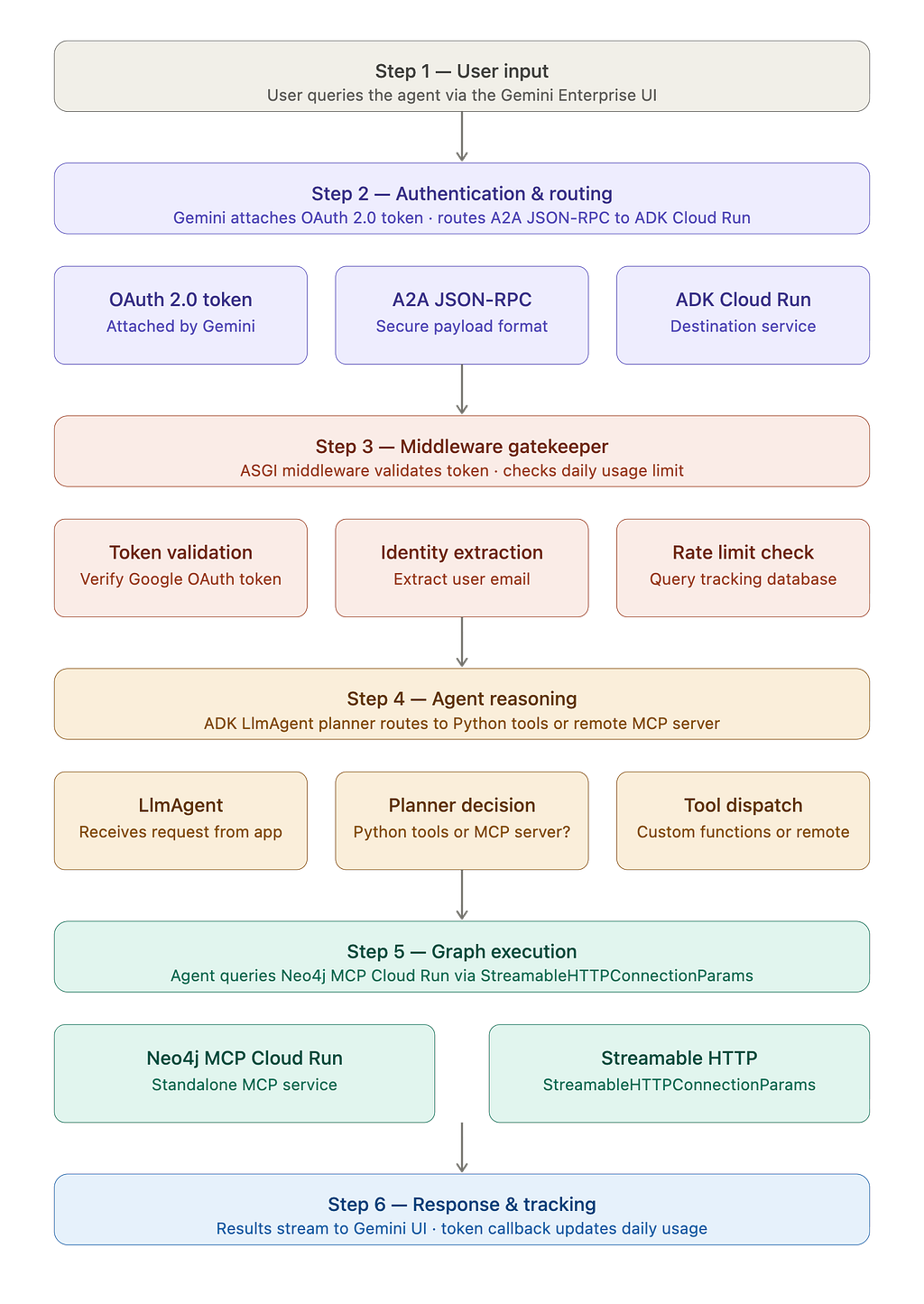

Understanding the journey of a user query is essential for maintaining system integrity. When a business user enters a prompt into the Gemini Enterprise UI, the following sequence occurs:

- Authentication: Gemini attaches a Google OAuth 2.0 Access Token to the request.

- Routing: The request is routed via an A2A JSON-RPC payload to the Cloud Run service.

- Validation: An Asynchronous Server Gateway Interface (ASGI) middleware intercepts the request to validate the user’s identity and check their authorization status.

- Reasoning: The ADK agent analyzes the query. If the user asks about "investments," the agent prioritizes specialized custom tools; if the query is general, it utilizes the remote MCP server.

- Execution and Feedback: The agent queries the Neo4j database, receives the data, and streams the response back to the user in real-time.

Security and Governance: The Enterprise Mandate

In an enterprise setting, security cannot be an afterthought. The Neo4j graph agent architecture incorporates multiple layers of protection to ensure data sovereignty and prevent system abuse.

OAuth 2.0 and ASGI Middleware

Access control is managed at the edge. The system utilizes custom ASGI middleware that validates incoming Google Bearer tokens against Google’s official UserInfo endpoints. This ensures that only authenticated employees within the organization’s domain can interact with the agent. This "gatekeeper" logic extracts user emails and identities, providing a clear audit trail for every query processed by the LLM.

Semantic Guardrails and Injection Defense

One of the most significant risks in GenAI deployment is prompt injection—where a user attempts to trick the AI into revealing system prompts or executing unauthorized database commands. To counter this, the framework implements OWASP-aligned guardrails. These include pattern-matching filters that block keywords like "ignore previous instructions" or "drop database." Furthermore, the system analyzes the ratio of special characters in a query to detect potential "buffer attacks" or attempts to confuse the tokenizer.

Granular Cost Control and Token Management

Generative AI operations incur costs that can become unpredictable if left unmonitored. To provide IT managers with "Granular Cost Control," the architecture includes a dedicated Token Management system.

This system uses a secondary Neo4j database specifically to track real-time token usage per user. By extracting metrics via ADK callbacks, the TokenManager can enforce daily quotas. If a user exceeds their allocated limit, the middleware intercepts the request before it reaches the expensive LLM processing stage, returning a polite notification that the daily limit has been reached. This prevents "runaway" costs and ensures that AI resources are distributed fairly across the organization.

Deployment and Scalability on Google Cloud Platform

The use of Google Cloud Run and Secret Manager is pivotal for the "production-ready" status of this agent. By containerizing the Python ADK application, organizations can leverage Google’s serverless infrastructure, which handles all underlying server management, including patching and scaling.

The deployment process involves:

- Secret Management: Storing Neo4j URIs, database credentials, and Google API keys in Google Secret Manager rather than hardcoding them in the application.

- Cloud Run Deployment: Launching the MCP server and the ADK agent as separate services. The MCP server is typically configured for read-only access by default, providing an additional layer of data protection.

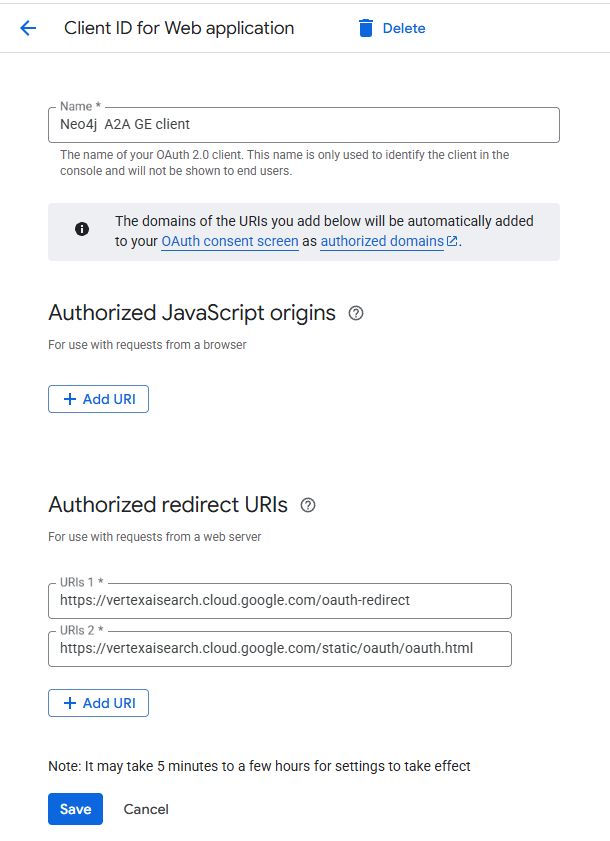

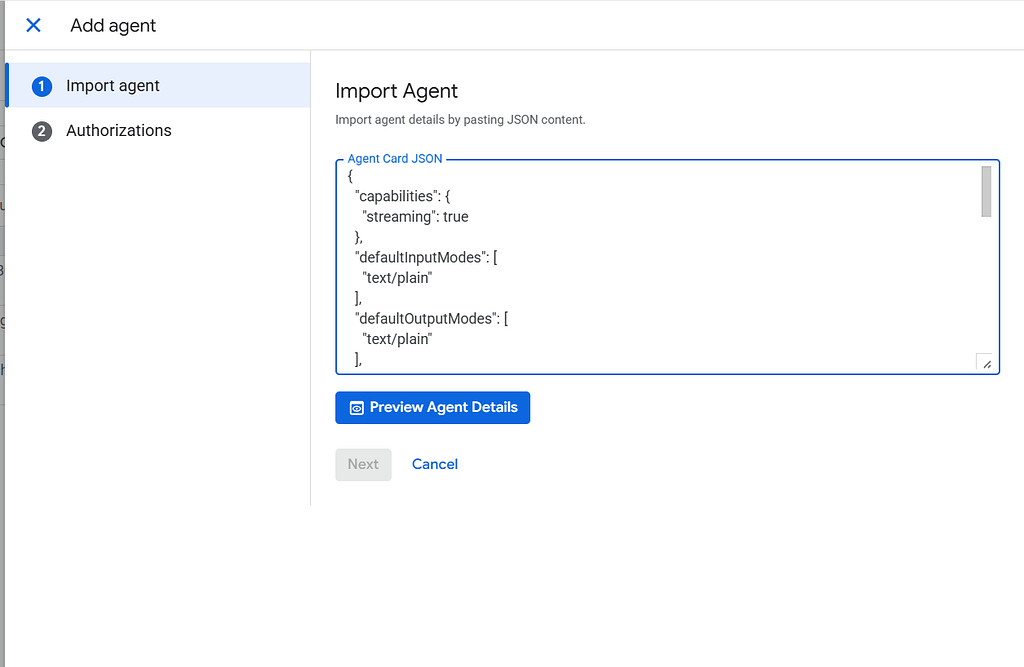

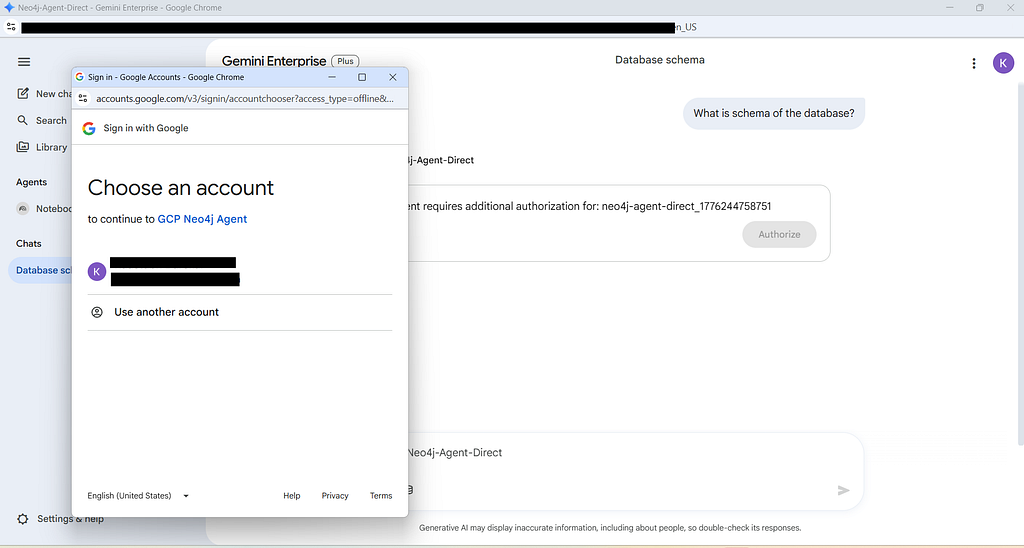

- Gemini Registration: The final step involves registering the Cloud Run service URL within the Gemini Enterprise "Agents" sidebar. Because the application uses the

A2AStarletteApplicationframework, it automatically generates the required JSON-RPC discovery files and Agent Cards needed for Gemini to recognize its capabilities.

Broader Impact and Industry Implications

The ability to democratize access to knowledge graphs has profound implications for various sectors. In finance, analysts can use natural language to trace "hidden" relationships between entities in anti-money laundering (AML) investigations. In healthcare, researchers can query complex interactions between proteins, diseases, and drugs without needing to write Cypher (Neo4j’s query language).

Market analysts suggest that the integration of Graph Technology with Generative AI—often referred to as GraphRAG (Graph-based Retrieval-Augmented Generation)—is set to become the standard for enterprise AI. According to industry data, organizations using graph-based context in their AI models report up to a 30% increase in accuracy and a significant reduction in hallucination rates compared to standard vector-only approaches.

Conclusion: The Future of the Agentic Enterprise

By leveraging a fully decoupled microservices architecture on GCP, Neo4j and Google have provided a blueprint for the "Agentic Enterprise." This system does not just provide a chat interface; it provides a secure, observable, and cost-controlled pipeline that turns complex data into actionable business intelligence.

As organizations continue to move away from local AI experiments toward global deployments, the emphasis on security middleware, token tracking, and protocol standardization (MCP/A2A) will be the deciding factor in the success of their AI strategies. This architecture ensures that the power of the organizational knowledge graph is no longer confined to data scientists but is available to every business user with a question.