Enhancing Data Science Workflows: The Power of AI Skills for Automation and Efficiency

The realm of data science is undergoing a profound transformation, driven by the increasing sophistication of Artificial Intelligence, particularly Large Language Models (LLMs). While initial applications focused heavily on code generation, the industry is rapidly moving towards integrating AI across the entire data science workflow, a shift exemplified by the adoption of modular AI "skills." These skills represent a crucial evolution in how AI interacts with and optimizes complex, repetitive tasks, moving beyond simple prompting to offer a more reliable, consistent, and scalable approach to data analysis and visualization.

The Rise of Modular AI in Data Science

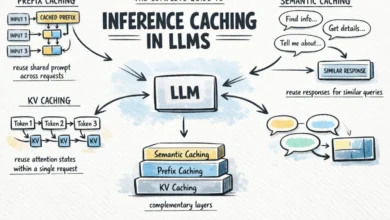

The concept of an AI skill is fundamentally about reusability and structured execution. A skill is a self-contained package of instructions, often accompanied by supporting files such as scripts, templates, and examples. Its primary purpose is to let an AI execute recurring workflows more reliably and consistently. At its core, each skill requires a SKILL.md file, which serves as a manifest containing essential metadata, such as the skill’s name and a detailed description, along with explicit instructions for how the skill operates. This structured approach contrasts sharply with the traditional method of placing every instruction directly in the main context window of a coding agent such as Claude Code or Codex.
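
To make the manifest idea concrete, here is a minimal Python sketch that splits a SKILL.md-style file into metadata and instructions. The layout it assumes (a `---`-delimited header of `key: value` lines followed by free-form instructions) is an illustration only, as are the `SkillManifest` and `parse_skill_md` names and the example text; the exact format depends on the tool that consumes the skill.

```python
from dataclasses import dataclass

# Hypothetical SKILL.md content: a "---" delimited header, then instructions.
EXAMPLE_SKILL_MD = """\
---
name: storytelling-viz
description: Turn a dataset into one chart with a headline, caveats, and a source.
---
1. Inspect the data and pick one insight.
2. Recommend a chart type and explain the trade-offs.
3. Render the chart with a headline, caveats, and the data source.
"""


@dataclass
class SkillManifest:
    name: str
    description: str
    instructions: str


def parse_skill_md(text: str) -> SkillManifest:
    """Split a SKILL.md string into header metadata and an instruction body."""
    parts = text.split("---", 2)
    if len(parts) != 3 or parts[0].strip():
        raise ValueError("expected a '---' delimited header at the top of the file")
    _, header, body = parts

    # Read simple "key: value" pairs from the header (assumed format).
    meta = {}
    for line in header.strip().splitlines():
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()

    missing = {"name", "description"} - meta.keys()
    if missing:
        raise ValueError(f"missing metadata fields: {sorted(missing)}")
    return SkillManifest(meta["name"], meta["description"], body.strip())


manifest = parse_skill_md(EXAMPLE_SKILL_MD)
print(manifest.name)
```

Keeping the name and description separate from the instruction body is what lets a tool read the short header without reading everything else in the package.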

The strategic advantage of employing skills lies in their ability to manage the LLM’s context window more efficiently. Initially, the AI system only needs to load the lightweight metadata associated with a skill. The comprehensive instructions and bundled resources are then accessed dynamically, only when the AI determines that a particular skill is relevant and necessary for the task at hand. This on-demand loading mechanism prevents the main context from becoming overly cumbersome, improving processing speed and reducing computational overhead. The growing recognition of this modular approach is evident in the emergence of public repositories like skills.sh, which curate and share a diverse collection of pre-built AI skills for various applications.
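
The on-demand loading described above can be sketched in a few lines of Python. Everything here is hypothetical: the `LazySkill` class, the `publish-post` skill name, and especially the keyword-based `is_relevant` check, which is a toy stand-in for the model itself deciding that a skill applies.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class LazySkill:
    name: str
    description: str
    keywords: tuple[str, ...]          # toy relevance signal (assumption)
    load_body: Callable[[], str]       # reads the full instructions when called
    _body: Optional[str] = field(default=None, repr=False)

    @property
    def loaded(self) -> bool:
        return self._body is not None

    def body(self) -> str:
        # Load the full instructions once, the first time they are needed.
        if self._body is None:
            self._body = self.load_body()
        return self._body


def is_relevant(task: str, skill: LazySkill) -> bool:
    """Toy check: in a real agent the model makes this decision."""
    task_lower = task.lower()
    return any(keyword in task_lower for keyword in skill.keywords)


def build_context(task: str, skills: list[LazySkill]) -> str:
    """Always include lightweight metadata; include bodies only when relevant."""
    sections = ["Available skills:"]
    sections += [f"- {s.name}: {s.description}" for s in skills]
    for s in skills:
        if is_relevant(task, s):
            sections.append(f"\n[{s.name} instructions]\n{s.body()}")
    return "\n".join(sections)


viz = LazySkill(
    "storytelling-viz",
    "Build one insight-driven chart.",
    ("chart", "visualization"),
    lambda: "Full visualization instructions...",
)
publish = LazySkill(
    "publish-post",
    "Publish a finished chart to the blog.",
    ("publish", "blog"),
    lambda: "Full publishing instructions...",
)

context = build_context("Make a chart of exercise time by year", [viz, publish])
print(viz.loaded, publish.loaded)  # True False
```

Both skills are advertised in the context through their one-line descriptions, but only the visualization skill's full instructions are loaded for this task.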

Navigating the Complexities of Data Science Workflows

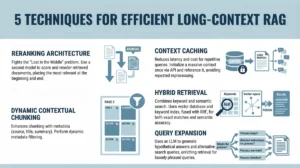

Data scientists frequently encounter workflows that, while critical, are characterized by their repetitive nature, semi-structured processes, and heavy reliance on domain-specific knowledge. These tasks often prove challenging to manage effectively with a single, monolithic prompt, highlighting the need for more sophisticated AI integration. Examples include data cleaning and preprocessing, feature engineering, model selection and training, and perhaps most visibly, data visualization.

Historically, data professionals have spent a significant portion of their time on these routine operations. Commonly cited industry estimates put data preparation and cleaning alone at 40% to 60% of a data scientist’s time; these tasks are foundational, but they take time away from higher-level analytical and strategic work. The advent of LLMs offered a glimpse into automating code generation, but the true potential lies in automating entire processes, not just isolated code snippets. This is where AI skills step in, providing a framework for robust, repeatable automation.

A Practical Application: Automating Weekly Data Visualization

To illustrate the tangible benefits of AI skills, consider a case study: the automation of a weekly data visualization routine. A data professional who has created one data visualization per week since 2018 recognized how repetitive and time-consuming the process was (approximately one hour each week). This made it an ideal candidate for automation through AI skills.

The manual workflow for this weekly visualization typically involved several steps:

- Dataset Search: Identifying a novel dataset from various public sources.

- Data Ingestion and Exploration: Loading the data, understanding its structure, and performing initial exploratory analysis.

- Visualization Design and Implementation: Selecting appropriate chart types, designing the visual aesthetic, and writing code to generate the visualization.

- Storytelling and Contextualization: Crafting a compelling narrative around the insights derived from the visualization, including headlines, descriptions, and caveats.

- Publishing: Preparing the visualization and its accompanying narrative for publication on a blog or platform.

With the integration of AI skills, this workflow was significantly streamlined. While the initial dataset search often remains a manual, exploratory step, subsequent stages can be largely automated. For this specific project, two distinct skills were developed: one focused on generating the visualization itself (the "storytelling-viz" skill) and another for publishing the final output.

Using the storytelling-viz skill within an environment like Codex Desktop, the data professional could query data from a database (e.g., Google BigQuery, reached through a Model Context Protocol, or MCP, server that gives the AI access to external tools) and instruct the AI to generate a visualization. In one example, the AI analyzed an Apple Health dataset and surfaced an insight about annual exercise time versus calories burned. The AI not only identified the insight but also recommended a suitable chart type, with detailed reasoning and an assessment of trade-offs, all within minutes. The output featured an insight-driven headline, a clean interactive visualization, pertinent caveats, and the data source, reducing a laborious one-hour task to less than ten minutes. This gain in efficiency lets data scientists dedicate more time to critical thinking, interpretation, and strategic decision-making.

The Iterative Development of Robust AI Skills

Building effective AI skills is not a one-time event but an iterative process of planning, testing, and refinement. The journey from a conceptual idea to a fully functional skill involves several key stages, often assisted by the very AI it seeks to empower.

1. Strategic Planning with AI: The initial phase involves clearly defining the problem and desired outcome. When the existing manual workflow and the automation goals are described to an LLM, it can help outline the technical stack, the requirements, and the characteristics of a "good" output. Coding agents like Claude Code or Codex can even bootstrap the initial version of a SKILL.md file, effectively triggering a "skill to create a skill." In other words, the AI can help build the very instructions it will later follow.

2. Iterative Testing and Refinement: The first iteration of a skill rarely achieves perfection. For instance, the initial version of the visualization skill might generate visualizations, but with suboptimal chart types, inconsistent visual styles, or a failure to adequately highlight key takeaways. Bridging this gap between initial functionality and ideal performance necessitates iterative improvements, drawing on multiple strategies:

- Leveraging Personal Expertise: Data professionals often possess years of accumulated knowledge, best practices, and aesthetic preferences. By feeding the AI examples of past visualizations along with explicit style guidelines, the AI can learn to summarize these principles and update the skill’s instructions accordingly. This ensures the automated output aligns with established quality standards and brand consistency.

- Integrating External Research: To enhance scalability and robustness, AI can be tasked with researching external resources. This includes consulting well-known data visualization design principles from reputable sources (e.g., Edward Tufte, Stephen Few) or analyzing similar public skills. This broadens the skill’s knowledge base beyond the user’s explicit documentation, introducing perspectives and strategies that might not have been initially considered.

- Learning from Extensive Testing: Rigorous testing across diverse datasets is paramount. By applying the skill to numerous varied datasets, developers can observe its behavior, evaluate the quality of its output against human-generated alternatives, and identify concrete areas for improvement. This might reveal issues such as a lack of clear data source attribution, inconsistent font usage, or an inability to adapt to different data scales. Each identified shortcoming provides an opportunity to refine the skill’s instructions, add new conditional logic, or incorporate specific error handling mechanisms. For example, testing might lead to updates ensuring consistent branding, appropriate handling of data sources in captions, or dynamic font sizing based on chart complexity.
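
The testing strategy above lends itself to a small automated check that runs over every test output. The following Python sketch is illustrative only: the `VizOutput` fields, the `find_issues` helper, and the two sample records (with made-up dataset names, headlines, and fonts) are assumptions, not the structure of the actual skill's output.

```python
from dataclasses import dataclass


@dataclass
class VizOutput:
    dataset: str
    headline: str
    source: str                # data source shown in the caption
    fonts: tuple[str, ...]     # font families used in the chart


def find_issues(out: VizOutput) -> list[str]:
    """Return the shortcomings found in one generated visualization."""
    issues = []
    if not out.headline.strip():
        issues.append("missing headline")
    if not out.source.strip():
        issues.append("missing data source attribution")
    if len(set(out.fonts)) > 1:
        issues.append("inconsistent font usage")
    return issues


# Made-up test runs standing in for outputs from varied datasets.
runs = [
    VizOutput("dataset_a", "Example headline A", "Example source A", ("Inter", "Inter")),
    VizOutput("dataset_b", "Example headline B", "", ("Inter", "Arial")),
]

report = {run.dataset: find_issues(run) for run in runs}
for dataset, issues in report.items():
    print(dataset, issues or "ok")
```

Each issue that shows up repeatedly in such a report points to a concrete instruction to add or tighten in the skill.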

Broader Implications for the Data Science Landscape

The successful implementation of AI skills, as demonstrated by the visualization automation project, holds significant implications for the broader data science community:

When AI Skills are Most Beneficial: AI skills are particularly valuable for recurring data science tasks that exhibit specific characteristics:

- Repetitive Nature: Tasks performed frequently, often on a schedule.

- Semi-structured Process: Workflows that follow a general pattern but require some degree of flexibility or adaptation.

- Domain Knowledge Dependence: Tasks that benefit from specialized expertise or best practices.

- Complexity Beyond Single Prompts: Workflows too intricate or multi-staged to be effectively managed by a single, simple LLM prompt.

This includes tasks such as automated report generation, anomaly detection pipelines, A/B testing analysis, or even routine model monitoring and retraining.

The Power of Modularity: A crucial takeaway is the importance of breaking down complex workflows into independent, reusable components. For instance, separating the visualization generation skill from a publishing skill enhances modularity. This not only makes each component easier to develop and maintain but also increases its reusability across different workflows. A visualization skill developed for a weekly blog might also be leveraged for internal dashboards or client presentations, maximizing efficiency.
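
As a rough analogy for this separation, the Python sketch below treats each skill as an independent function that communicates through a shared `Chart` object. All names here (`Chart`, `storytelling_viz`, `publish_to_blog`, `embed_in_dashboard`, `run`) and the sample rows are hypothetical; real skills are instruction packages rather than functions, so this only illustrates the design choice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Chart:
    headline: str
    html: str


def storytelling_viz(rows: list[dict], headline: str) -> Chart:
    """Stand-in for the visualization skill: renders rows as a simple HTML list."""
    items = "".join(f"<li>{r['label']}: {r['value']}</li>" for r in rows)
    return Chart(headline, f"<h2>{headline}</h2><ul>{items}</ul>")


def publish_to_blog(chart: Chart) -> str:
    """Stand-in for the publishing skill."""
    return f"<article>{chart.html}</article>"


def embed_in_dashboard(chart: Chart) -> str:
    """A second consumer that reuses the same chart unchanged."""
    return f"<section class='tile'>{chart.html}</section>"


def run(rows: list[dict], headline: str, deliver: Callable[[Chart], str]) -> str:
    # The visualization step does not know or care where its output goes.
    return deliver(storytelling_viz(rows, headline))


rows = [{"label": "A", "value": 1}, {"label": "B", "value": 2}]  # made-up values
blog_page = run(rows, "Example headline", publish_to_blog)
dashboard_tile = run(rows, "Example headline", embed_in_dashboard)
```

Because the visualization step has no knowledge of its destination, adding a new destination means writing one new delivery step rather than touching the chart logic.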

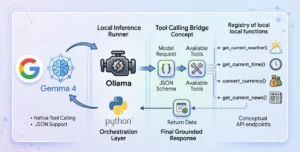

Synergy with Other AI Tools: AI skills are not designed to operate in isolation. They combine naturally with other agent tooling, such as Model Context Protocol (MCP) servers. The ability to combine a BigQuery MCP with a visualization skill in a single command exemplifies this synergy. MCPs give the AI access to external tools and data sources, while skills provide the structured process for a given task. Together, they allow AI models not only to reach the data and tools they need but also to execute complex, multi-step operations in line with predefined protocols. In this arrangement, AI is not just a tool for isolated tasks but an orchestrator of the entire data science pipeline.

The Human Element in the Age of AI

Despite the remarkable capabilities of AI in automating up to 80% of repetitive data science tasks, the human element remains irreplaceable. The data professional’s continued engagement in their weekly visualization project, even with significant automation, underscores this point. What began in 2018 as a technical exercise to practice Tableau evolved into a ritual focused on discovery, intuition, and storytelling.

In an AI-augmented future, the data scientist’s role shifts from rote execution to higher-order functions:

- Curiosity and Exploration: Identifying novel datasets and framing insightful questions.

- Data Intuition and Storytelling: Interpreting AI-generated insights, refining narratives, and ensuring ethical and contextual understanding.

- Critical Evaluation: Overseeing AI outputs, identifying biases, and validating findings.

- Skill Development and Refinement: Designing, testing, and continuously improving the AI skills themselves.

The process of discovery, the sharpening of data intuition, and the unique human ability to see the world through the lens of data are aspects that AI currently complements rather than replaces. The evolution of AI skills ensures that data professionals can offload the monotonous, allowing them to focus on the truly creative, strategic, and profoundly human aspects of their discipline. This symbiotic relationship promises not only increased efficiency but also a deeper, more insightful engagement with data in the years to come.