llms

Artificial Intelligence

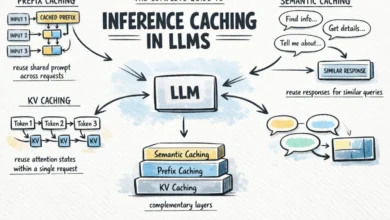

The Complete Guide to Inference Caching in LLMs

The rapidly evolving landscape of large language models (LLMs) has introduced unprecedented capabilities, but also significant challenges related to operational…
