large

-

Cloud Computing

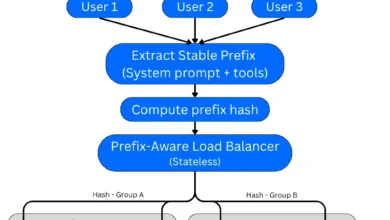

Unlocking Massive Savings and Speed: Advanced Prompt Caching Architectures for Large Language Model Inference

Prompt caching, a sophisticated technique designed to significantly reduce the cost and latency of large language model (LLM) inference, has…

Read More » -

Cloud Computing

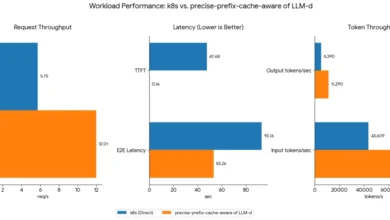

Optimizing Large Language Model Serving: Beyond Traditional Load Balancing

The landscape of artificial intelligence is rapidly evolving, with Large Language Models (LLMs) at the forefront of this transformation. As…

Read More »