Meta Revolutionizes Software Quality with Just-in-Time AI-Driven Testing, Boosting Bug Detection Fourfold

Meta has unveiled a Just-in-Time (JiT) testing approach that dynamically generates tests during the code review process, a significant departure from traditional, manually maintained test suites. The approach, detailed in Meta’s engineering blog and accompanying research, is reported to deliver approximately a fourfold increase in bug detection within AI-assisted development environments. The shift signals a fundamental re-evaluation of software testing methodologies, driven by the escalating influence of artificial intelligence in code generation and modification.

The Evolving Landscape of Software Development and Traditional Testing’s Limitations

For decades, software development has relied heavily on comprehensive test suites, meticulously crafted and maintained by human developers and quality assurance (QA) engineers. These traditional tests—comprising unit, integration, and end-to-end tests—are designed to validate functionality, prevent regressions, and ensure code quality before deployment. While effective in stable environments with predictable development cycles, this paradigm faces increasing strain in the modern era of rapid iteration and, more critically, the proliferation of AI-assisted development.

The digital transformation across industries has propelled software development into an era of unprecedented speed and complexity. Companies like Meta operate at an immense scale, pushing millions of lines of code daily across vast, interconnected systems. In such environments, the overhead associated with maintaining extensive, long-lived test suites becomes a significant bottleneck. Test suites can become brittle, assertions can quickly become outdated, and test coverage often struggles to keep pace with the sheer volume and velocity of code changes. This challenge is further exacerbated by the rise of "agentic workflows," where AI systems autonomously generate, refactor, or modify large portions of code.

These AI agents, often powered by sophisticated Large Language Models (LLMs), can produce code at a speed and scale that far outstrips human capacity for manual review and test maintenance. While AI-generated code promises faster development cycles and reduced human error in routine tasks, it also introduces new complexities for quality assurance. The "black box" nature of some AI outputs, coupled with their ability to introduce subtle yet significant behavioral changes, makes traditional static test suites increasingly ineffective. These suites are often built on assumptions about human-driven code evolution and may not adequately anticipate the types of issues introduced by AI agents. The result is a higher maintenance burden, reduced test effectiveness, and an increased risk of critical bugs slipping into production.

Ankit K., an ICT Systems Test Engineer, succinctly captured this emerging reality, observing, "AI generating code and tests faster than humans can maintain them makes JiT testing almost inevitable." His statement underscores the necessity of an adaptive testing strategy that can dynamically respond to the unique demands of AI-driven development.

Just-in-Time Testing: A Paradigm Shift

Meta’s JiT testing approach directly addresses these challenges by fundamentally rethinking when and how tests are generated and executed. Instead of relying on pre-existing, static test suites, JiT testing focuses on generating highly targeted tests at the precise moment they are needed: during the pull request (PR) or code review stage. This dynamic generation is based on the specific code changes—or "diffs"—proposed in the PR, ensuring that tests are always relevant to the current modification.

The core philosophy of JiT testing is a shift from "hardening" tests that merely confirm existing functionality to "catching" tests designed to proactively identify regressions and potential failures in new or modified code. Mark Harman, a Research Scientist at Meta, articulated this profound change, noting, "This work represents a fundamental shift from ‘hardening’ tests that pass today to ‘catching’ tests that find tomorrow’s bugs." This proactive stance is crucial for maintaining code quality in fast-evolving, AI-assisted development environments.

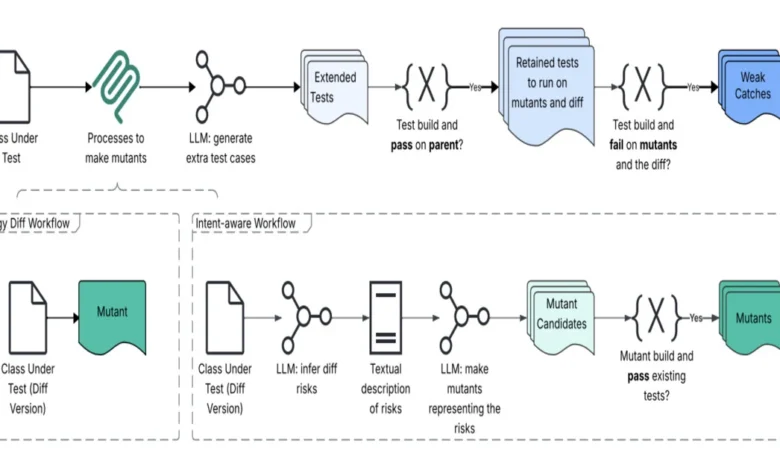

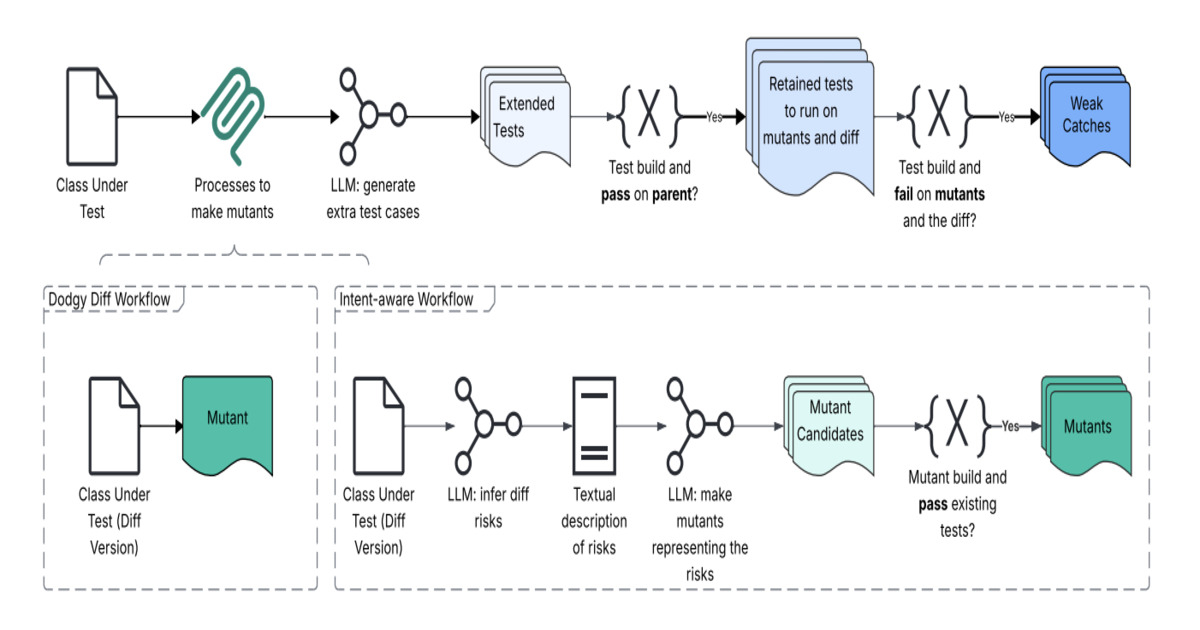

The Technical Architecture: Dodgy Diff and Intent-Aware Workflows

At the heart of Meta’s JiT testing system lies an innovative architecture known as "Dodgy Diff" and its associated intent-aware workflow. This system moves beyond merely analyzing textual code differences; it reinterprets a code change as a semantic signal, extracting the underlying behavioral intent and identifying potential risk areas.

The process unfolds through a sophisticated pipeline:

- Intent Reconstruction and Change-Risk Modeling: When a developer submits a pull request, the Dodgy Diff system first analyzes the proposed code changes. It doesn’t just look at what lines were added or removed but attempts to infer the developer’s intent behind those changes. Concurrently, it performs a change-risk modeling analysis, identifying areas of the codebase that are most susceptible to breakage as a result of the proposed modification. This stage is crucial for understanding what could go wrong rather than just what has changed.

- Mutation Engine and Synthetic Defects: These signals—the inferred intent and identified risk areas—then feed into a specialized mutation engine. This engine generates "dodgy" variants of the code by injecting synthetic defects. Mutation testing, a technique that has been confined largely to academic research for decades, experiences a powerful revival here. By deliberately introducing small, realistic faults into the code, the system creates scenarios that simulate real-world bugs. These "mutants" serve as a ground truth for evaluating the effectiveness of the generated tests. If a test can detect a known synthetic defect, it’s more likely to catch a real, unknown bug.

- LLM-based Test Synthesis: With the identified intent and potential failure modes in hand, a Large Language Model (LLM)-based test synthesis layer takes over. Leveraging its understanding of programming languages, common patterns, and potentially even project-specific code conventions, the LLM generates targeted test cases. These tests are specifically designed to fail if the proposed code changes introduce regressions or unexpected behavior, particularly in the areas identified as high-risk by the change-risk modeling.

- Filtering and Prioritization: The initial set of generated tests may include noisy or low-value tests. A subsequent filtering stage prunes these less effective tests, ensuring that only high-signal, meaningful tests are presented to the developer during the pull request review. This prevents alert fatigue and focuses human attention on genuinely problematic areas.

- Integration into Code Review: Finally, the results—the targeted JiT tests and any failures they uncover—are surfaced directly within the pull request interface. This immediate feedback loop allows developers to address potential issues before the code is merged, significantly shifting fault detection "left" in the development lifecycle.
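
The mutation step in the pipeline above can be illustrated with a minimal sketch. It assumes a single toy mutation operator (rewriting `<` as `<=`) built on Python's standard `ast` module. The function `is_minor`, the `FlipLessThan` transformer, and the candidate test are all hypothetical illustrations; the source does not describe Meta's mutation engine at this level of detail.

```python
import ast

# Hypothetical code under review (not taken from Meta's codebase).
SOURCE = """
def is_minor(age):
    return age < 18
"""

class FlipLessThan(ast.NodeTransformer):
    """Toy mutation operator: rewrite every `<` comparison as `<=`."""

    def visit_Compare(self, node):
        self.generic_visit(node)
        node.ops = [ast.LtE() if isinstance(op, ast.Lt) else op for op in node.ops]
        return node

def load(source_or_tree, name):
    """Compile source text or an AST module and return the named function."""
    namespace = {}
    exec(compile(source_or_tree, "<sketch>", "exec"), namespace)
    return namespace[name]

def make_mutant(source):
    """Return an AST with a synthetic boundary defect injected."""
    tree = FlipLessThan().visit(ast.parse(source))
    return ast.fix_missing_locations(tree)

def boundary_test(fn):
    """Candidate test: an 18-year-old must not be classified as a minor."""
    return fn(18) is False

original = load(SOURCE, "is_minor")
mutant = load(make_mutant(SOURCE), "is_minor")

passes_on_original = boundary_test(original)  # the test holds on the real code
kills_mutant = not boundary_test(mutant)      # the test fails on the "dodgy" variant
print(passes_on_original, kills_mutant)
```

A test that only checked `fn(10)` would pass on both versions and so would not detect this synthetic defect, which is the kind of low-signal test a filtering stage could discard.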

The architecture emphasizes a deep understanding of the code’s semantics and the impact of changes, rather than a superficial textual analysis. This holistic approach allows the system to construct "regression-catching tests" that are designed to fail on the proposed changes if they introduce a bug but pass on the parent revision (the code before the changes), thus isolating the issue to the specific modification.
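
The pass-on-parent, fail-on-change criterion can be sketched in a few lines. This is an illustration under simplifying assumptions: revisions are modeled as plain Python functions, and the names `parent_discount`, `changed_discount`, and `is_catching` are hypothetical rather than part of Meta's system.

```python
from typing import Callable, List

Revision = Callable[[int], int]
Test = Callable[[Revision], bool]  # returns True when the test passes

def parent_discount(total: int) -> int:
    """Parent revision: 10% off orders of 100 or more."""
    return total * 90 // 100 if total >= 100 else total

def changed_discount(total: int) -> int:
    """Proposed change: the boundary was accidentally moved to > 100."""
    return total * 90 // 100 if total > 100 else total

def is_catching(test: Test, parent: Revision, change: Revision) -> bool:
    """Keep a test only if it passes on the parent and fails on the change."""
    return test(parent) and not test(change)

candidate_tests: List[Test] = [
    lambda f: f(100) == 90,   # probes the changed boundary
    lambda f: f(200) == 180,  # passes on both revisions: no signal
    lambda f: f(50) == 0,     # fails on both revisions: noise
]

catching = [is_catching(t, parent_discount, changed_discount) for t in candidate_tests]
print(catching)  # [True, False, False]
```

Only the first candidate isolates the behavioral difference to the proposed change. A failure of this kind may also reflect an intended behavior change, so a human reviewer still has to judge whether it is a bug.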

Empirical Evidence and Impact

Meta’s evaluation of the JiT testing system covered more than 22,000 generated tests. The research reported roughly a fourfold improvement in bug detection compared with baseline-generated tests, and up to a 20x improvement in detecting meaningful failures rather than coincidental outcomes, which points to the precision of the approach and its ability to surface genuinely impactful issues.

In one specific evaluation subset, the JiT system identified 41 distinct issues. Out of these, 8 were definitively confirmed as real defects, including several that carried the potential for significant production impact had they gone undetected. This empirical validation demonstrates the system’s practical efficacy in a real-world, large-scale development environment.
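
As a quick worked calculation, the two figures reported for this subset imply the following confirmed share. The snippet uses only the numbers stated above; the variable names are illustrative.

```python
reported_issues = 41    # issues identified in the evaluation subset (from the text)
confirmed_defects = 8   # issues confirmed as real defects (from the text)

confirmed_share = confirmed_defects / reported_issues
print(f"{confirmed_share:.1%}")  # 19.5%
```

The source does not say how the remaining 33 issues were classified, so this figure should not be read as a false-positive rate.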

These findings matter in an industry where bugs are widely held to become more expensive to fix the later they are detected in the development lifecycle. By commonly cited estimates, a bug found in production can cost many times more to fix than one caught during the code review stage. By catching critical defects earlier and more consistently, Meta’s JiT testing could lead to substantial savings in engineering effort, improved system stability, and enhanced user experience.

The Revival of Mutation Testing

The success of Meta’s JiT testing also marks a significant milestone for mutation testing. As Mark Harman further emphasized in a LinkedIn post, "Mutation testing, after decades of purely intellectual impact, confined to academic circles, is finally breaking out into industry and transforming practical, scalable Software Testing 2.0." This technique, which involves systematically introducing small changes (mutations) into a program’s source code to create faulty versions, has historically been computationally expensive and difficult to scale. However, with advancements in computational power, static analysis, and particularly the integration with LLMs, mutation testing is now proving its immense value in real-world industrial applications. It provides a robust mechanism to assess the quality and completeness of test suites, ensuring they are capable of detecting a wide range of defects.
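
The classic use of mutation testing to assess a test suite is usually summarized as a mutation score: the fraction of mutants that the suite detects ("kills"). The sketch below uses hand-written mutants of a hypothetical function, `clamp_add`. It illustrates the general technique, not Meta's tooling.

```python
from typing import Callable, Dict, List

Fn = Callable[[int, int], int]
Test = Callable[[Fn], bool]

def clamp_add(a: int, b: int) -> int:
    """Hypothetical function under test: add two numbers, capped at 100."""
    return min(a + b, 100)

# Hand-written mutants, each carrying one small synthetic fault.
MUTANTS: Dict[str, Fn] = {
    "minus_for_plus": lambda a, b: min(a - b, 100),
    "max_for_min": lambda a, b: max(a + b, 100),
    "cap_off_by_one": lambda a, b: min(a + b, 101),
}

def suite_passes(fn: Fn, tests: List[Test]) -> bool:
    """A suite passes only if every test in it passes."""
    return all(test(fn) for test in tests)

def mutation_score(tests: List[Test]) -> float:
    """Fraction of mutants on which at least one test fails."""
    assert suite_passes(clamp_add, tests), "suite must pass on the original"
    killed = sum(not suite_passes(mutant, tests) for mutant in MUTANTS.values())
    return killed / len(MUTANTS)

weak_suite: List[Test] = [lambda f: f(1, 2) == 3]
strong_suite: List[Test] = weak_suite + [lambda f: f(60, 60) == 100]

print(mutation_score(weak_suite), mutation_score(strong_suite))
```

The weak suite misses the off-by-one cap because it never exercises the limit. Adding a single test at the boundary kills the remaining mutant and raises the score to 1.0.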

Broader Implications and the Future of Software Quality Assurance

The introduction of JiT testing by Meta represents more than just a technological upgrade; it signals a profound philosophical shift in how software quality assurance will be approached in the age of AI.

- Shift from Maintenance to Proactive Detection: The emphasis moves away from the laborious and often reactive task of maintaining vast, static test suites to a dynamic, proactive model of change-specific fault detection. This reduces the burden of brittle test suites that constantly need updating as code evolves.

- Augmenting, Not Replacing, Human Developers: While the system automates test generation, human review remains critical. However, the role of the human developer evolves. Instead of manually writing and debugging extensive test cases, developers are presented with highly curated, meaningful issues by the JiT system. Their expertise is then focused on analyzing these targeted insights and implementing the necessary fixes, making their time more impactful.

- Democratization of Advanced Testing Techniques: By embedding sophisticated techniques like program analysis, LLM-driven test synthesis, and mutation testing directly into the development workflow, Meta is making these advanced methods accessible and actionable for every developer, without requiring specialized expertise in each domain.

- The Evolving Role of QA: This innovation will likely reshape the role of quality assurance professionals. Rather than focusing solely on manual testing or basic test automation, QA engineers may increasingly focus on designing, optimizing, and monitoring the AI-driven testing systems themselves. Their expertise will be invaluable in refining the intent-aware models, improving mutation strategies, and ensuring the overall efficacy and reliability of the automated testing pipeline.

- Industry-Wide Adoption: As the benefits become clearer and the underlying technologies mature, it is highly probable that other large technology companies and eventually the broader software industry will explore and adopt similar JiT testing methodologies. The challenges Meta faced with scaling testing in AI-assisted development are universal to any organization embracing agentic workflows.

- Ethical Considerations and Bias: As AI plays a larger role in code generation and testing, considerations around bias in AI models, the potential for generating incomplete or flawed tests, and the need for robust oversight mechanisms will become increasingly important. The integrity of the testing system itself will need to be rigorously validated.

In conclusion, Meta’s Just-in-Time testing, powered by the Dodgy Diff architecture and advanced AI techniques, marks a pivotal moment in software engineering. By embracing dynamic, intent-aware test generation, Meta is not only improving its own software quality but also laying down a blueprint for how the industry can navigate the complexities and leverage the potential of AI-assisted development, ensuring robust, reliable software for the future. This move heralds a new era of "Software Testing 2.0," where intelligent automation empowers developers to deliver higher quality code with unprecedented efficiency.