AWS DevOps Agent: Revolutionizing Site Reliability Engineering with AI-Powered Operational Teammates

In an era defined by distributed architectures and microservices, Site Reliability Engineers (SREs) face steadily growing operational complexity. Maintaining application uptime and performance has become harder, and incidents often demand hours of painstaking manual correlation across disparate logs, metrics, traces, and configuration histories. This persistent burden, which frequently pulls SREs into reactive "firefighting" during off-hours, underscores an industry need for intelligent, autonomous assistance. AWS has stepped into the breach with the AWS DevOps Agent, an AI-powered operational teammate designed to resolve and proactively prevent incidents, optimize application reliability, and handle on-demand SRE tasks across diverse cloud and on-premises environments.

The Escalating Challenge for Site Reliability Engineers

Modern application landscapes are inherently intricate. A typical enterprise application might span hundreds or thousands of microservices, each with its own deployment pipeline, configuration, and telemetry streams. When a critical issue arises – perhaps a latency spike or an elevated error rate – the root cause is rarely singular or immediately obvious. SREs are traditionally tasked with the demanding process of manually sifting through gigabytes of logs, cross-referencing metrics from various monitoring tools, tracing dependencies across services, and piecing together a coherent hypothesis. This diagnostic journey, often initiated by a 2 AM pager alert, can routinely consume several hours, directly impacting Mean Time To Resolution (MTTR) and, consequently, business continuity and customer satisfaction. Industry data consistently highlights the significant financial implications of downtime, with estimates ranging from thousands to millions of dollars per hour, depending on the scale and nature of the business. The cognitive load on SRE teams is immense, leading to burnout and making it increasingly difficult to scale operations alongside the rapid evolution of cloud-native architectures.

Many organizations have attempted to leverage the power of Large Language Models (LLMs) to alleviate this burden, often by providing their favorite AI coding tools with access to operational data. While these "do-it-yourself" (DIY) approaches offer some value for straightforward scenarios, their limitations quickly become apparent in real-world, at-scale environments. Such rudimentary LLM wrappers often lack the deep contextual awareness needed to navigate complex application topologies, enforce granular governance and access controls, or retain learned intelligence from past incidents. The sheer volume and distributed nature of operational data across multiple accounts, monitoring systems, and code repositories present a formidable barrier, widening the gap between a simple AI assistant and a truly production-grade operational teammate.

AWS DevOps Agent: A Fully Managed, Autonomous Operational Teammate

Recognizing these profound challenges, AWS developed the DevOps Agent as a fully managed, AI-driven solution. Far more than a thin LLM wrapper, it represents a comprehensive agentic SRE paradigm, fundamentally shifting teams from a reactive firefighting posture to one of proactive, AI-driven operational excellence. The agent’s core strength lies in its ability to understand, analyze, and act autonomously, mirroring the capabilities of an experienced SRE but operating at machine speed and scale.

The DevOps Agent is engineered to provide continuous support across AWS, multi-cloud, and on-premises environments. Its capabilities span autonomous incident response, proactive incident prevention, application reliability and performance optimization, and the handling of on-demand SRE tasks. This functionality rests on four foundational pillars: topology intelligence, a three-tier skills hierarchy, cross-account investigation capabilities, and continuous learning mechanisms. Together, these elements are what allow the DevOps Agent to cut MTTR from hours to minutes.

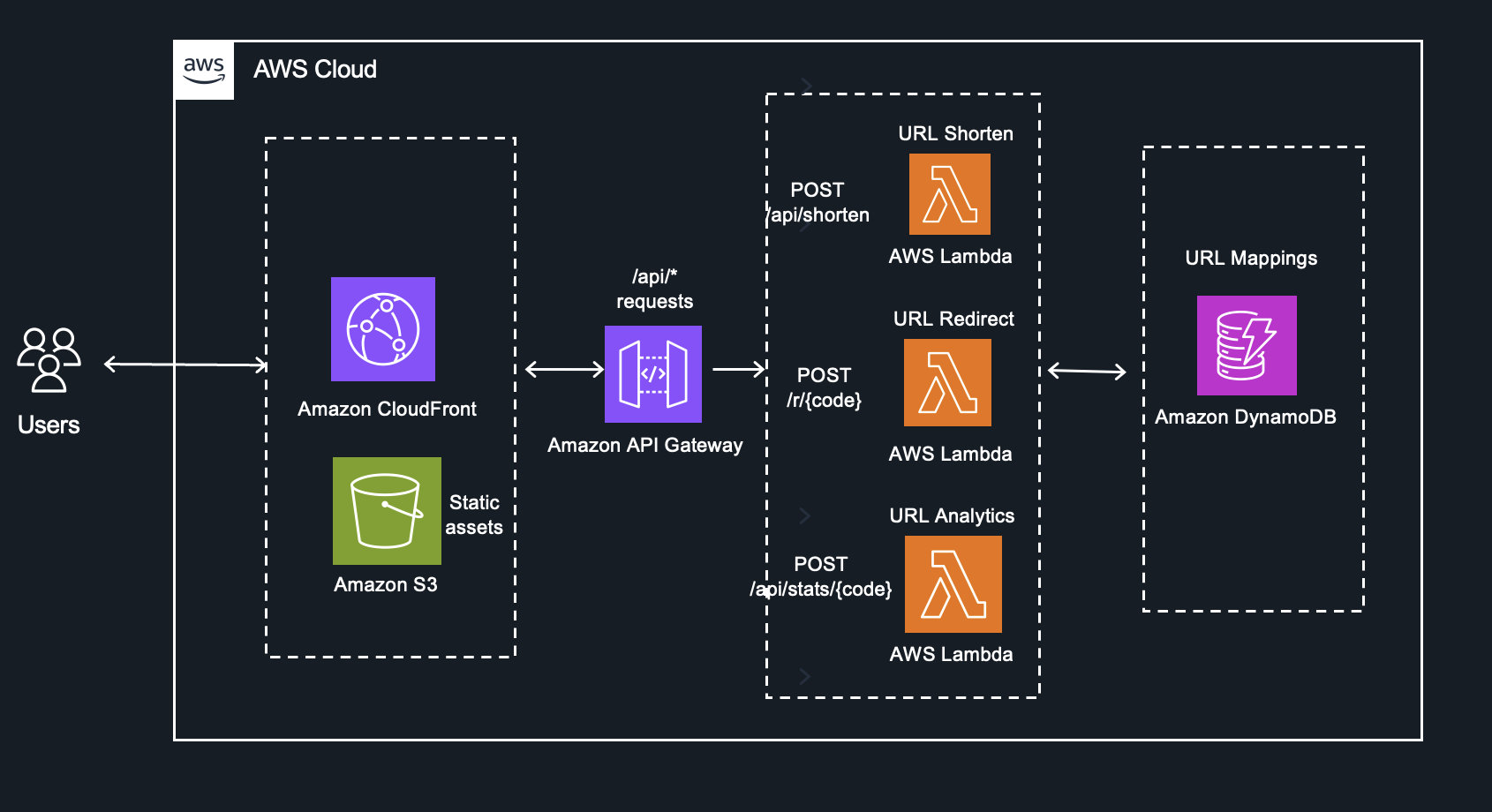

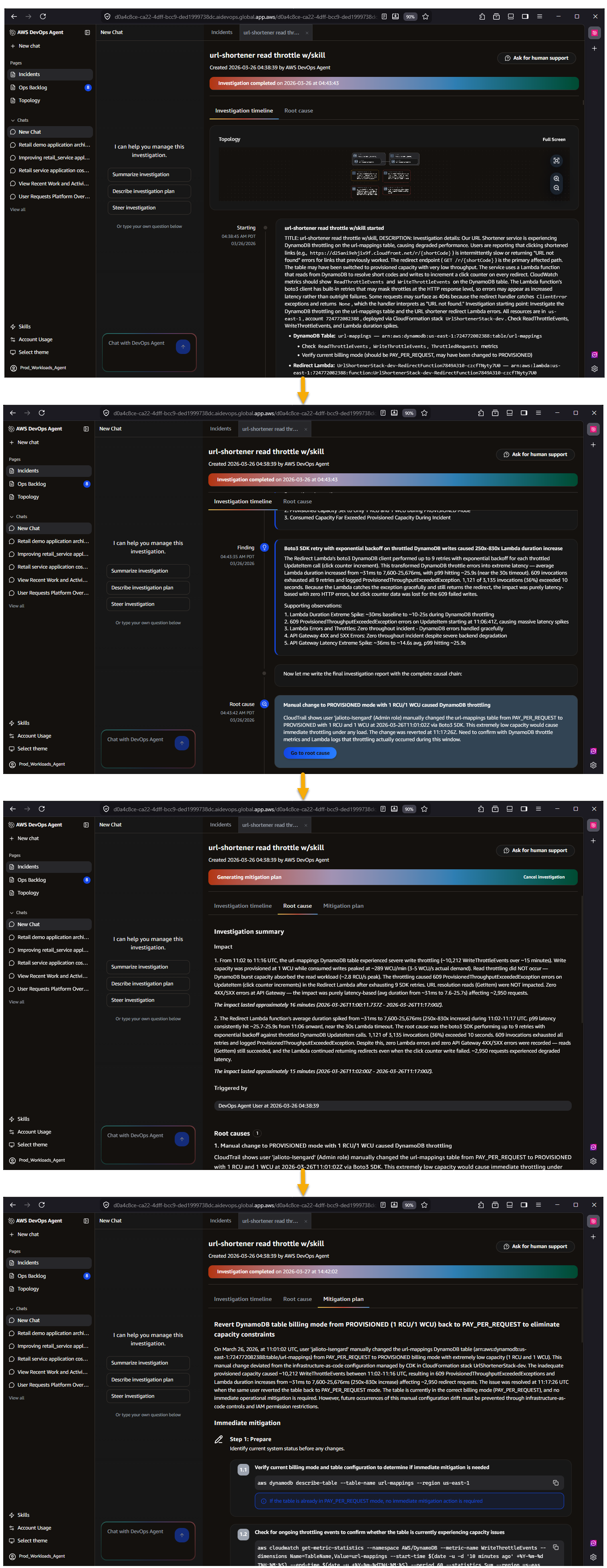

Consider a common scenario: a serverless URL shortener application hosted on AWS, built on AWS Lambda, DynamoDB, API Gateway, and CloudFront. While such an application is straightforward to build, troubleshooting a latency spike in it is operationally complex. A slowdown in the "Redirect" Lambda function could be caused by DynamoDB throttling, a Lambda cold start regression, an API Gateway misconfiguration, or a CloudFront cache invalidation. Each potential root cause leaves signals scattered across different log groups, metric namespaces, and trace spans. This is where the DevOps Agent is meant to prove its value as an autonomous operational teammate.

An Investigation in Action: Speed and Precision

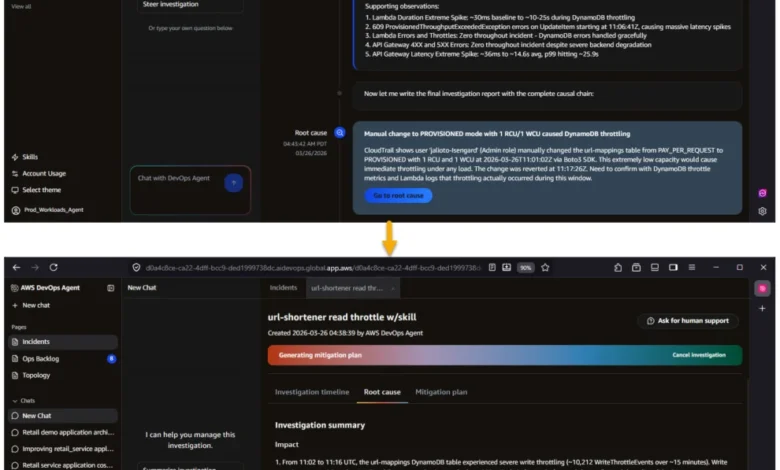

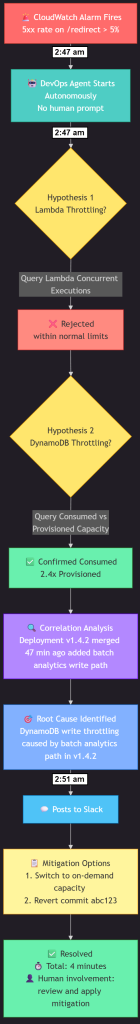

The power of the AWS DevOps Agent is perhaps best illustrated through its incident investigation workflow. When a CloudWatch alarm triggers due to elevated 5xx errors in a production environment, the agent springs into action without human intervention. Its process is systematic and intelligent:

- Autonomous Detection & Diagnosis: The agent receives the alarm, immediately recognizes an incident, and begins formulating hypotheses.

- Telemetry Correlation: It automatically correlates telemetry from various sources – logs, metrics, traces – across the entire application topology.

- Hypothesis Testing: The agent systematically tests its hypotheses, querying relevant data sources to validate or refute them. In the URL shortener example, it might investigate DynamoDB throughput, Lambda concurrency, and API Gateway logs.

- Root Cause Identification: Within minutes, the agent pinpoints the root cause. In one demonstration, it identified DynamoDB write throttling caused by a recent code deployment that introduced a batch write operation. This discovery, which could take a human SRE 30 minutes or more to correlate, was made in under 4 minutes.

- Actionable Recommendations: The DevOps Agent doesn’t just identify the problem; it provides a comprehensive root cause analysis with specific, actionable mitigation recommendations. This includes identifying the problematic commit and suggesting solutions like adjusting DynamoDB capacity or initiating a rollback.

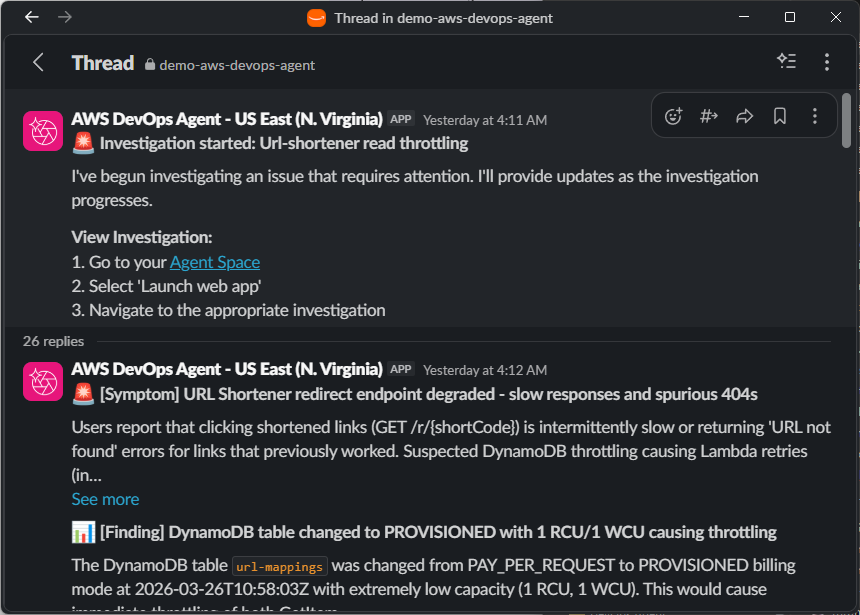

- Automated Communication: Crucially, the agent then autonomously posts a complete analysis to designated communication channels, such as Slack, ensuring that the relevant teams are informed with context and solutions before the on-call engineer has even finished reading their initial page.

This entire process, from initial alarm to actionable solution, is accomplished in under 5 minutes, a testament to the agent’s efficiency and depth of intelligence.
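The hypothesis-testing step in the workflow above can be pictured with a small sketch. It is not the agent's implementation: the `Hypothesis` class, the `investigate` function, and every metric name and value below are hypothetical, chosen only to mirror the URL shortener example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Hypothesis:
    name: str
    # Returns True when the telemetry snapshot supports this hypothesis.
    is_supported: Callable[[Dict[str, float]], bool]
    recommendation: str


def investigate(telemetry: Dict[str, float],
                hypotheses: List[Hypothesis]) -> List[Hypothesis]:
    """Test every hypothesis against the telemetry and keep the supported ones."""
    return [h for h in hypotheses if h.is_supported(telemetry)]


# Hypothetical snapshot: metric names and values are invented for illustration.
telemetry = {
    "dynamodb_write_throttle_events": 312,
    "lambda_concurrent_executions": 40,
    "lambda_concurrency_limit": 1000,
    "apigw_integration_timeouts": 0,
}

hypotheses = [
    Hypothesis(
        "DynamoDB write throttling",
        lambda t: t["dynamodb_write_throttle_events"] > 0,
        "Adjust DynamoDB capacity or roll back the batch-write commit",
    ),
    Hypothesis(
        "Lambda concurrency exhaustion",
        lambda t: t["lambda_concurrent_executions"] >= t["lambda_concurrency_limit"],
        "Raise the concurrency limit",
    ),
    Hypothesis(
        "API Gateway timeout",
        lambda t: t["apigw_integration_timeouts"] > 0,
        "Review the integration timeout setting",
    ),
]

confirmed = investigate(telemetry, hypotheses)
for h in confirmed:
    print(f"Root cause candidate: {h.name} -> {h.recommendation}")
```

Keeping each hypothesis as a named check paired with a recommendation means the same record can feed both the root cause analysis and the message posted to the team channel.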

The Six Pillars of Differentiation: Why DevOps Agent Stands Apart

AWS DevOps Agent is not merely an interface layered over a generic large language model; it is built on Amazon Bedrock AgentCore with dedicated infrastructure for memory, policies, evaluations, and observability. Its distinctiveness is encapsulated in six key capabilities – the "6 Cs" – that collectively make it a fully functional next-generation operational teammate: Context, Control, Convenience, Collaboration, Continuous Learning, and Cost-Effective.

1. Context: Deep Operational Understanding

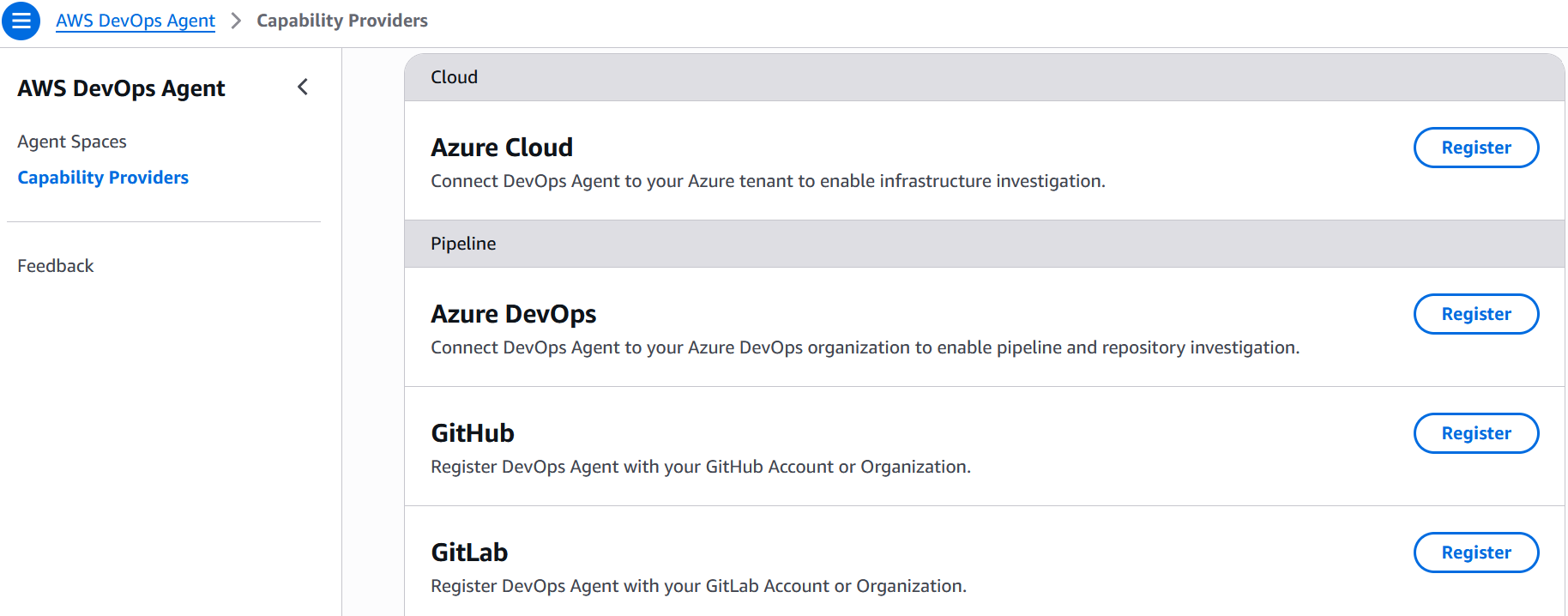

An LLM without operational context is akin to a doctor without a patient’s medical history – capable of generic advice but unable to diagnose complex issues. DevOps Agent addresses this through Agent Spaces, isolated logical containers that provide comprehensive, cross-account access to cloud resources, telemetry sources, code repositories, CI/CD pipelines, and ticketing systems. Within each Agent Space, the DevOps Agent dynamically builds an application resource topology by auto-discovering resources like containers, network components, log groups, alarms, and deployments, mapping their interconnections across AWS, Azure, and on-premises environments.

A background learning agent continuously analyzes infrastructure, telemetry, and code to generate an inferred topology at the application and service layer. This deep, AWS-native integration extends to services like Amazon Elastic Kubernetes Service (EKS), offering introspection into Kubernetes clusters, pod logs, and cluster events, even in private environments – capabilities that typically require privileged access that external tools do not have. The agent understands not only the resource topology but also the telemetry, the deployment timeline, and both infrastructure and application code. When it detects an anomaly, it automatically checks GitHub, GitLab, and Azure DevOps for recent merges, correlating deployment timestamps with metric anomalies to determine whether a code change is the probable cause. In the URL shortener example, the agent quickly identifies that a commit introducing batch DynamoDB writes was deployed just 47 minutes before throttling began – a correlation that would typically take a human SRE significant manual effort to uncover.
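The deployment-to-anomaly correlation can be sketched as a simple time-window filter. This is an illustration under stated assumptions, not the agent's actual logic: the `Deployment` type, the `suspect_deployments` function, the two-hour lookback window, the commit hashes, and the timestamps are all hypothetical. Only the 47-minute gap comes from the example above.

```python
from datetime import datetime, timedelta
from typing import List, NamedTuple


class Deployment(NamedTuple):
    commit: str
    deployed_at: datetime


def suspect_deployments(deployments: List[Deployment],
                        anomaly_start: datetime,
                        lookback: timedelta = timedelta(hours=2)) -> List[Deployment]:
    """Return deployments inside the lookback window, most recent first."""
    window_start = anomaly_start - lookback
    candidates = [d for d in deployments
                  if window_start <= d.deployed_at <= anomaly_start]
    return sorted(candidates, key=lambda d: d.deployed_at, reverse=True)


# Hypothetical data: commit hashes and timestamps are invented.
anomaly_start = datetime(2025, 1, 15, 14, 47)
deployments = [
    Deployment("a1b2c3d", datetime(2025, 1, 15, 9, 30)),   # outside the window
    Deployment("e4f5a6b", datetime(2025, 1, 15, 14, 0)),   # 47 minutes before
]

suspects = suspect_deployments(deployments, anomaly_start)
for d in suspects:
    minutes = int((anomaly_start - d.deployed_at).total_seconds() // 60)
    print(f"{d.commit} deployed {minutes} minutes before the anomaly")
```

A fixed lookback window is the simplest possible heuristic. A real system would presumably weigh other signals too, such as which services the change touched.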

In the URL shortener scenario, the DevOps Agent maps the intricate dependency chain from CloudFront through API Gateway to each Lambda function and down to the DynamoDB table. When a latency spike impacts the URL Redirect function, the agent leverages this relationship graph to swiftly determine the root cause, whether it’s DynamoDB read throttling, a Lambda concurrency limit, or an API Gateway timeout configuration. This involves correlating CloudWatch metrics, Lambda traces, and DynamoDB consumed capacity in a single, unified investigation.
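One way to picture the relationship graph is as an adjacency list that is walked in both directions from the affected function. The sketch below is hypothetical throughout: the resource names (including the two Lambda functions besides Redirect) and the `reachable` and `reverse` helpers are assumptions for illustration, not the agent's data model.

```python
from collections import deque
from typing import Dict, List

# Hypothetical topology: each resource maps to the resources it calls.
TOPOLOGY: Dict[str, List[str]] = {
    "cloudfront": ["api-gateway"],
    "api-gateway": ["lambda:shorten", "lambda:redirect", "lambda:stats"],
    "lambda:shorten": ["dynamodb:urls"],
    "lambda:redirect": ["dynamodb:urls"],
    "lambda:stats": ["dynamodb:urls"],
    "dynamodb:urls": [],
}


def reachable(graph: Dict[str, List[str]], start: str) -> List[str]:
    """Breadth-first list of resources reachable from start, excluding start."""
    seen = {start}
    order: List[str] = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def reverse(graph: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Flip every edge so callers can be found from a dependency."""
    rev: Dict[str, List[str]] = {node: [] for node in graph}
    for node, deps in graph.items():
        for dep in deps:
            rev.setdefault(dep, []).append(node)
    return rev


# Resources worth inspecting when the Redirect function slows down.
downstream = reachable(TOPOLOGY, "lambda:redirect")
upstream = reachable(reverse(TOPOLOGY), "lambda:redirect")
print("dependencies:", downstream)
print("callers:", upstream)
```

Walking both directions narrows the investigation to the resources on the request path, which is where the throttling, concurrency, and timeout hypotheses would then be tested.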

2. Control: Governance and Security at Scale

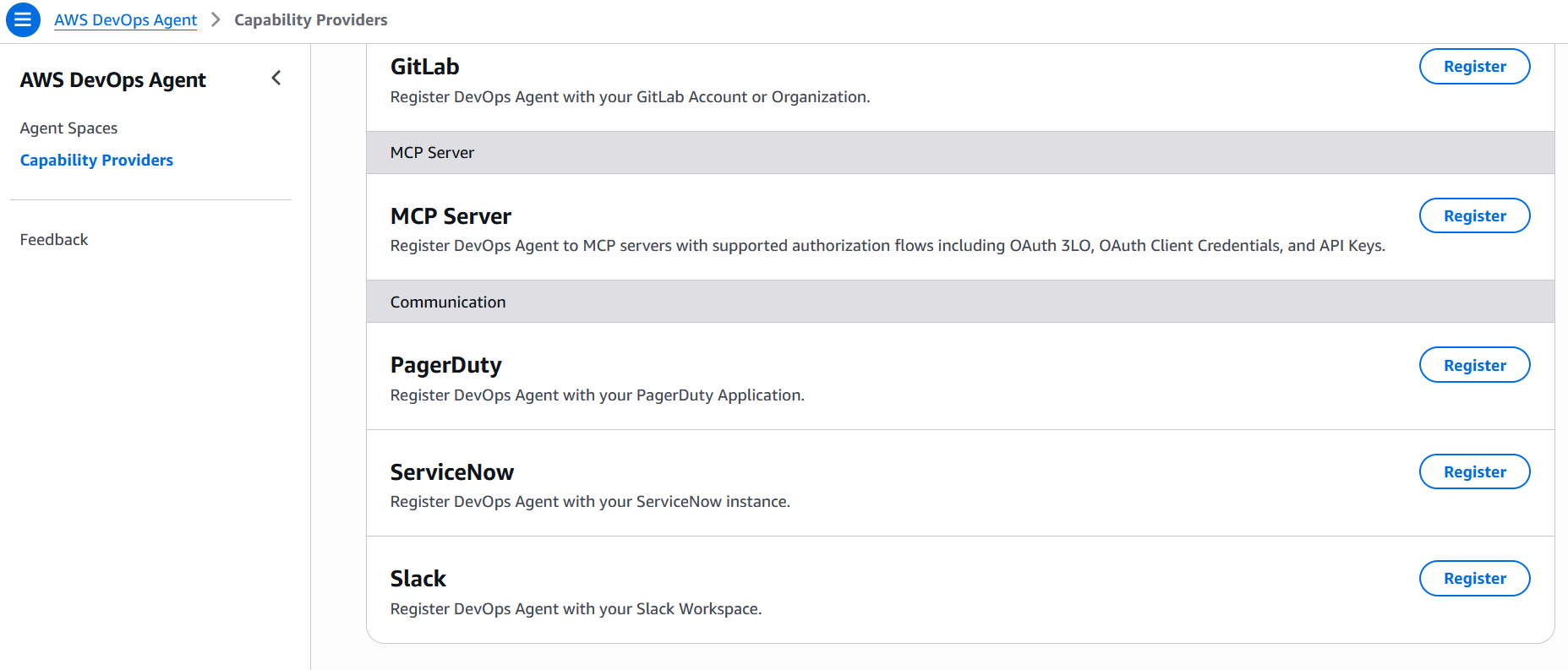

Operational context, without robust governance, introduces significant risk. Agent Spaces provide centralized control over what the agent can access and how it operates. Administrators meticulously define which AWS and Azure accounts, telemetry and code integrations, and MCP servers are available within each Agent Space using granular IAM permissions. This structured approach eliminates the inconsistency inherent in individual developers configuring their own toolchains, ensuring a uniform and secure operational environment across the team and simplifying onboarding for new members.

Furthermore, every reasoning step and action taken by the agent is logged in immutable audit journals. The agent cannot modify these records once they are written, which provides complete transparency into its decision-making process. AWS DevOps Agent is secured by design: it integrates with AWS CloudTrail, uses IAM Identity Center authentication with granular permissions, and enforces Agent Space-level data governance to isolate investigation data and respect organizational security configurations from day one.
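The article does not describe how the audit journals are implemented. As a generic illustration of the idea, the sketch below shows one common technique for tamper-evident logging, a hash chain, in which each entry commits to the hash of the previous one. The `AuditJournal` class and its fields are hypothetical and should not be read as the service's design.

```python
import hashlib
import json
from typing import Dict, List


def _digest(body: Dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditJournal:
    """Append-only log where every entry includes the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: List[Dict] = []

    def append(self, step: str, detail: str) -> Dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {"seq": len(self._entries), "step": step,
                "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return dict(entry)  # return a copy so callers cannot edit the journal

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for i, entry in enumerate(self._entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if (entry["seq"] != i or entry["prev_hash"] != prev_hash
                    or entry["hash"] != _digest(body)):
                return False
            prev_hash = entry["hash"]
        return True


journal = AuditJournal()
journal.append("hypothesis", "DynamoDB write throttling suspected")
journal.append("query", "Checked table throttle metrics")
intact = journal.verify()

# Simulate tampering by reaching into the private list directly.
journal._entries[0]["detail"] = "edited after the fact"
tampered_ok = journal.verify()
print(intact, tampered_ok)
```

A hash chain makes edits detectable rather than impossible. True immutability would also require storage that the writer cannot overwrite.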

For the URL shortener team, the administrator configures a single Agent Space with precisely defined read access to the production account’s CloudWatch logs, DynamoDB table metrics, the GitHub repository, and the Slack channel for incident coordination. Every SRE on the team benefits from this consistent, controlled configuration, eliminating the need for individual setup or the risk of misconfigurations.
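The effect of such a configuration can be sketched as an allow-list checked before every agent action. In the service itself this is expressed through IAM permissions. The `AgentSpaceScope` class, the action names, and the resource identifiers below are hypothetical stand-ins used only to show the deny-by-default behaviour.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class AgentSpaceScope:
    """Hypothetical allow-list of (action, resource) pairs for one Agent Space."""
    name: str
    allowed: FrozenSet[Tuple[str, str]]

    def permits(self, action: str, resource: str) -> bool:
        # Anything not explicitly listed is denied.
        return (action, resource) in self.allowed


# Invented identifiers mirroring the four integrations named in the text.
url_shortener_space = AgentSpaceScope(
    name="url-shortener-prod",
    allowed=frozenset({
        ("read", "cloudwatch-logs:prod"),
        ("read", "dynamodb-metrics:urls-table"),
        ("read", "github:url-shortener"),
        ("read", "slack:incident-channel"),
    }),
)

print(url_shortener_space.permits("read", "cloudwatch-logs:prod"))
print(url_shortener_space.permits("write", "dynamodb-metrics:urls-table"))
print(url_shortener_space.permits("read", "billing:prod"))
```

Because the scope is defined once per Agent Space rather than per engineer, every team member's requests are evaluated against the same list.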

3. Convenience: Zero-Setup Access for All

One of the most compelling advantages of the DevOps Agent is the immediate, zero-setup access it provides to every developer and SRE once an Agent Space is configured. This includes full operational context – topology, telemetry, code repositories, and ticketing integrations – without any individual configuration effort. This stands in stark contrast to the DIY alternative, where each engineer must individually connect their coding agent to various Model Context Protocol (MCP) servers for CloudWatch, their observability tool, source repository, and ticketing system. In practice, this often leads to inconsistent tooling, partial configurations, and a significant onboarding burden for new hires.

With AWS DevOps Agent, the administrator configures the Agent Space once. Engineers then simply log in to the Operator Web App or interact via Slack – using the tools they already prefer. The agent provides context-aware responses, maintains conversation history, and supports natural language queries against the application topology, all without any per-user setup. This streamlines workflows and empowers teams to focus on problem-solving rather than tool configuration.

For the URL shortener team, a new SRE joining the on-call rotation avoids spending a day wiring up access to the three Lambda function log groups, the DynamoDB metrics dashboard, and the GitHub repository. They can log into the Agent Space and immediately ask, "Show me all Lambda functions connected to this DynamoDB table," confident that the topology, telemetry access, and code context are already seamlessly integrated and available.

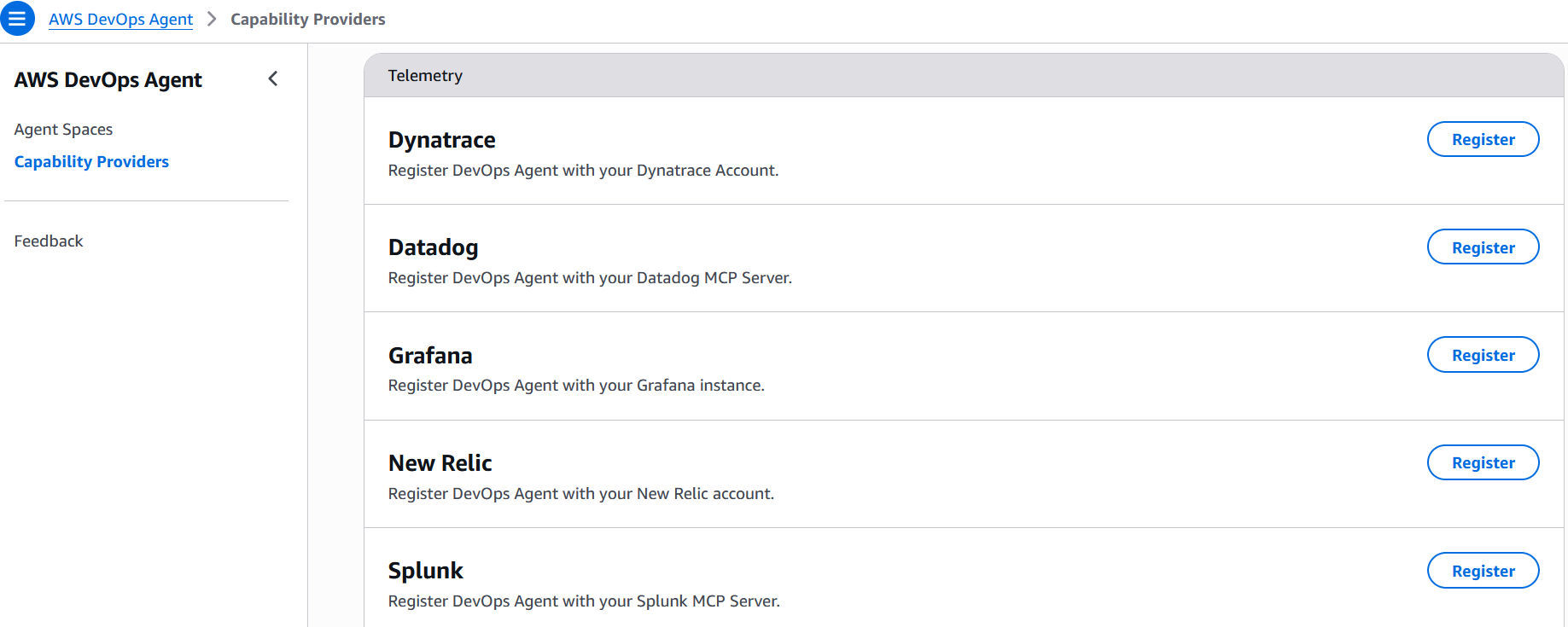

4. Collaboration: An Autonomous, Proactive Teammate

DevOps Agent transcends the role of a passive Q&A tool; it functions as an autonomous, collaborative teammate. When an incident triggers – whether via a CloudWatch alarm, PagerDuty alert, Dynatrace Problem, ServiceNow ticket, or any other configured event source – the agent initiates an investigation immediately, without human prompting. It generates hypotheses, queries telemetry and code data sources to test them, and actively coordinates across collaboration channels. This includes posting investigation timelines in Slack, updating ServiceNow tickets, and routing findings to relevant stakeholders.

Its extensibility through MCP and built-in integrations with CloudWatch, Datadog, Dynatrace, New Rel