Designing for Agentic AI: Building Trust Through Strategic Transparency, Not Noise

The rapid evolution of artificial intelligence, particularly the rise of "agentic AI" systems capable of autonomous decision-making, has ushered in a new era of technological capability. However, this advancement simultaneously presents a critical challenge: ensuring transparency and building user trust. As AI agents increasingly handle complex tasks, from processing financial transactions to managing healthcare schedules, the need to understand their internal workings becomes paramount. This article explores structured methodologies, such as the Decision Node Audit and Impact/Risk Matrix, designed to pinpoint crucial moments for transparency, moving beyond the inadequate extremes of opaque "black box" systems and overwhelming "data dumps."

The Rise of Agentic AI and the Transparency Imperative

Agentic AI refers to systems that can understand high-level goals, break them down into sub-tasks, execute those tasks, and adapt their plans based on real-time feedback, often without constant human intervention. These systems are transforming industries, promising unprecedented efficiencies in areas like customer service, financial analysis, supply chain management, and content creation. The global AI market, valued at over $200 billion in 2023, is projected to keep growing rapidly, a sign of how deeply these technologies are being integrated into daily operations and personal lives.

Yet, as AI systems become more autonomous, user anxiety about their actions has escalated. A 2023 PwC survey on AI adoption highlighted that 61% of consumers are concerned about the trustworthiness of AI, and 52% worry about its potential to make biased decisions. This apprehension stems from a fundamental lack of visibility into how these sophisticated algorithms arrive at their conclusions. Users are left to wonder: Did the AI follow all protocols? Did it consider all relevant data? Is its recommendation reliable? Without clear answers, trust erodes, leading to reduced adoption and potential misuse. This transparency imperative aligns with broader movements like Explainable AI (XAI) and regulatory frameworks such as the EU AI Act, which increasingly mandate clear communication about AI’s capabilities and limitations.

Navigating the Transparency Paradox: Beyond Black Boxes and Data Dumps

Historically, designers and developers have often defaulted to one of two extreme approaches when confronting the challenge of AI transparency. The "black box" approach, prioritizing simplicity and a clean interface, hides virtually all of the system’s internal processes. While seemingly user-friendly, this secrecy often leaves users feeling powerless and uncertain. Consider an insurance claim processing system: if an AI simply displays "Calculating Claim Status" for an extended period, users become frustrated, questioning whether their detailed submissions—like police reports containing mitigating circumstances—have been adequately reviewed. This opacity breeds distrust and can be detrimental in high-stakes scenarios.

Conversely, the "data dump" approach attempts to provide exhaustive transparency by streaming every log line, API call, and internal event to the user. This knee-jerk reaction to user anxiety, while well-intentioned, usually leads to "notification blindness." Users are quickly overwhelmed by a constant torrent of irrelevant technical information, rendering the system’s promised efficiency moot. They tune out the noise until an error occurs, at which point they lack the context to diagnose or rectify the problem. Neither extreme adequately addresses the nuanced need for users to receive just enough information at just the right time to foster confidence and understanding.

The Decision Node Audit: A Blueprint for Clarity

To bridge this gap, a more thoughtful, structured approach is required. The "Decision Node Audit" emerges as a crucial methodology for designers and engineers to systematically map an AI system’s internal logic to its user interface. This process is not a style choice but a functional requirement, shifting the focus from "what should the UI look like?" to "what is the agent actually deciding?"

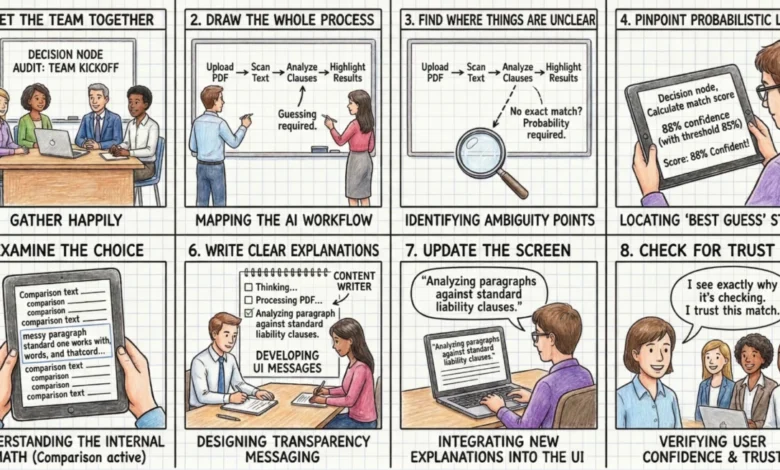

The audit begins by assembling a cross-functional team—including product owners, business analysts, designers, and critically, the engineers who built the AI. This collaborative setting is essential because engineers possess the intricate knowledge of the backend logic, enabling a granular mapping of the AI’s process. The team then meticulously documents every step the AI takes, from initial input to final output.

The core objective is to identify "ambiguity points" or "decision nodes"—moments where the AI stops following deterministic rules and instead makes a choice based on probability, estimation, or a confidence score. Unlike traditional software where "if A, then B" is a certainty, AI systems often operate on likelihoods (e.g., "A is probably the best choice, but it’s only 65% certain").
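The distinction can be sketched in Python as a record of each documented step, with a helper that keeps only the decision nodes. The `Step` schema, its field names, and the sample steps are hypothetical; the sketch encodes just the rule described above, that a step counts as a decision node when its outcome rests on a confidence score rather than a deterministic rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One step of the agent's workflow as documented in the audit (hypothetical schema)."""
    name: str
    # None: the step follows a deterministic rule ("if A, then B").
    # A float in [0, 1]: the step chooses an outcome based on a confidence score.
    confidence: Optional[float] = None

    @property
    def is_decision_node(self) -> bool:
        return self.confidence is not None


def decision_nodes(steps: list[Step]) -> list[Step]:
    """Keep only the steps where the agent makes a probabilistic choice."""
    return [s for s in steps if s.is_decision_node]


# Hypothetical workflow mapped during an audit session.
workflow = [
    Step("Parse uploaded form"),                         # deterministic
    Step("Pick best repair estimate", confidence=0.65),  # probabilistic
    Step("Send confirmation email"),                     # deterministic
]

for node in decision_nodes(workflow):
    print(f"{node.name}: {node.confidence:.0%} confident")
    # -> Pick best repair estimate: 65% confident
```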

A compelling illustration comes from "Meridian," a fictional insurance company utilizing agentic AI for initial accident claims. Initially, their system presented a black box, simply showing "Calculating Claim Status." Following a Decision Node Audit, the team identified three distinct, probability-based steps:

- Assessing Vehicle Damage: The AI analyzed uploaded photos against a database of damage types and severity, assigning a probability score to potential repair costs.

- Reviewing Police Report for Mitigating Circumstances: The AI processed the textual report, identifying keywords and contextual information to assess liability and external factors.

- Verifying Policy Coverage: The system cross-referenced the claim details with the user’s policy, including deductibles and coverage limits.

By exposing these previously hidden steps, Meridian transformed a moment of anxiety into one of clarity. The UI was updated to dynamically display "Assessing Vehicle Damage," then "Reviewing Police Report," and finally "Verifying Policy Coverage." Although the processing time remained the same, user confidence was restored. They understood the AI’s complex workflow and knew precisely where to focus their attention if the final assessment seemed inaccurate. This shift demonstrates how explicit communication about internal workings can profoundly impact user perception.
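A minimal sketch of Meridian's change, assuming a simple sequential pipeline: rather than one opaque "Calculating Claim Status" label, the agent reports each stage before running it. The three labels come from the case study; the stage functions are hypothetical placeholders that return canned values.

```python
from typing import Callable, Iterator

# Each stage pairs the user-facing label identified in the audit with the work it does.
# The lambdas are hypothetical placeholders for the real damage, report, and policy logic.
Stage = tuple[str, Callable[[dict], dict]]

STAGES: list[Stage] = [
    ("Assessing Vehicle Damage", lambda claim: {**claim, "damage_estimate": 2400}),
    ("Reviewing Police Report", lambda claim: {**claim, "mitigating_factors": True}),
    ("Verifying Policy Coverage", lambda claim: {**claim, "covered": True}),
]


def process_claim(claim: dict) -> Iterator[str]:
    """Yield a status label before each stage runs, then a final summary."""
    for label, run in STAGES:
        yield label  # the UI can display this label until the next status arrives
        claim = run(claim)
    yield f"Done: estimate ${claim['damage_estimate']:,}, covered={claim['covered']}"


for status in process_claim({"claim_id": "C-1"}):
    print(status)
```

Because the generator pauses at each `yield`, the UI receives the label before the corresponding stage starts, which is exactly the ordering the redesigned status display needs.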

Another case study involved a procurement agent designed to review vendor contracts and flag risks. Users were anxious about potential legal oversights. The audit revealed a key decision point where the AI checked liability terms against company rules. The terms rarely matched perfectly, so the AI had to decide whether a 90% match was "good enough." This probabilistic decision was exposed in the UI, changing the message from "Reviewing contracts" to "Liability clause varies from standard template. Analyzing risk level." This gave users critical context, assured them that specific legal aspects were being scrutinized, and empowered them to dig deeper once the review was complete.
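The "good enough" judgment can be approximated as a similarity score checked against a threshold. In this sketch, Python's standard `difflib.SequenceMatcher` stands in for whatever matching model the real agent used, the template clause is invented, and the 0.9 cut-off simply mirrors the 90% figure above; all three are assumptions.

```python
from difflib import SequenceMatcher

# Hypothetical standard clause from a company template.
STANDARD_LIABILITY = (
    "Vendor's total liability shall not exceed the fees paid in the prior twelve months."
)
MATCH_THRESHOLD = 0.9  # assumed cut-off, echoing the 90% example


def review_liability_clause(clause: str) -> tuple[float, str]:
    """Score a clause against the template and choose the status message to show."""
    score = SequenceMatcher(None, STANDARD_LIABILITY.lower(), clause.lower()).ratio()
    if score == 1.0:
        message = "Liability clause matches standard template."
    elif score >= MATCH_THRESHOLD:
        message = "Liability clause closely matches standard template."
    else:
        # The decision node the audit surfaced: too different to pass silently.
        message = "Liability clause varies from standard template. Analyzing risk level."
    return score, message


score, message = review_liability_clause("Vendor accepts unlimited liability for all damages.")
print(message)  # -> Liability clause varies from standard template. Analyzing risk level.
```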

Prioritizing Information: The Impact/Risk Matrix

Once decision nodes are identified, not all warrant immediate display. An AI might make dozens of micro-decisions for a single complex task. Over-communicating these still risks notification blindness. This is where the "Impact/Risk Matrix" becomes invaluable. It allows teams to categorize decisions based on their stakes (low vs. high) and reversibility (reversible vs. irreversible), thereby prioritizing which transparency moments to surface.

- Low Stakes / Low Impact: These decisions can often be auto-executed with minimal UI feedback, perhaps a passive toast notification or a simple log entry. For instance, an AI automatically renaming a file based on a pattern.

- High Stakes / High Impact: These are critical. Consider a financial trading bot executing a $50,000 trade versus a $5 trade. Users expect the system to pause and show its work for high-value transactions. The matrix helps introduce a "Reviewing Logic" state for transactions exceeding a specific threshold, allowing user oversight before execution.
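The threshold rule for the trading bot fits in a few lines. The $10,000 cut-off and the "Executing trade" label are hypothetical; only the "Reviewing Logic" state comes from the text. The key idea is that the UI state is chosen per action, from that action's stakes.

```python
from decimal import Decimal
from enum import Enum


class UIState(Enum):
    AUTO_EXECUTE = "Executing trade"     # low stakes: passive confirmation only (hypothetical label)
    REVIEWING_LOGIC = "Reviewing Logic"  # high stakes: pause and show the agent's reasoning


# Hypothetical cut-off; a real team would set it with product and risk owners.
REVIEW_THRESHOLD = Decimal("10000")


def state_for_trade(amount: Decimal) -> UIState:
    """Choose the UI state for a proposed trade based on its value."""
    return UIState.REVIEWING_LOGIC if amount > REVIEW_THRESHOLD else UIState.AUTO_EXECUTE


print(state_for_trade(Decimal("5")).value)      # -> Executing trade
print(state_for_trade(Decimal("50000")).value)  # -> Reviewing Logic
```

Using `Decimal` rather than `float` avoids rounding surprises when monetary amounts sit right at the threshold.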

The reversibility of an action is a decisive factor in selecting the appropriate UI pattern:

- High Stakes & Irreversible: These demand an "Intent Preview." If an AI proposes to permanently delete a database, the system must pause, explain its intent, and require explicit user confirmation before execution.

- High Stakes & Reversible: For these, an "Action Audit & Undo" pattern suffices. If an AI-powered sales agent moves a lead to a different pipeline, it can do so autonomously, provided it immediately notifies the user and offers an easily accessible "Undo" button.
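One way to back the "Action Audit & Undo" pattern is an action log in which every autonomous change records how to reverse itself. The `ActionLog` class and the lead-pipeline data below are hypothetical, and the sketch ignores persistence and conflicts with edits made after the action.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AuditedAction:
    description: str          # shown to the user right after the action
    undo: Callable[[], None]  # restores the previous state


@dataclass
class ActionLog:
    """Hypothetical audit log for autonomous, reversible actions."""
    entries: list[AuditedAction] = field(default_factory=list)

    def record(self, description: str, undo: Callable[[], None]) -> str:
        self.entries.append(AuditedAction(description, undo))
        return f"{description} [Undo]"  # notification text with an Undo affordance

    def undo_last(self) -> str:
        if not self.entries:
            return "Nothing to undo"
        action = self.entries.pop()
        action.undo()
        return f"Undone: {action.description}"


# Example: a sales agent moves a lead to another pipeline on its own.
pipelines = {"lead-42": "Prospecting"}
log = ActionLog()

previous = pipelines["lead-42"]
pipelines["lead-42"] = "Negotiation"
print(log.record("Moved lead-42 to Negotiation",
                 undo=lambda: pipelines.__setitem__("lead-42", previous)))
print(log.undo_last())
print(pipelines["lead-42"])  # -> Prospecting
```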

This rigorous categorization prevents "alert fatigue" by reserving high-friction interactions for truly critical, irreversible moments, while maintaining speed and efficiency for everything else.
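The matrix itself can be encoded as a small function so every decision node maps to a consistent UI pattern. The three patterns come from the text above; routing all low-stakes decisions to passive feedback, regardless of reversibility, is a simplifying assumption, since the matrix as described assigns no separate pattern to low-stakes irreversible actions.

```python
from enum import Enum


class Pattern(Enum):
    PASSIVE_NOTICE = "Auto-execute with a passive toast or log entry"
    ACTION_AUDIT_UNDO = "Act, notify immediately, offer Undo"
    INTENT_PREVIEW = "Pause, explain intent, require confirmation"


def ui_pattern(high_stakes: bool, reversible: bool) -> Pattern:
    """Map a decision node's cell in the Impact/Risk Matrix to a UI pattern."""
    if not high_stakes:
        return Pattern.PASSIVE_NOTICE  # simplification: covers every low-stakes cell
    if reversible:
        return Pattern.ACTION_AUDIT_UNDO
    return Pattern.INTENT_PREVIEW


# Examples from the article; the (high_stakes, reversible) ratings are illustrative.
decisions = {
    "Rename file to match pattern": (False, True),
    "Move lead to another pipeline": (True, True),
    "Permanently delete database": (True, False),
}
for name, (stakes, rev) in decisions.items():
    print(f"{name}: {ui_pattern(stakes, rev).value}")
```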

Validating User Understanding: The "Wait, Why?" Test

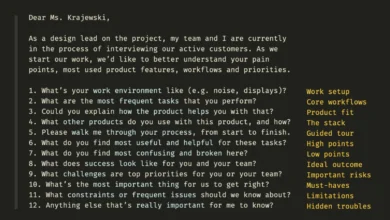

Identifying potential transparency nodes on a whiteboard is merely the first step; validation with actual human behavior is paramount. The "Wait, Why?" Test is a qualitative protocol designed to verify whether the mapped transparency moments align with the user’s mental model.

During this test, users are asked to observe the AI completing a task while speaking their thoughts aloud. Any utterance like "Wait, why did it do that?", "Is it stuck?", or "Did it hear me?" signals user confusion and a potential lapse in transparency. For example, in a study for a healthcare scheduling assistant, participants consistently questioned, "Is it checking my calendar or the doctor’s?" during a four-second static screen. This revealed a missing transparency moment. The system needed to articulate two distinct steps: "Checking your availability" followed by "Syncing with provider schedule."
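When analyzing sessions, it can help to tag each think-aloud utterance with the UI state that was on screen, then count confusion signals per state so the worst offenders stand out. This sketch assumes transcripts are already timestamped and aligned with the UI's status log; the phrase list is seeded from the examples above and would need extending for real studies.

```python
from collections import Counter

# Seeded from the article's example utterances; a real study would refine this list.
CONFUSION_PHRASES = ("wait, why", "is it stuck", "did it hear me", "is it checking")


def confusion_by_state(utterances: list[tuple[str, str]]) -> Counter:
    """Count confused utterances per UI state.

    Each utterance is (ui_state_on_screen, what_the_participant_said).
    """
    counts: Counter = Counter()
    for ui_state, text in utterances:
        if any(phrase in text.lower() for phrase in CONFUSION_PHRASES):
            counts[ui_state] += 1
    return counts


# Hypothetical excerpt from a scheduling-assistant session.
session = [
    ("Scheduling...", "Is it checking my calendar or the doctor's?"),
    ("Scheduling...", "Hmm, is it stuck?"),
    ("Appointment confirmed", "Great, that was quick."),
]
print(confusion_by_state(session).most_common(1))  # -> [('Scheduling...', 2)]
```

Keyword matching only shortlists moments for a researcher to review; it does not replace reading the full transcript.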

Crucially, transparency must connect the technical process to the user’s specific goal. A message like "Checking your availability" falls short because it lacks context. A better approach pairs the action with the outcome: "Checking your calendar to find open times" then "Syncing with the provider’s schedule to secure your appointment." This grounds the technical process in the user’s real-world objective, reducing anxiety and building confidence. For an AI managing cafe inventory, instead of "contacting vendor," a more effective message is "Evaluating alternative suppliers to maintain your Friday delivery schedule," directly addressing the manager’s concern about supply chain continuity.
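One way to enforce the action-plus-outcome rule is to make the user's goal a required field of every status message, so a bare technical action cannot be rendered. The `StatusMessage` type is a hypothetical sketch; the example wording is taken from the healthcare scheduling case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusMessage:
    """Hypothetical status type: an action is never shown without its user outcome."""
    action: str   # what the agent is doing, e.g. "Checking your calendar"
    outcome: str  # why it matters to the user, e.g. "find open times"

    def __post_init__(self) -> None:
        if not self.action.strip() or not self.outcome.strip():
            raise ValueError("A status message needs both an action and a user outcome")

    def render(self) -> str:
        return f"{self.action} to {self.outcome}"


steps = [
    StatusMessage("Checking your calendar", "find open times"),
    StatusMessage("Syncing with the provider's schedule", "secure your appointment"),
]
for step in steps:
    print(step.render())
```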

Operationalizing Transparency: A Cross-Functional Mandate

Implementing strategic transparency requires a deeply integrated, cross-functional approach. Designers cannot work in isolation; they need to collaborate closely with engineers and content strategists.

A "Logic Review" session with lead system designers and engineers is crucial. Designers present their mapped decision nodes, and engineers confirm whether the system can technically expose these specific states. Systems often return only a generic "working" status at first, so designers may need to push engineers to add specific technical hooks that signal precise state transitions (e.g., from "reading text" to "checking rules"). This negotiation ensures that the design is technically feasible.
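The requested hooks can be as simple as an agent that notifies listeners whenever it moves between named states, instead of exposing a single generic "working" flag. The `AgentState` values and the `on_transition` listener API below are hypothetical, chosen to match the "reading text" to "checking rules" example.

```python
from enum import Enum
from typing import Callable


class AgentState(Enum):
    IDLE = "idle"
    READING_TEXT = "reading_text"
    CHECKING_RULES = "checking_rules"
    DONE = "done"


# A listener receives (old_state, new_state) on every transition.
Listener = Callable[[AgentState, AgentState], None]


class Agent:
    """Hypothetical agent that publishes precise state transitions."""

    def __init__(self) -> None:
        self.state = AgentState.IDLE
        self._listeners: list[Listener] = []

    def on_transition(self, listener: Listener) -> None:
        """Register a callback, such as the UI layer, to hear every state change."""
        self._listeners.append(listener)

    def _set_state(self, new_state: AgentState) -> None:
        old, self.state = self.state, new_state
        for listener in self._listeners:
            listener(old, new_state)

    def review(self, document: str) -> None:
        self._set_state(AgentState.READING_TEXT)
        # ... parse the document (omitted in this sketch) ...
        self._set_state(AgentState.CHECKING_RULES)
        # ... compare against company rules (omitted in this sketch) ...
        self._set_state(AgentState.DONE)


agent = Agent()
agent.on_transition(lambda old, new: print(f"{old.value} -> {new.value}"))
agent.review("Sample contract text")
```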

Next, the Content Design team plays a vital role. While engineers provide the technical rationale, content designers translate this into clear, human-friendly explanations. A developer might propose "Executing function 402," which is technically accurate but meaningless to a user. A designer might suggest "Thinking," which is friendly but too vague. A skilled content strategist finds the precise middle ground, crafting phrases like "Scanning for liability risks" or "Comparing local vendor prices to secure your Friday delivery." These messages communicate the AI’s action and its intended outcome, building trust and understanding.
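A lightweight way to keep this division of labor explicit is to store reviewed copy separately from the code, keyed by the state identifiers engineers expose. The keys, the fallback text, and the logging behavior in this sketch are assumptions; the two messages are the examples above.

```python
import logging

logger = logging.getLogger(__name__)

# Hypothetical mapping from engineer-facing state IDs to content-designed copy.
STATUS_COPY = {
    "contract.scan_liability": "Scanning for liability risks",
    "inventory.compare_vendors": "Comparing local vendor prices to secure your Friday delivery",
}

# Generic placeholder used only until content design supplies specific wording.
FALLBACK = "Working on your request"


def user_facing_status(state_id: str) -> str:
    """Translate an internal state ID into reviewed, user-facing copy."""
    copy = STATUS_COPY.get(state_id)
    if copy is None:
        # Never show raw identifiers like "Executing function 402"; flag the gap instead.
        logger.warning("No reviewed copy for state %r", state_id)
        return FALLBACK
    return copy
```

Logging each miss gives the content team a concrete list of states that still need wording.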

Finally, continuous testing is indispensable. Even subtle variations in wording can significantly affect user perception. Qualitative sessions, combined with A/B tests of status messages (for instance, comparing "Verifying identity" with "Checking government databases"), can reveal which phrases build trust and which trigger anxiety. This iterative testing, ideally involving the entire cross-functional team, ensures that the final interface serves both the system’s logic and the user’s peace of mind.
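For the A/B comparison, each participant should see the same wording on every visit. A common technique is to assign variants by hashing a stable user ID, shown here with Python's standard `hashlib`. The experiment name and the even split between two variants are assumptions, and the assignment only decides who sees what; judging which wording builds trust still depends on the testing itself.

```python
import hashlib

VARIANTS = ("Verifying identity", "Checking government databases")


def assign_variant(user_id: str, experiment: str = "identity-status-copy") -> str:
    """Deterministically bucket a user into one status-message variant.

    The experiment name is a hypothetical salt so different tests bucket independently.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(VARIANTS)
    return VARIANTS[bucket]


for uid in ("user-1", "user-2", "user-3"):
    print(uid, "->", assign_variant(uid))
```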

Broader Implications: Trust, Adoption, and the Future of AI

The commitment to strategic transparency through methodologies like the Decision Node Audit extends far beyond mere UX improvement; it fundamentally shapes user adoption, brand reputation, and competitive advantage in the burgeoning AI landscape. Companies that master transparent AI design will foster deeper trust, leading to higher user engagement and loyalty. This proactive approach also positions organizations favorably for evolving regulations, such as the EU AI Act, which increasingly emphasize explainability and user control.

According to a recent IBM study, 80% of consumers believe that AI should be transparent about how it works. This demand is not just about ethical considerations but also about practical utility. When users understand an AI’s process, they are better equipped to provide feedback, correct errors, and leverage the system more effectively. This collaborative model of human-AI interaction points toward the future of intelligent systems, moving from a simple command-and-execute relationship to one of informed partnership. UX designers, in this context, are not just crafting interfaces; they are shaping the very perception of AI, acting as crucial intermediaries between complex technology and human understanding.

Conclusion

In the age of agentic AI, trust is not an emotional byproduct; it is a deliberate design choice, a mechanical result of predictable and timely communication. The "black box" and "data dump" approaches are no longer viable. By systematically applying tools like the Decision Node Audit and the Impact/Risk Matrix, teams can precisely identify moments where the AI makes a judgment call, open the box, and strategically show its work. This integrated, cross-functional approach ensures that transparency becomes an inherent feature of AI systems, empowering users, mitigating anxiety, and ultimately accelerating the ethical and effective adoption of these transformative technologies. The journey toward truly trustworthy AI is ongoing, and the next steps involve refining how these transparency moments are designed and written, and how they gracefully handle the inevitable instances when the agent gets it wrong.