NodeLLM 1.16: Advanced Tool Orchestration and Multimodal Manipulation

Background: The Imperative for Production-Grade AI Infrastructure

In the rapidly accelerating landscape of artificial intelligence, the demand for reliable, scalable, and adaptable infrastructure has never been more critical. As enterprises increasingly integrate large language models (LLMs) and multimodal AI into core operations, the need for robust development frameworks becomes paramount. NodeLLM, a prominent library in the JavaScript/TypeScript ecosystem, has emerged as a key player in this space, aiming to abstract away the complexities of interacting with diverse AI providers and models. Its mission is to empower developers to build sophisticated AI applications with greater efficiency and fewer headaches.

The journey from initial AI concept to production deployment is fraught with challenges. Developers must contend with varying API standards across different LLM providers (e.g., OpenAI, Anthropic, Google Gemini, AWS Bedrock, Mistral), manage diverse data types (text, images, audio), ensure data security, optimize performance, and, crucially, handle unexpected model behaviors or external system failures. Earlier versions of NodeLLM concentrated on simplifying initial integration, providing a unified interface for basic LLM interactions. However, as AI applications mature, particularly those involving multi-step "agentic workflows" where AI acts autonomously to achieve goals, the requirements for precision, reliability, and error recovery become far more stringent. NodeLLM 1.16 directly confronts these advanced challenges, offering a suite of features designed to instill confidence in deploying AI solutions at scale.

A Chronology of NodeLLM’s Evolution Towards Precision

NodeLLM’s development trajectory reflects the broader industry’s progression. Early releases (prior to v1.15) focused on fundamental interoperability, allowing developers to connect to various LLMs with minimal setup. Version 1.15 introduced crucial "Self-Correction middleware," a significant step towards building more resilient AI agents by enabling models to recognize and potentially rectify their own errors.

The release of 1.16 builds directly on this foundation, pushing the boundaries further. It represents a maturation point where the framework moves beyond mere connectivity to offering fine-grained control over AI model execution and expanding its support for diverse data modalities. This progression underscores a commitment to addressing the practical demands of building sophisticated AI systems that can operate reliably in dynamic, real-world environments. The timing of this release aligns with a period of intense innovation in multimodal AI and autonomous agents, positioning NodeLLM as a timely and relevant tool for developers pushing the envelope.

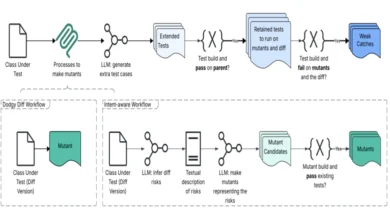

Advanced Image Manipulation: Beyond Basic Generation

One of the most significant advancements in NodeLLM 1.16 is support for high-fidelity image editing and manipulation, which goes beyond simple text-to-image generation. The update adds In-painting, Masking, and Variations, giving developers "surgical image edits" that previously required specialized graphics software or complex custom integrations.

The paint() method now accepts source images and masks, enabling highly targeted modifications. For instance, with OpenAI providers, NodeLLM routes these requests to the dedicated /v1/images/edits endpoint, which OpenAI serves with image models such as dall-e-2 and gpt-image-1 (two distinct models, not aliases of each other). Although newer generative models have emerged, this edit path remains well suited to precise, mask-based changes that stay coherent with the rest of the image.
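To make the role of the mask concrete, here is a minimal sketch that builds a rectangular mask as raw RGBA pixels. It assumes the convention OpenAI documents for its edits endpoint, where fully transparent mask pixels mark the region to repaint. `buildRectMask` and `Rect` are hypothetical names, not part of NodeLLM, and encoding the pixels as a PNG is left to an image library.

```typescript
// Hypothetical helper, not part of NodeLLM: builds raw RGBA mask pixels.
// Assumption: transparent pixels (alpha = 0) mark the area the model may
// repaint, and opaque pixels (alpha = 255) are preserved.
interface Rect {
  x: number;
  y: number;
  width: number;
  height: number;
}

function buildRectMask(width: number, height: number, edit: Rect): Uint8ClampedArray {
  const pixels = new Uint8ClampedArray(width * height * 4);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const inside =
        x >= edit.x && x < edit.x + edit.width &&
        y >= edit.y && y < edit.y + edit.height;
      const offset = (y * width + x) * 4;
      // RGB stays at 0; only the alpha channel carries the mask.
      pixels[offset + 3] = inside ? 0 : 255;
    }
  }
  return pixels;
}

// A 4x4 mask whose central 2x2 block is open for editing.
const mask = buildRectMask(4, 4, { x: 1, y: 1, width: 2, height: 2 });
```

The resulting buffer would still need to be encoded to an image file of the same dimensions as the source before being passed alongside it.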

This capability unlocks a myriad of applications across various industries. In e-commerce, businesses can rapidly customize product images, swapping backgrounds or modifying specific product features for different marketing campaigns without needing extensive manual graphic design. For creative agencies, it means faster iteration on visual concepts, allowing AI to assist in refining brand logos, generating variations of key visual assets, or performing complex scene edits. Game developers can leverage this for dynamic asset generation, modifying character elements or environmental textures on the fly. The underlying technical sophistication of handling source images, masks, and prompts through a unified API simplifies what would otherwise be a cumbersome multi-step process involving image processing libraries and direct API calls to specialized endpoints.

NodeLLM 1.16 also adds Image Variations and broader asset support. Developers can now create multiple visual variations of a source image without explicit prompts, which speeds up content generation and exploration. The framework accepts assets as base64-encoded images or direct URLs. A BinaryUtils layer converts these assets into provider-standard multipart formats, so developers no longer deal with binary boundaries, MIME types, and API-specific encoding requirements themselves, which reduces integration errors.
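The kind of normalization described here can be sketched as follows. This is an illustration under stated assumptions, not NodeLLM's BinaryUtils: `normalizeImageInput`, `decodeBase64`, and `sniffMimeType` are hypothetical names, and the sketch only distinguishes http(s) URLs, data URLs, and bare base64 strings.

```typescript
// Hypothetical sketch; NodeLLM's BinaryUtils may work differently.
type ImageAsset =
  | { kind: "remote"; url: string }
  | { kind: "inline"; mimeType: string; bytes: Uint8Array };

const BASE64_ALPHABET =
  "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

// Decodes standard base64 without relying on Buffer or atob.
function decodeBase64(input: string): Uint8Array {
  const clean = input.replace(/[\s=]/g, "");
  const bytes = new Uint8Array(Math.floor((clean.length * 6) / 8));
  let buffer = 0;
  let bits = 0;
  let index = 0;
  for (let i = 0; i < clean.length; i++) {
    const value = BASE64_ALPHABET.indexOf(clean.charAt(i));
    if (value < 0) throw new Error(`Invalid base64 character at position ${i}`);
    buffer = ((buffer << 6) | value) & 0xffff;
    bits += 6;
    if (bits >= 8) {
      bits -= 8;
      bytes[index++] = (buffer >> bits) & 0xff;
    }
  }
  return bytes;
}

// Guesses the MIME type from well-known file signatures (PNG and JPEG only).
function sniffMimeType(bytes: Uint8Array): string {
  const startsWith = (signature: number[]) =>
    signature.every((byte, i) => bytes[i] === byte);
  if (startsWith([0x89, 0x50, 0x4e, 0x47])) return "image/png";
  if (startsWith([0xff, 0xd8, 0xff])) return "image/jpeg";
  return "application/octet-stream";
}

function normalizeImageInput(input: string): ImageAsset {
  if (/^https?:\/\//i.test(input)) {
    return { kind: "remote", url: input };
  }
  const dataUrl = /^data:([^;,]+);base64,([\s\S]*)$/.exec(input);
  if (dataUrl) {
    return {
      kind: "inline",
      mimeType: dataUrl[1] ?? "application/octet-stream",
      bytes: decodeBase64(dataUrl[2] ?? ""),
    };
  }
  // Bare base64: decode first, then infer the type from the bytes.
  const bytes = decodeBase64(input);
  return { kind: "inline", mimeType: sniffMimeType(bytes), bytes };
}
```

A multipart encoder would then take the `inline` variant's bytes and MIME type as one form part, and fetch the `remote` variant first if the provider does not accept URLs directly.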

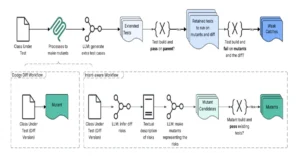

Precision Tool Orchestration: Mastering Agentic Workflows

The proliferation of "agentic workflows"—where AI models are empowered to perform complex, multi-step tasks by interacting with external tools and systems—has highlighted a critical need for precise control over tool usage. NodeLLM 1.16 introduces the choice and calls directives, fundamentally enhancing the framework’s ability to orchestrate these sophisticated interactions.

The Tool Choice directive controls how models interact with the available tools. Developers can now require that an LLM use a specific tool or prevent tool usage altogether. This mirrors OpenAI’s tool_choice parameter, but NodeLLM normalizes the behavior across all major providers, including Anthropic, Gemini, Bedrock, and Mistral. This cross-provider consistency lets developers build portable applications without writing provider-specific logic for tool handling. For instance, in an application where a model must retrieve real-time data before responding, choice: 'get_data_tool' forces the model to call that tool first, reducing the likelihood of speculative or incorrect responses.
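What such normalization involves can be sketched as a mapping from one unified value to provider-specific request fields. The sketch below is not NodeLLM's implementation: `toProviderToolChoice` is a hypothetical function, the reserved words `auto`, `none`, and `required` are assumptions, and the payload shapes follow the providers' public API documentation as understood at the time of writing. Bedrock and Mistral are omitted for brevity.

```typescript
// Hypothetical sketch of cross-provider tool-choice normalization.
// Any string that is not a reserved word is treated as a tool name,
// matching the article's example of choice: 'get_data_tool'.
const RESERVED = ["auto", "none", "required"] as const;
type ReservedChoice = (typeof RESERVED)[number];
type Provider = "openai" | "anthropic" | "gemini";

function isReserved(choice: string): choice is ReservedChoice {
  return (RESERVED as readonly string[]).indexOf(choice) >= 0;
}

function toProviderToolChoice(provider: Provider, choice: string): Record<string, unknown> {
  const reserved = isReserved(choice) ? choice : undefined;
  switch (provider) {
    case "openai":
      // OpenAI accepts the reserved words directly, or a function selector.
      return {
        tool_choice: reserved ?? { type: "function", function: { name: choice } },
      };
    case "anthropic": {
      // Anthropic expresses "must call some tool" as type "any".
      const types: Record<ReservedChoice, string> = {
        auto: "auto",
        none: "none",
        required: "any",
      };
      return {
        tool_choice: reserved ? { type: types[reserved] } : { type: "tool", name: choice },
      };
    }
    case "gemini": {
      // Gemini uses a mode plus an optional allow-list of function names.
      const modes: Record<ReservedChoice, string> = {
        auto: "AUTO",
        none: "NONE",
        required: "ANY",
      };
      return {
        toolConfig: {
          functionCallingConfig: reserved
            ? { mode: modes[reserved] }
            : { mode: "ANY", allowedFunctionNames: [choice] },
        },
      };
    }
  }
}
```

Application code would then pass the same value regardless of provider and let an adapter of this kind produce the request fragment.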

Equally vital is the introduction of Sequential Execution via calls: 'one'. Modern, highly capable LLMs often exhibit a tendency to attempt multiple tool calls in parallel when presented with complex requests. While this can be efficient, it frequently leads to "parallel hallucinations" or logical inconsistencies, especially when the outcome of a later tool call is dependent on the results of an earlier one. Consider an agent asked to "Find the weather in London, then book a flight if it’s sunny." If the model attempts to check the weather and book a flight simultaneously, it might try to book a flight before knowing the weather, leading to erroneous actions. By setting calls: 'one', developers can force the model to proceed sequentially, executing one tool call at a time and waiting for its output before deciding on the next action. This dramatically enhances the reliability and predictability of agentic workflows, particularly in sensitive applications such as financial transactions, complex data processing pipelines, or critical decision-making systems where accuracy and order of operations are paramount.
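The London example can be simulated with a toy agent loop. The source does not say how NodeLLM enforces calls: 'one' (a provider-level flag or client-side truncation are both plausible), so this sketch simply keeps the first proposed call per turn. `runAgent`, the scripted `eagerModel`, and both tools are hypothetical stand-ins.

```typescript
// Hypothetical simulation of parallel versus sequential tool execution.
interface ToolCall {
  name: string;
  args: Record<string, string>;
}
interface ToolResult {
  name: string;
  output: string;
}
type Model = (results: ToolResult[]) => ToolCall[];
type Tools = Record<string, (args: Record<string, string>) => string>;

function runAgent(model: Model, tools: Tools, mode: "many" | "one", maxTurns = 5): ToolResult[] {
  const results: ToolResult[] = [];
  for (let turn = 0; turn < maxTurns; turn++) {
    const proposed = model(results);
    if (proposed.length === 0) break;
    // Under "one", only the first proposed call runs; the model must
    // re-plan with its result before proposing anything else.
    const accepted = mode === "one" ? proposed.slice(0, 1) : proposed;
    for (const call of accepted) {
      const tool = tools[call.name];
      if (!tool) throw new Error(`Unknown tool: ${call.name}`);
      results.push({ name: call.name, output: tool(call.args) });
    }
  }
  return results;
}

const demoTools: Tools = {
  get_weather: () => "rainy",
  book_flight: (args) => `booked flight to ${args.city}`,
};

// A scripted "model" that eagerly proposes both calls on its first turn.
const eagerModel: Model = (results) => {
  const weather = results.find((r) => r.name === "get_weather");
  if (!weather) {
    return [
      { name: "get_weather", args: { city: "London" } },
      { name: "book_flight", args: { city: "London" } },
    ];
  }
  const booked = results.some((r) => r.name === "book_flight");
  return weather.output === "sunny" && !booked
    ? [{ name: "book_flight", args: { city: "London" } }]
    : [];
};

const parallelRun = runAgent(eagerModel, demoTools, "many");
const sequentialRun = runAgent(eagerModel, demoTools, "one");
```

In the parallel run the flight is booked despite the rain, because the booking was proposed before the weather result existed. In the sequential run the model sees "rainy" first and proposes nothing further.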

AI Self-Correction for Tool Failures: Enhancing Resilience

Building upon the robust Self-Correction middleware introduced in v1.15, NodeLLM 1.16 significantly hardens the tool execution pipeline, moving AI systems closer to a state of autonomous self-healing. This enhancement addresses a common failure point in agentic systems: models attempting to use non-existent tools or providing invalid arguments to legitimate ones.

Previously, such errors might have resulted in application-level exceptions, crashing the agent or requiring manual intervention. NodeLLM 1.16 now intelligently intercepts these failures. If a model attempts to call a tool that isn’t defined, the framework catches the error and provides a descriptive "unavailable tool" response, along with a list of valid tools. This immediate, contextual feedback allows the AI model to "instantly self-correct its proposal" within the same conversational turn, without external developer intervention.
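A minimal sketch of this interception pattern follows. It is an illustration, not NodeLLM's middleware: `executeToolCall`, `ToolMessage`, and the wording of the error text are all hypothetical. The key idea is that a bad tool name becomes a tool result the model can read, rather than an exception.

```typescript
// Hypothetical sketch: turn an unknown-tool call into feedback for the model.
interface ToolCall {
  id: string;
  name: string;
  args: unknown;
}
interface ToolMessage {
  role: "tool";
  toolCallId: string;
  content: string;
  isError: boolean;
}
type Handler = (args: unknown) => string;

function executeToolCall(call: ToolCall, registry: Map<string, Handler>): ToolMessage {
  const handler = registry.get(call.name);
  if (!handler) {
    // Instead of throwing, tell the model which tools actually exist.
    const available = Array.from(registry.keys()).join(", ");
    return {
      role: "tool",
      toolCallId: call.id,
      content: `Tool "${call.name}" is unavailable. Valid tools: ${available}.`,
      isError: true,
    };
  }
  try {
    return { role: "tool", toolCallId: call.id, content: handler(call.args), isError: false };
  } catch (error) {
    // Runtime failures inside a tool are also reported back as content.
    const message = error instanceof Error ? error.message : String(error);
    return {
      role: "tool",
      toolCallId: call.id,
      content: `Tool "${call.name}" failed: ${message}`,
      isError: true,
    };
  }
}

const registry = new Map<string, Handler>([
  ["get_weather", () => "sunny"],
  ["get_time", () => "12:00"],
]);

// The model misspells the tool name; the reply lists the valid options.
const feedback = executeToolCall({ id: "call_1", name: "get_wether", args: {} }, registry);
```

Appending `feedback` to the conversation gives the model what it needs to retry with a valid name on its next step.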

Similarly, if a model attempts to call a tool with arguments that fail validation—for example, providing a string where a number is expected, or missing a required parameter—NodeLLM, leveraging robust validation libraries like Zod, feeds back an "Invalid Arguments" result. This precise error message enables the agent to understand what went wrong and how to fix its input, allowing it to "fix its own mistakes." This closed-loop feedback mechanism is transformative for agent robustness. It reduces debugging cycles, improves the overall stability of AI applications, and moves the paradigm from "fail fast" to "recover gracefully," a hallmark of production-grade software. This intelligent error handling is critical for complex, long-running agentic processes where human oversight is impractical.
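The validation half of this loop can be sketched without any dependency. The hand-rolled `validateArgs` below is a hypothetical stand-in for a schema library (with Zod, a schema's `safeParse` would typically play this role), and the feedback wording is an assumption rather than NodeLLM's actual message.

```typescript
// Hypothetical sketch: validate tool arguments and describe what is wrong.
type FieldType = "string" | "number" | "boolean";
type Schema = Record<string, { type: FieldType; required: boolean }>;

function validateArgs(schema: Schema, args: Record<string, unknown>): string[] {
  const issues: string[] = [];
  for (const key of Object.keys(schema)) {
    const rule = schema[key];
    if (!rule) continue;
    const value = args[key];
    if (value === undefined) {
      if (rule.required) issues.push(`${key}: required`);
    } else if (typeof value !== rule.type) {
      issues.push(`${key}: expected ${rule.type}, received ${typeof value}`);
    }
  }
  return issues;
}

// Builds the message returned to the model in place of a tool result.
// An empty string means the arguments passed validation.
function toInvalidArgumentsFeedback(toolName: string, issues: string[]): string {
  return issues.length === 0
    ? ""
    : `Invalid Arguments for "${toolName}": ${issues.join("; ")}`;
}

const forecastSchema: Schema = {
  city: { type: "string", required: true },
  days: { type: "number", required: true },
};

// The model passes days as a string; the feedback names the exact field.
const issues = validateArgs(forecastSchema, { city: "London", days: "3" });
const argumentFeedback = toInvalidArgumentsFeedback("get_forecast", issues);
```

Because the message names the field and the expected type, the model can usually repair the call in one retry.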

Advanced Transcription & Diarization: Deeper Audio Intelligence

The audio processing capabilities within NodeLLM have also received a substantial upgrade, significantly enhancing its utility for applications requiring sophisticated voice intelligence. The Transcription interface now supports Word-level Timestamps and enriched Diarization (speaker tracking).

Word-level timestamps provide precise timing information for every word spoken in an audio recording. This granular detail is invaluable for a wide array of applications:

- Media Production: Editors can quickly locate specific spoken phrases in lengthy audio or video files, streamlining post-production workflows.

- Accessibility: Generating synchronized captions for videos becomes more accurate and automated, improving accessibility for hearing-impaired users.

- Legal & Compliance: In legal transcription, the ability to pinpoint exact timings of spoken words can be crucial for evidence review and verification.

- Education: Interactive learning platforms can use word-level timestamps to highlight text as it’s spoken, aiding language learning or lecture review.
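As one illustration of the captioning use case, the sketch below turns word-level timestamps into WebVTT cues. The `Word` shape (text plus start and end in seconds) is an assumption about what a transcription result might contain, not NodeLLM's actual response type, and `wordsToVtt` is a hypothetical helper.

```typescript
// Hypothetical word shape; times are in seconds.
interface Word {
  text: string;
  start: number;
  end: number;
}

function pad(value: number, width: number): string {
  let text = String(value);
  while (text.length < width) text = "0" + text;
  return text;
}

// Formats seconds as a WebVTT timestamp: hh:mm:ss.ttt
function formatVttTime(seconds: number): string {
  const totalMs = Math.round(seconds * 1000);
  const ms = totalMs % 1000;
  const totalSeconds = Math.floor(totalMs / 1000);
  const s = totalSeconds % 60;
  const m = Math.floor(totalSeconds / 60) % 60;
  const h = Math.floor(totalSeconds / 3600);
  return `${pad(h, 2)}:${pad(m, 2)}:${pad(s, 2)}.${pad(ms, 3)}`;
}

// Groups words into fixed-size cues; each cue spans from its first word's
// start to its last word's end.
function wordsToVtt(words: Word[], maxWordsPerCue: number): string {
  const cues: string[] = [];
  for (let i = 0; i < words.length; i += maxWordsPerCue) {
    const chunk = words.slice(i, i + maxWordsPerCue);
    const first = chunk[0];
    const last = chunk[chunk.length - 1];
    if (!first || !last) continue;
    const timing = `${formatVttTime(first.start)} --> ${formatVttTime(last.end)}`;
    cues.push(`${timing}\n${chunk.map((w) => w.text).join(" ")}`);
  }
  return ["WEBVTT", ...cues].join("\n\n") + "\n";
}

const vtt = wordsToVtt(
  [
    { text: "Hello", start: 0, end: 0.4 },
    { text: "world", start: 0.5, end: 0.9 },
    { text: "again", start: 1.0, end: 1.4 },
  ],
  2,
);
```

A production captioner would more likely break cues on pauses or punctuation than on a fixed word count, but the timing arithmetic stays the same.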

Enhanced Diarization takes audio analysis a step further by accurately identifying and tracking individual speakers within a conversation. This feature is particularly impactful for:

- Meeting Summarization: Automatically generating summaries that attribute specific statements to individual participants, greatly improving the utility of meeting notes.

- Call Center Analytics: Analyzing customer service calls to understand interactions between agents and customers, identifying key speakers and their contributions.

- Multi-Speaker Interviews: Transcribing interviews with multiple participants, providing a clear record of who said what, without requiring manual annotation.

- Voice Biometrics & Security: Although the release does not list this as a feature, improved diarization lays the groundwork for more advanced speaker identification and verification systems.
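For the meeting and call-center cases above, diarized output usually needs to be folded into speaker turns before it is useful. The sketch below assumes segments arrive as speaker label, text, and start and end times in seconds. That `Segment` shape and both helper functions are hypothetical, not NodeLLM's response format.

```typescript
// Hypothetical diarized segment shape; times are in seconds.
interface Segment {
  speaker: string;
  text: string;
  start: number;
  end: number;
}

// Merges consecutive segments from the same speaker into a single turn.
// The input array is left unmodified.
function mergeSpeakerTurns(segments: Segment[]): Segment[] {
  const turns: Segment[] = [];
  for (const segment of segments) {
    const previous = turns[turns.length - 1];
    if (previous && previous.speaker === segment.speaker) {
      previous.text += " " + segment.text;
      previous.end = segment.end;
    } else {
      turns.push({ ...segment });
    }
  }
  return turns;
}

// Sums speaking time per speaker, e.g. for call-center analytics.
function talkTimeBySpeaker(turns: Segment[]): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const turn of turns) {
    totals[turn.speaker] = (totals[turn.speaker] ?? 0) + (turn.end - turn.start);
  }
  return totals;
}

const speakerTurns = mergeSpeakerTurns([
  { speaker: "A", text: "Hi", start: 0, end: 2 },
  { speaker: "A", text: "there", start: 2, end: 5 },
  { speaker: "B", text: "Hello", start: 5, end: 9 },
  { speaker: "A", text: "Bye", start: 9, end: 10 },
]);
const talkTime = talkTimeBySpeaker(speakerTurns);
```

The merged turns map directly onto a "who said what" transcript, and the per-speaker totals give a simple measure of participation.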

These audio enhancements leverage sophisticated speech recognition and machine learning models, offering a level of detail and accuracy previously only available through highly specialized, often expensive, services. By integrating these capabilities directly into NodeLLM, developers gain access to powerful audio intelligence tools within a unified framework, simplifying the development of multimodal applications that seamlessly blend text, image, and audio processing.

Broader Impact and Implications for the AI Ecosystem

NodeLLM 1.16 represents more than just a collection of new features; it signifies a maturing ecosystem for AI development. By focusing on "surgical control" and "multimodal parity," NodeLLM addresses critical pain points for developers transitioning AI prototypes into production-grade systems.

- For Developers: The framework significantly reduces the boilerplate and complexity associated with integrating diverse AI models and modalities. Features like cross-provider tool normalization and intelligent error handling free developers to focus on application logic rather than low-level API intricacies and debugging unpredictable model behaviors. This leads to faster development cycles, more robust applications, and a lower barrier to entry for building sophisticated AI agents.

- For Businesses: This release empowers organizations to deploy more reliable, precise, and sophisticated AI solutions. Industries ranging from creative arts and e-commerce to customer service and legal tech can leverage these capabilities to unlock new use cases, automate complex processes, and enhance user experiences. The emphasis on self-correction and controlled execution directly translates to increased operational stability and reduced risk in AI-driven operations.

- For the AI Ecosystem: NodeLLM’s continued evolution contributes to the professionalization of AI development tools. As more frameworks offer such granular control and multimodal capabilities, it drives innovation across the board, pushing the boundaries of what AI agents can reliably achieve. It also underscores the growing importance of abstraction layers that simplify the rapidly diversifying landscape of AI models and providers.

A spokesperson for NodeLLM, likely reflecting the insights of lead developers like Shaiju Edakulangara, would emphasize that "this release is a testament to our commitment to providing developers with the tools they need to build truly resilient and intelligent AI applications. We believe that surgical control over model behavior and seamless multimodal capabilities are no longer luxuries but necessities for anyone serious about deploying AI in the real world." Early reactions from the developer community are anticipated to highlight the immediate practical benefits, particularly the improved reliability of agentic workflows and the streamlined handling of image and audio data. Experts in the field are likely to laud NodeLLM 1.16 as a significant step towards democratizing access to advanced AI capabilities, making them more manageable and predictable for a wider range of developers and enterprises.

Getting Started and Future Outlook

For developers eager to try these capabilities, NodeLLM 1.16.0 is available via npm under the @node-llm scope at version 1.16.0. This "Big Release" consolidates numerous architectural refinements and bug fixes, further solidifying the framework’s foundation. The complete list of changes can be found in the official Commit History and CHANGELOG.

NodeLLM 1.16 is more than just an update; it’s a strategic enhancement that positions the framework as a crucial component for building the next generation of intelligent, reliable, and adaptable AI applications. By prioritizing precision control and multimodal integration, NodeLLM continues to pave the way for developers to confidently deploy AI systems that can operate effectively in the complex, dynamic environments of modern production applications.