The speechSynthesis API: A Powerful, Underutilized Tool for Enhancing Web Accessibility and User Experience

As the digital landscape continues its inexorable expansion, serving as the primary medium for information, commerce, and social interaction for billions worldwide, the imperative for web standards bodies to continually innovate and provide new Application Programming Interfaces (APIs) has never been more critical. These advancements are not merely about adding novel functionalities; they are fundamentally about enriching user experience and, crucially, fostering greater accessibility. Among the array of powerful web APIs, one often overlooked yet profoundly impactful tool for visually impaired users is speechSynthesis. This API offers developers the ability to programmatically direct a browser to audibly articulate any arbitrary string of text, transforming static content into dynamic spoken word. Its potential to augment existing accessibility tools and create more inclusive web environments warrants a deeper examination of its capabilities, applications, and implications for the future of web development.

The journey towards a universally accessible web has been a protracted yet steadfast one, driven by both technological innovation and a growing societal awareness of digital inclusion. Early efforts, dating back to the mid-1990s, recognized the inherent barriers the web posed for individuals with disabilities. This led to the formation of initiatives like the Web Accessibility Initiative (WAI) by the World Wide Web Consortium (W3C) in 1997, which subsequently developed the Web Content Accessibility Guidelines (WCAG). These guidelines, now in their latest iteration (WCAG 2.2), provide a comprehensive framework for making web content more accessible to people with a wide range of disabilities, including visual, auditory, physical, speech, cognitive, language, learning, and neurological disabilities. Concurrently, legislative frameworks such as Section 508 of the Rehabilitation Act in the United States and the European Accessibility Act have mandated accessibility standards, pushing developers and organizations to integrate inclusive design principles.

Within this evolving context, assistive technologies have played a pivotal role. Screen readers, such as JAWS, NVDA, and Apple’s VoiceOver, have become indispensable tools for blind and low-vision users, interpreting visual elements and presenting them as speech or braille output. However, while these native tools are robust, they operate on a broad interpretative layer. The speechSynthesis API introduces a more granular, developer-controlled layer of spoken feedback, offering the ability to provide specific, contextual audio cues that might otherwise be difficult for a generic screen reader to convey with the same precision. It represents a significant step towards a web where developers can directly enhance auditory feedback, complementing, rather than replacing, established assistive technologies.

Diving into the speechSynthesis API: Technical Foundations

The core functionality of the speechSynthesis API is surprisingly straightforward, yet its underlying mechanisms are robust. At its heart, the API allows web applications to convert text into spoken audio directly within the browser, typically using the operating system’s built-in text-to-speech engines, although some browsers also offer network-based voices. The primary entry point for this functionality is the window.speechSynthesis object, which provides an interface to control the speech service.

To initiate speech, a developer creates an instance of SpeechSynthesisUtterance, which acts as a container for the text to be spoken and various parameters that control the speech’s characteristics. The most basic implementation, as highlighted in developer documentation, involves a minimal code snippet:

    window.speechSynthesis.speak(
      new SpeechSynthesisUtterance('Hey Jude!')
    );

This single statement triggers the browser to vocalize the phrase "Hey Jude!" using its default voice settings. This immediate feedback mechanism demonstrates the API’s power in providing instant auditory responses. The speechSynthesis.speak() method adds the SpeechSynthesisUtterance object to a queue. If another utterance is already being spoken, the new one waits in the queue and is spoken after the current one completes.

Beyond this fundamental operation, the SpeechSynthesisUtterance object exposes a range of properties that allow developers to fine-tune the auditory output, offering a level of control that can significantly enhance the user experience. These properties include:

- text: The string of text that the speech service will utter.

- lang: Specifies the language of the speech. This is crucial for correct pronunciation and intonation, as different languages have distinct phonologies. For example, setting lang = 'es-ES' would ensure Spanish pronunciation.

- voice: Allows developers to select a specific voice from the list of available voices on the user’s system. The speechSynthesis.getVoices() method can retrieve an array of SpeechSynthesisVoice objects, each representing an available voice. This enables customization to match brand identity or user preference.

- pitch: Controls the pitch of the voice, ranging from 0 (lowest) to 2 (highest), with 1 being the default. Adjusting pitch can help differentiate between various types of spoken content or emphasize certain words.

- rate: Sets the speed at which the utterance is spoken, with values typically ranging from 0.1 (slowest) to 10 (fastest), where 1 is the default. This is particularly useful for users who might prefer a slower pace for comprehension or a faster pace for reviewing familiar content.

- volume: Dictates the volume of the speech, ranging from 0 (silent) to 1 (maximum), with 1 being the default. This allows developers to integrate speech harmoniously with other audio elements on the page.
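As a rough illustration of how these properties fit together, the following minimal sketch builds a configured utterance and keeps pitch, rate, and volume inside the ranges listed above. The helper names clamp and buildUtterance are hypothetical and not part of the API, and the utterance constructor is passed in as a parameter only so the helper can also be exercised outside a browser.

```javascript
// Hypothetical helper: keep a number inside the documented range for a property.
function clamp(value, min, max) {
  return Math.min(max, Math.max(min, value));
}

// Hypothetical helper: build a configured utterance.
// `UtteranceCtor` is expected to be window.SpeechSynthesisUtterance in a browser.
function buildUtterance(UtteranceCtor, text, options = {}) {
  const utterance = new UtteranceCtor(text);
  if (options.lang) {
    utterance.lang = options.lang;                     // language tag such as 'es-ES'
  }
  if (options.voice) {
    utterance.voice = options.voice;                   // a SpeechSynthesisVoice object
  }
  utterance.pitch = clamp(options.pitch ?? 1, 0, 2);   // 0 to 2, default 1
  utterance.rate = clamp(options.rate ?? 1, 0.1, 10);  // 0.1 to 10, default 1
  utterance.volume = clamp(options.volume ?? 1, 0, 1); // 0 to 1, default 1
  return utterance;
}

// Browser usage (assumes the API is available):
// const u = buildUtterance(SpeechSynthesisUtterance, 'Hola, mundo', { lang: 'es-ES', rate: 0.9 });
// window.speechSynthesis.speak(u);
```

Clamping is a design choice of this sketch rather than a requirement; it simply makes the effective value predictable when the options come from user-adjustable settings.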

Moreover, each SpeechSynthesisUtterance exposes event handlers that let developers react to the lifecycle of an utterance. These include onstart (when the speech begins), onend (when it finishes), onerror (if an error occurs), onpause, onresume, and onboundary (when a word or sentence boundary is reached). These handlers facilitate more sophisticated interactions, such as displaying a visual indicator when speech is active or logging errors for debugging.
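One way to work with these lifecycle events is to wrap them in a Promise, so that calling code can wait for an utterance to finish and update a visual indicator along the way. The sketch below is illustrative only: speakAsync and the onStateChange callback are hypothetical names, and the state strings are arbitrary.

```javascript
// Hypothetical helper: speak an utterance and settle when it ends or fails.
// `synth` is expected to be window.speechSynthesis in a browser.
function speakAsync(synth, utterance, onStateChange = () => {}) {
  return new Promise((resolve, reject) => {
    utterance.onstart = () => onStateChange('speaking');
    utterance.onpause = () => onStateChange('paused');
    utterance.onresume = () => onStateChange('speaking');
    utterance.onend = () => {
      onStateChange('idle');
      resolve();
    };
    utterance.onerror = (event) => {
      onStateChange('idle');
      // The error event carries a short error code in `event.error`.
      reject(new Error(`Speech failed: ${event.error}`));
    };
    synth.speak(utterance);
  });
}

// Browser usage (assumes an element with id "speaking-indicator" exists):
// const indicator = document.getElementById('speaking-indicator');
// speakAsync(window.speechSynthesis, new SpeechSynthesisUtterance('Hey Jude!'),
//   (state) => { indicator.hidden = state !== 'speaking'; })
//   .catch((err) => console.error(err));
```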

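The speechSynthesis API is supported in current versions of the major browsers, including Chrome, Firefox, Safari, Edge, and Opera, which underscores its stability and readiness for widespread implementation. Support is not perfectly uniform, however: the set of available voices depends on the browser and operating system, the voice list may load asynchronously, and some browsers only allow speech after a user interaction. Developers should therefore feature-detect the API and test on their target platforms rather than assume identical behavior everywhere.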
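Because the installed voices differ between platforms, and getVoices() can return an empty array until the browser has finished loading them, voice selection usually needs a small amount of defensive code. The following is a minimal sketch under those assumptions; pickVoice, loadVoices, and the two-second timeout are hypothetical choices, not part of the API.

```javascript
// Hypothetical helper: pick a voice for a language tag such as 'es-ES'.
// Prefers an exact match, then any voice sharing the primary language subtag.
function pickVoice(voices, lang) {
  // Defensive normalization: tolerate case differences and underscore separators.
  const norm = (tag) => tag.toLowerCase().replace('_', '-');
  const wanted = norm(lang);
  const primary = wanted.split('-')[0];
  return (
    voices.find((v) => norm(v.lang) === wanted) ||
    voices.find((v) => norm(v.lang).split('-')[0] === primary) ||
    null
  );
}

// Hypothetical helper: resolve with the voice list, waiting for the
// 'voiceschanged' event (or a timeout) when the list is still empty.
// `synth` is expected to be window.speechSynthesis in a browser.
function loadVoices(synth, timeoutMs = 2000) {
  return new Promise((resolve) => {
    const initial = synth.getVoices();
    if (initial.length > 0) {
      resolve(initial);
      return;
    }
    const canListen = typeof synth.addEventListener === 'function';
    const finish = () => {
      clearTimeout(timer);
      if (canListen) synth.removeEventListener('voiceschanged', finish);
      resolve(synth.getVoices()); // may still be empty if no voices are installed
    };
    const timer = setTimeout(finish, timeoutMs);
    if (canListen) synth.addEventListener('voiceschanged', finish);
  });
}

// Browser usage:
// loadVoices(window.speechSynthesis).then((voices) => {
//   const utterance = new SpeechSynthesisUtterance('Hola');
//   const voice = pickVoice(voices, 'es-ES');
//   if (voice) utterance.voice = voice;
//   utterance.lang = 'es-ES';
//   window.speechSynthesis.speak(utterance);
// });
```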

Transformative Applications and Use Cases

While the speechSynthesis API is not intended as a wholesale replacement for dedicated native screen readers, its strategic application can profoundly enhance web accessibility and user engagement across a multitude of scenarios. Its strength lies in providing contextual, on-demand, and highly specific audio feedback that can complement the broader information delivery of a screen reader.

-

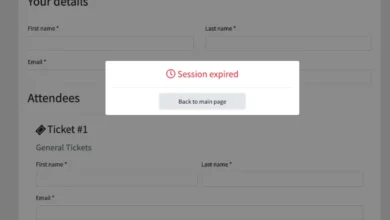

Contextual Accessibility for Visually Impaired Users: Beyond merely reading out page content,

speechSynthesiscan be employed to provide critical, real-time feedback for dynamic elements. For instance, in complex web forms, it can audibly announce validation errors as they occur (e.g., "Error: Email format invalid"), guiding the user without requiring them to navigate back through the form or rely solely on visual cues. Similarly, for single-page applications (SPAs) where content updates asynchronously, the API can announce changes to critical regions, such as "New notification received" or "Search results updated." This proactive feedback mitigates the risk of users missing crucial information due to dynamic content shifts that screen readers might not immediately detect or announce. -

Interactive Tutorials and Onboarding: For new users or complex interfaces,

speechSynthesiscan power interactive audio tutorials. A website could guide a user through its features step-by-step, explaining elements as they are highlighted or interacted with. This "audio coach" approach can significantly reduce cognitive load and improve the onboarding experience, especially for users who prefer auditory learning or have difficulty processing visual information rapidly. -

Language Learning and Pronunciation Guides: Educational platforms can leverage

speechSynthesisto provide accurate pronunciation for words and phrases in various languages. By simply clicking on a word, users could hear its correct articulation, making it an invaluable tool for language learners. The ability to switch voices and languages dynamically further enhances its utility in this domain. -

Gaming and Immersive Experiences: In web-based games or interactive narratives, the API can deliver character dialogue, environmental cues, or gameplay instructions, adding an auditory dimension that enriches immersion. For instance, a text-based adventure game could use

speechSynthesisto narrate events, making the experience more engaging and accessible to users who might struggle with reading large blocks of text. -

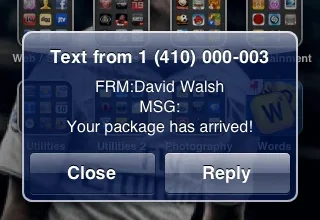

- Notifications and Alerts: Beyond critical errors, speechSynthesis can provide non-intrusive auditory alerts for less urgent notifications. Imagine a productivity tool announcing "Task completed" or a news aggregator reading out a headline, allowing users to stay informed without constantly monitoring the screen. The customizability of voice, pitch, and volume ensures these alerts can be integrated subtly into the user’s workflow.

- Accessibility Overlays and Widgets: Developers building custom accessibility tools or overlays for their websites can integrate speechSynthesis to power features like "Read Aloud" buttons for selected text, or to provide audio descriptions for images and complex data visualizations when a user hovers over or focuses on them. This empowers users to customize their consumption of web content based on their individual needs.
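A "Read Aloud" feature of the kind described in the last item can be sketched by splitting the selected text into sentence-sized pieces and queuing one utterance per piece, which relies on the queuing behavior of speak() described earlier. The names splitIntoChunks and readAloud and the 200-character limit are hypothetical, and the sentence splitting is deliberately naive.

```javascript
// Hypothetical helper: group sentences into chunks of at most maxLength characters.
// A single sentence longer than maxLength is kept whole rather than cut mid-sentence.
function splitIntoChunks(text, maxLength = 200) {
  const sentences = text.match(/[^.!?]+[.!?]*\s*/g) || [];
  const chunks = [];
  let current = '';
  for (const sentence of sentences) {
    if (current && (current + sentence).length > maxLength) {
      chunks.push(current.trim());
      current = '';
    }
    current += sentence;
  }
  if (current.trim()) chunks.push(current.trim());
  return chunks;
}

// Hypothetical helper: queue one utterance per chunk and return the chunk count.
// `synth` is expected to be window.speechSynthesis and `UtteranceCtor`
// window.SpeechSynthesisUtterance in a browser.
function readAloud(synth, UtteranceCtor, text, maxLength = 200) {
  synth.cancel(); // drop anything still queued from an earlier request
  const chunks = splitIntoChunks(text, maxLength);
  for (const chunk of chunks) {
    synth.speak(new UtteranceCtor(chunk));
  }
  return chunks.length;
}

// Browser usage (assumes a button with id "read-aloud" exists):
// document.getElementById('read-aloud').addEventListener('click', () => {
//   readAloud(window.speechSynthesis, SpeechSynthesisUtterance,
//     window.getSelection().toString());
// });
```

Queuing shorter utterances keeps each piece individually cancellable, so a stop button takes effect without a long utterance having to be abandoned part-way through its setup.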

Complementing Native Assistive Technologies: A Symbiotic Relationship

It is imperative to reiterate that the speechSynthesis API is not designed to replace native screen readers but rather to complement them. Screen readers are comprehensive tools built to interpret the entire Document Object Model (DOM) and provide a holistic representation of a web page’s structure and content. They handle navigation, focus management, and provide access to a wide array of interactive elements.

However, native screen readers can sometimes be overwhelming, particularly on complex pages with dynamic content. They might announce every element in a linear fashion, potentially obscuring the most critical information or making it difficult for users to grasp the immediate context of an interaction. This is where speechSynthesis shines. By allowing developers to programmatically control what is spoken and when, it can provide precise, targeted feedback.

For example, when a user submits a form and an error message appears in a specific, non-standard location, a screen reader might eventually announce it, but a developer using speechSynthesis could immediately vocalize "Please correct the highlighted fields" as soon as the error state is detected. This directness can significantly improve efficiency and reduce frustration for users relying on auditory feedback. Accessibility advocates often emphasize the principle of "user choice and control," and speechSynthesis offers developers a powerful mechanism to empower users with more tailored auditory experiences. Organizations like the W3C consistently advocate for a multi-faceted approach to accessibility, where browser APIs work in concert with native technologies and good semantic HTML practices.
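The form-error scenario above might be sketched as follows. The assumption here is that the message is always written to an ARIA live region, so screen readers can announce it in the usual way, and that synthesized speech is an additional, opt-in channel. The announce function, its option names, and the speechEnabled flag are all hypothetical.

```javascript
// Hypothetical helper: publish a message to a live region and, if the user
// has opted in, also speak it. Returns true when speech was requested.
// `liveRegion` is expected to be an element such as <div aria-live="polite">,
// `synth` window.speechSynthesis, and `UtteranceCtor` window.SpeechSynthesisUtterance.
function announce(message, { liveRegion, synth, UtteranceCtor, speechEnabled = false }) {
  // Always update the live region so assistive technologies can pick it up.
  liveRegion.textContent = message;

  // Speech stays off unless enabled, which avoids talking over a screen reader.
  if (!speechEnabled || !synth) return false;

  synth.cancel(); // the newest message replaces anything still being spoken
  synth.speak(new UtteranceCtor(message));
  return true;
}

// Browser usage (assumes an element with id "form-status" exists):
// announce('Please correct the highlighted fields', {
//   liveRegion: document.getElementById('form-status'),
//   synth: window.speechSynthesis,
//   UtteranceCtor: window.SpeechSynthesisUtterance,
//   speechEnabled: true,
// });
```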

Challenges and Thoughtful Implementation

Despite its immense potential, developers must approach the implementation of speechSynthesis with careful consideration to avoid creating a detrimental user experience.

- Avoiding Auditory Overload: The most significant pitfall is overuse. A website that constantly speaks could quickly become irritating and counterproductive, especially for users who are already using a screen reader or prefer visual information. Developers must ensure speech is purposeful, concise, and optional.

- Voice Quality and Naturalness: While browser-native voices have improved significantly, they can still sound robotic compared to advanced cloud-based Text-to-Speech (TTS) services powered by artificial intelligence. Developers should test different voices and consider user preferences, perhaps offering a choice of voices where appropriate.

- Performance and Resource Management: While synthesizing short utterances is generally lightweight, synthesizing very long texts or numerous utterances in quick succession could potentially consume more resources. Developers should optimize their usage and consider buffering or canceling previous utterances if new, more critical information needs to be spoken.

- User Preferences and Control: Crucially, users must have control over the speech output. This includes mechanisms to pause, resume, stop, or mute the speech. A dedicated toggle or settings panel for speech preferences is essential to respect user autonomy.

- Internationalization (i18n) and Localization (l10n): For global applications, ensuring the correct language and accent is crucial. The lang property is vital, and developers should ensure their applications dynamically select appropriate voices based on the user’s preferred language settings.

- Redundancy with Native Screen Readers: Developers must ensure that the information conveyed via speechSynthesis is not only available through this API. All critical information must still be accessible through standard semantic HTML and ARIA attributes, so it can be interpreted correctly by native screen readers. The speechSynthesis API should enhance, not replace, fundamental accessibility practices.
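The user-control point in the list above can be illustrated with a small wrapper around the pause(), resume(), and cancel() methods of the speech service. This is a sketch only: createSpeechControls is a hypothetical name, and defaulting speech to off is an assumption made here to keep the feature opt-in.

```javascript
// Hypothetical helper: expose pause, resume, stop, and an on/off preference.
// `synth` is expected to be window.speechSynthesis in a browser.
function createSpeechControls(synth, initiallyEnabled = false) {
  let enabled = initiallyEnabled;
  return {
    pause() {
      if (synth.speaking && !synth.paused) synth.pause();
    },
    resume() {
      if (synth.paused) synth.resume();
    },
    stop() {
      synth.cancel(); // stops the current utterance and empties the queue
    },
    setEnabled(value) {
      enabled = Boolean(value);
      if (!enabled) synth.cancel(); // turning speech off also silences it at once
    },
    isEnabled() {
      return enabled;
    },
  };
}

// Browser usage (assumes a checkbox with id "speech-toggle" exists):
// const controls = createSpeechControls(window.speechSynthesis);
// document.getElementById('speech-toggle').addEventListener('change', (event) => {
//   controls.setEnabled(event.target.checked);
// });
```

Code that triggers speech would then check isEnabled() before calling speak(), so a single preference governs every spoken message on the page.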

Broader Implications and the Future of Inclusive Web Design

The ongoing development and wider adoption of APIs like speechSynthesis signal a clear trajectory towards a more inclusive and adaptable web. The commitment from browser vendors and standards bodies to provide such tools reflects a growing understanding that accessibility is not a niche concern but a fundamental aspect of good web design. By empowering developers with direct control over auditory feedback, these APIs facilitate the creation of web experiences that can cater to a broader spectrum of human abilities and preferences.

The implications extend beyond mere compliance with accessibility guidelines. A more accessible web translates into a larger user base, enhanced user satisfaction, and potentially significant economic benefits for businesses. As AI and machine learning continue to advance, we can anticipate future iterations of text-to-speech technology that offer even more natural-sounding voices, emotional inflection, and tighter integration with contextual understanding, further blurring the lines between human and synthesized speech.

In conclusion, the speechSynthesis API stands as a powerful, yet often underutilized, instrument in the web developer’s toolkit for crafting genuinely accessible and engaging digital experiences. Its ability to provide precise, developer-controlled auditory feedback offers a valuable complement to existing native assistive technologies, opening new avenues for interactive learning, contextual guidance, and immersive content delivery. The responsibility now lies with the developer community to embrace this API, understand its nuances, and implement it thoughtfully and ethically, ensuring that the web continues to evolve as an inclusive space for all users. By doing so, we move closer to the vision of a truly universal web, where technology adapts to human needs, rather than the other way around.