AWS Bedrock Welcomes Anthropic’s Claude Opus 4.7, Ushering in New Era of Advanced AI Capabilities for Developers

Amazon Web Services (AWS) has announced the integration of Anthropic’s latest and most advanced large language model, Claude Opus 4.7, into Amazon Bedrock. This significant update promises to enhance performance across a range of complex tasks, including sophisticated coding, long-running agentic workflows, and demanding professional applications. The availability of Claude Opus 4.7 on Bedrock signifies a crucial step forward in providing developers and enterprises with cutting-edge AI tools designed for production-grade workloads.

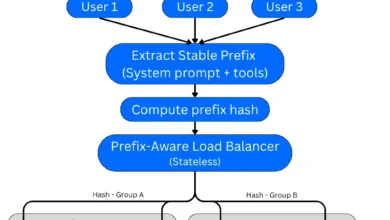

The integration runs on Bedrock’s next-generation inference engine, which AWS built to provide enterprise-grade infrastructure for production workloads. The engine uses updated scheduling and scaling logic that allocates compute capacity dynamically as requests arrive. This helps keep availability high for steady-state workloads while still absorbing sudden demand from services that scale rapidly. The integration also provides zero operator access: customer prompts and responses are never visible to Anthropic or AWS operators, which keeps sensitive data confidential.

Anthropic has highlighted that Claude Opus 4.7 represents a notable improvement over its predecessor, Opus 4.6, offering enhanced capabilities in agentic coding, knowledge work, visual understanding, and the execution of long-running tasks. The model demonstrates a heightened ability to navigate ambiguity, a more thorough approach to problem-solving, and a more precise adherence to user instructions. This evolution is expected to empower developers to build more intelligent and responsive AI-driven applications.

Background and Development Context

The release of Claude Opus 4.7 on Amazon Bedrock follows a period of intense development and refinement in the field of artificial intelligence, particularly in the domain of large language models (LLMs). Anthropic, a leading AI safety and research company, has been at the forefront of developing AI systems that are not only powerful but also aligned with human values. The partnership with AWS, a dominant cloud computing provider, aims to democratize access to these advanced AI models, making them readily available to a broad spectrum of businesses and developers.

Amazon Bedrock itself was launched with the objective of simplifying the process of building and scaling generative AI applications. By offering a choice of leading foundation models from various AI companies through a single API, Bedrock allows developers to experiment, customize, and deploy AI models without the complexities of managing underlying infrastructure. The addition of Anthropic’s most capable model to this platform underscores AWS’s commitment to providing a comprehensive and versatile AI development environment.

Enhanced Performance and Capabilities

According to Anthropic’s internal assessments and preliminary user feedback, Claude Opus 4.7 exhibits marked improvements across several key performance indicators. Its enhanced reasoning abilities allow it to tackle more complex problems with greater accuracy. The model’s proficiency in understanding and generating code is particularly noteworthy, potentially accelerating software development cycles and enabling the creation of more sophisticated applications.

For long-running agentic workflows, which often involve multiple steps and interactions, Claude Opus 4.7’s improved context management and task execution capabilities are expected to yield more reliable and efficient outcomes. This is critical for applications such as customer service bots that handle extended conversations, automated research assistants, or complex process orchestration tools.

The model’s enhanced visual understanding capabilities suggest a greater capacity to interpret and respond to prompts that involve image analysis or multimodal inputs, opening up new avenues for AI applications in areas like content moderation, data analysis from visual sources, and enhanced accessibility tools.
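To make the multimodal point concrete, the sketch below builds a Messages API user turn that pairs an image with a text question. It follows the content-block shape Anthropic documents for base64 images (`type`, `source`, `media_type`, `data`). The helper name `build_image_message` and the placeholder bytes are hypothetical; the resulting dict would go into the `messages` list of a Messages API request.

```python
import base64


def build_image_message(image_bytes: bytes, media_type: str, question: str) -> dict:
    """Build one user turn containing an image block followed by a text block.

    Hypothetical helper; the block layout follows the Anthropic Messages API
    convention for base64-encoded images.
    """
    encoded = base64.standard_b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {"type": "base64", "media_type": media_type, "data": encoded},
            },
            {"type": "text", "text": question},
        ],
    }


# Placeholder bytes stand in for a real PNG file read from disk.
msg = build_image_message(b"\x89PNG placeholder", "image/png", "What does this chart show?")
print(msg["content"][0]["source"]["media_type"])
```

In practice the bytes would come from something like `Path("chart.png").read_bytes()`, with `media_type` matching the actual file format.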

Technical Integration and Developer Experience

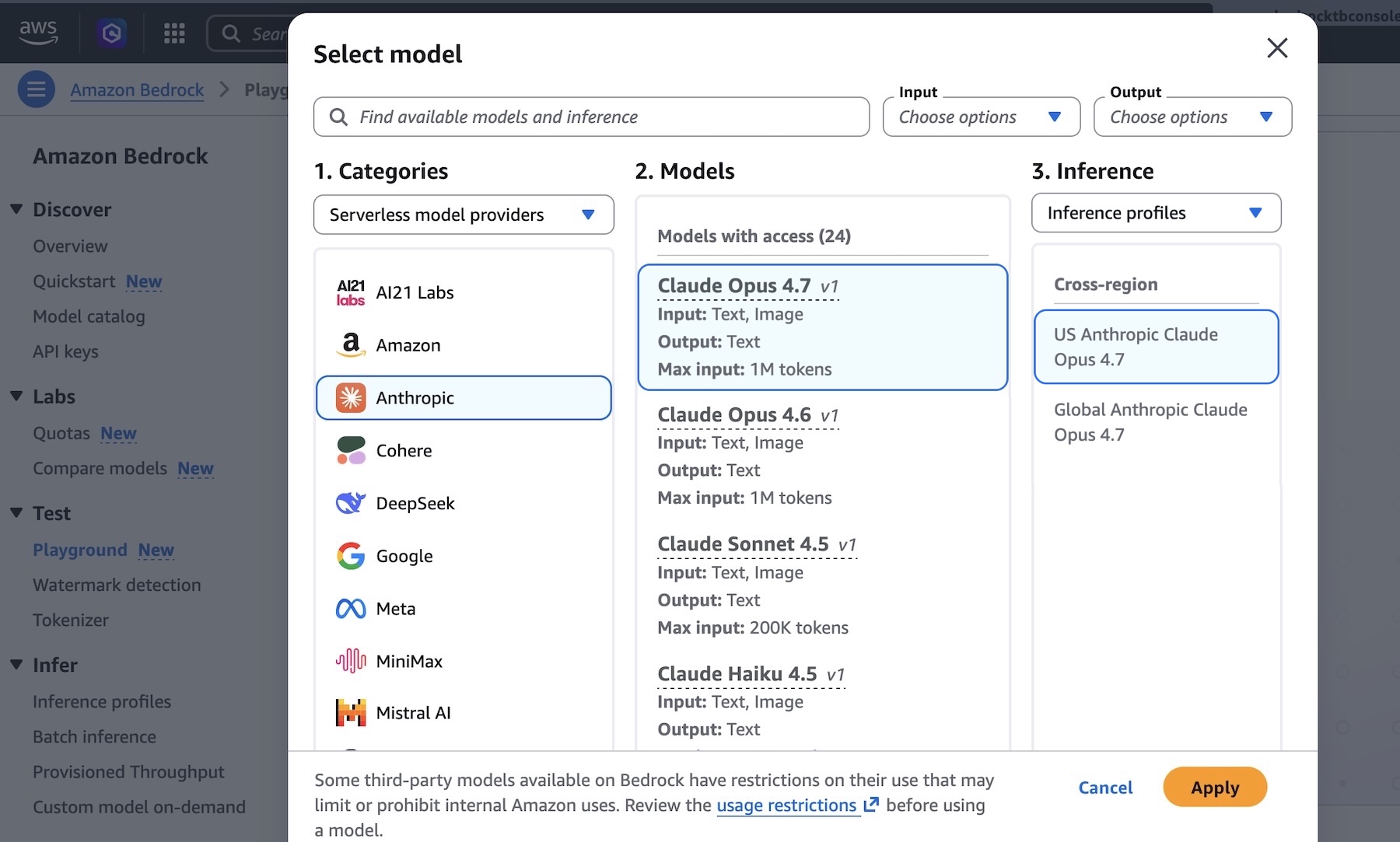

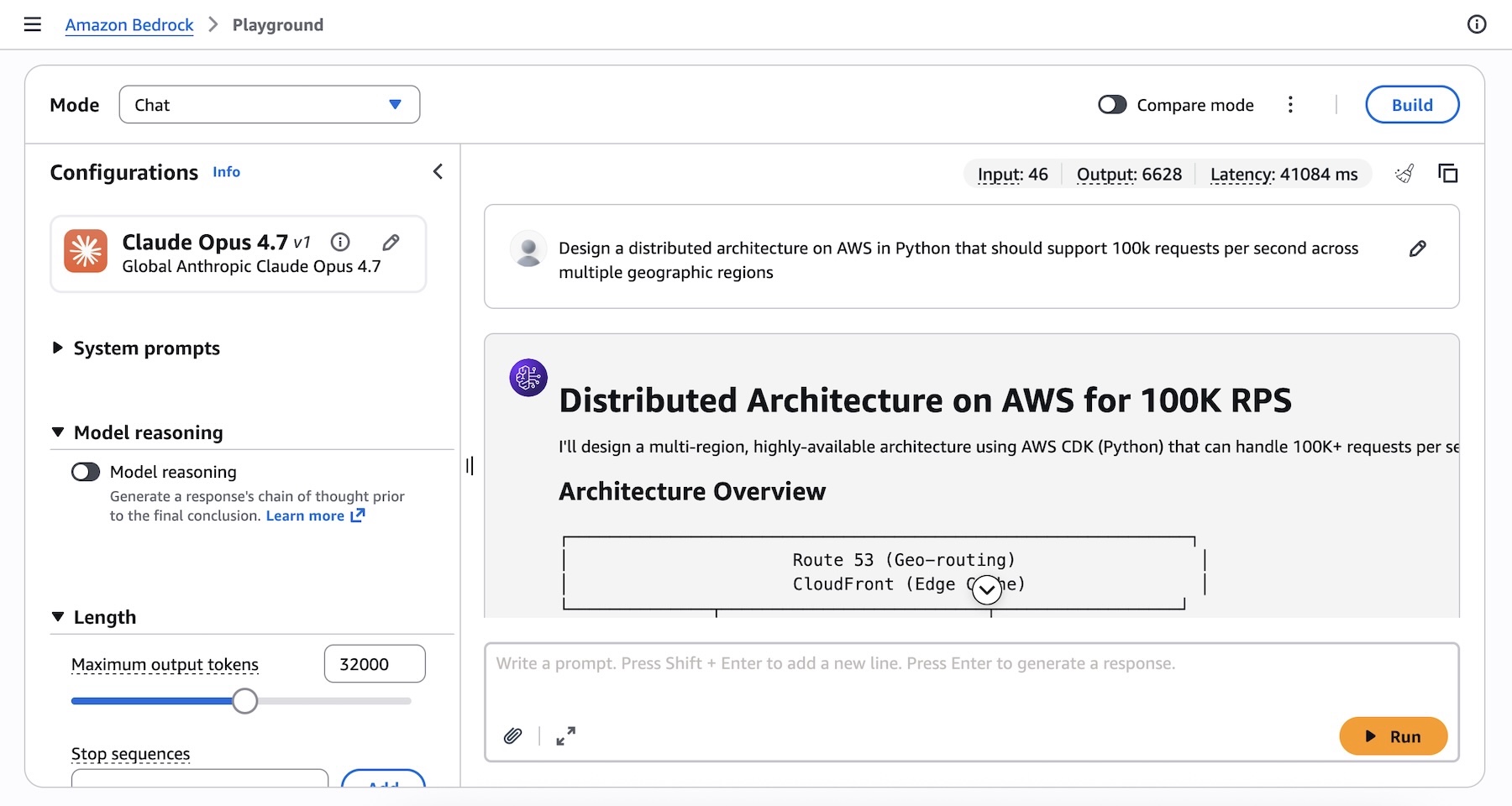

The integration of Claude Opus 4.7 into Amazon Bedrock is designed to provide a seamless experience for developers. Users can access the model directly through the Amazon Bedrock console. By navigating to the "Playground" section under the "Test" menu and selecting "Claude Opus 4.7," developers can immediately begin experimenting with the model’s capabilities. This console interface allows for direct prompt testing and iteration, facilitating rapid prototyping and exploration.

For programmatic access, developers can call Claude Opus 4.7 with the Anthropic Messages API through the Anthropic SDK, targeting either bedrock-runtime or the bedrock-mantle endpoints. Alternatively, they can keep using the Invoke and Converse APIs on bedrock-runtime through the AWS Command Line Interface (AWS CLI) and AWS SDKs. These options let developers work in whichever environment and workflow they already prefer.
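For the AWS SDK route, a minimal Python sketch of a Converse call with boto3 might look like the following. `converse` on a `bedrock-runtime` client is a real boto3 operation. The helper names, the 4096-token limit, and the choice to join all text blocks are illustrative assumptions. Request building and response parsing sit in plain functions so they can be checked without AWS credentials, and boto3 is imported only when a call is actually made.

```python
def build_converse_request(model_id: str, prompt: str, max_tokens: int) -> dict:
    """Keyword arguments for bedrock-runtime's Converse operation."""
    return {
        "modelId": model_id,
        # Converse uses a model-agnostic shape: content is a list of blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_converse_text(response: dict) -> str:
    """Concatenate the text blocks of a Converse response, skipping other block types."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def converse(prompt: str, region: str = "us-east-1") -> str:
    # Imported lazily so the helpers above work without boto3 installed.
    import boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    # 4096 is an arbitrary illustrative limit, not a recommended value.
    request = build_converse_request("us.anthropic.claude-opus-4-7", prompt, 4096)
    return extract_converse_text(client.converse(**request))
```

With credentials configured for an account that has access to the model, `converse("Summarize the trade-offs of active-active multi-region designs.")` returns the reply text.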

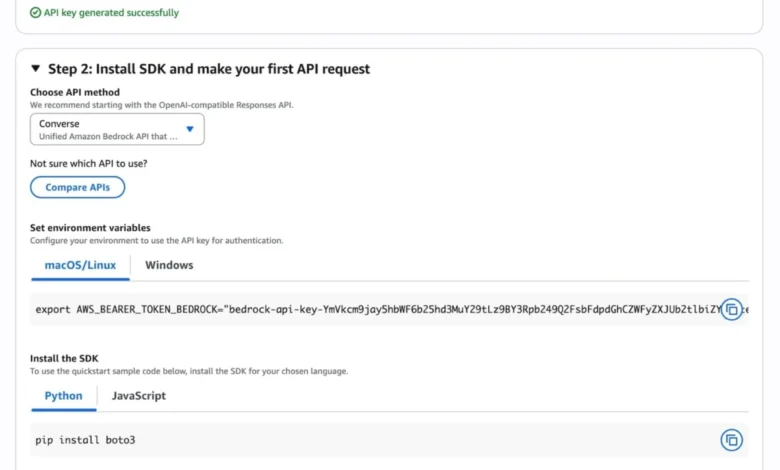

To facilitate rapid adoption, the Amazon Bedrock console offers a "Quickstart" guide. This feature helps users select their use case and generate short-term API keys for authentication, simplifying the initial steps of making an API call. Sample code snippets are also provided for various use cases and programming languages, further streamlining the integration process.
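As a rough sketch of how a short-term API key might be used outside the SDKs, the code below prepares, but does not send, a Converse REST request authenticated with an `Authorization: Bearer` header. It assumes the key is exported as `AWS_BEARER_TOKEN_BEDROCK`, the environment variable AWS documents for Bedrock API keys, and that the REST path is `/model/{modelId}/converse`. The helper name and the 1024-token limit are hypothetical; verify both the header and the path against the Bedrock documentation before use.

```python
import json
import os
import urllib.parse
import urllib.request


def build_bearer_converse_request(
    api_key: str, region: str, model_id: str, prompt: str
) -> urllib.request.Request:
    """Prepare (but do not send) a Converse REST call authenticated with a Bedrock API key."""
    url = (
        f"https://bedrock-runtime.{region}.amazonaws.com/model/"
        f"{urllib.parse.quote(model_id, safe='')}/converse"
    )
    body = {
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": 1024},  # illustrative limit
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


# The key is read from the environment rather than hard-coded in source.
req = build_bearer_converse_request(
    os.environ.get("AWS_BEARER_TOKEN_BEDROCK", "placeholder-key"),
    "us-east-1",
    "us.anthropic.claude-opus-4-7",
    "Hello",
)
# urllib.request.urlopen(req) would send it; omitted so the sketch runs offline.
```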

For developers using the Anthropic Claude Messages API, a Python code example illustrates the ease of use:

```python
from anthropic import AnthropicBedrockMantle

# Initialize the Bedrock Mantle client (uses SigV4 auth automatically)
mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

# Create a message using the Messages API
message = mantle_client.messages.create(
    model="us.anthropic.claude-opus-4-7",
    max_tokens=32000,
    messages=[
        {
            "role": "user",
            "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions",
        }
    ],
)

# Print the text of the first content block in the response
print(message.content[0].text)
```

Similarly, for those preferring the AWS CLI, an example command demonstrates how to invoke the model directly:

```bash
aws bedrock-runtime invoke-model \
  --model-id us.anthropic.claude-opus-4-7 \
  --region us-east-1 \
  --body '{"anthropic_version":"bedrock-2023-05-31", "messages": [{"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."}], "max_tokens": 32000}' \
  --cli-binary-format raw-in-base64-out \
  invoke-model-output.txt
```

The final positional argument names the file the CLI writes the response body to. These examples, together with updated code samples and CLI commands for the latest version, are available to help developers integrate Claude Opus 4.7 into their applications quickly and accurately.
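Because `invoke-model` writes the raw response body to a file rather than printing it, a small Python helper can pull out the reply text afterwards. This sketch assumes the body follows the Anthropic Messages response format, a JSON object whose `content` list holds typed blocks; `read_invoke_model_output` is a hypothetical name.

```python
import json
from pathlib import Path


def read_invoke_model_output(path: str) -> str:
    """Return the concatenated text blocks from an invoke-model output file.

    Assumes the file holds an Anthropic Messages-format response body, where
    "content" may mix "text" blocks with other block types.
    """
    body = json.loads(Path(path).read_text(encoding="utf-8"))
    return "".join(
        block["text"] for block in body.get("content", []) if block.get("type") == "text"
    )
```

After running the CLI command, `print(read_invoke_model_output("invoke-model-output.txt"))` shows only the model's answer text.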

Adaptive Thinking for Enhanced Reasoning

A key feature highlighted for Claude Opus 4.7 is its "Adaptive thinking" capability. This advanced functionality allows the model to dynamically allocate its thinking token budget based on the complexity of each individual request. This means that for simpler queries, the model can respond more efficiently, while for more intricate problems, it can dedicate more computational resources to achieve a higher level of reasoning and accuracy. This adaptive approach is crucial for optimizing performance and cost-effectiveness in real-world applications where workload complexity can vary significantly.
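The article does not show how adaptive thinking is requested. As a hedged sketch, assuming Opus 4.7 accepts the `"thinking": {"type": "adaptive"}` setting that Anthropic documents for adaptive thinking on recent Claude models, an `invoke-model` body could be built as follows. `build_adaptive_body` and `split_blocks` are hypothetical helpers, and the parameter should be confirmed against the model documentation. When thinking is used, the response may begin with a `thinking` block, so the second helper selects blocks by `type` instead of indexing `content[0]`.

```python
import json


def build_adaptive_body(prompt: str, max_tokens: int = 32000) -> str:
    """JSON body for invoke-model that requests adaptive thinking.

    ASSUMPTION: the {"type": "adaptive"} setting is taken from Anthropic's
    documentation for recent Opus models; confirm Opus 4.7 accepts it.
    """
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "thinking": {"type": "adaptive"},
        "messages": [{"role": "user", "content": prompt}],
    }
    return json.dumps(body)


def split_blocks(response: dict) -> tuple[list[str], str]:
    """Separate reasoning ("thinking") blocks from the final answer text."""
    blocks = response["content"]
    thinking = [b["thinking"] for b in blocks if b["type"] == "thinking"]
    answer = "".join(b["text"] for b in blocks if b["type"] == "text")
    return thinking, answer
```

The string from `build_adaptive_body` can be supplied as the `--body` value of `aws bedrock-runtime invoke-model`. Simple requests may return no thinking blocks at all, in which case the first list is empty.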

Availability and Regional Deployment

Anthropic’s Claude Opus 4.7 model is now available on Amazon Bedrock in several key AWS regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). AWS is continually expanding its regional presence, and users are encouraged to consult the official AWS documentation for the most up-to-date list of supported regions and potential future rollouts.
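SDK clients and the CLI expect Region codes rather than console names. The mapping below pairs the four Regions named above with their standard codes. `ANNOUNCED_REGIONS` and `pick_region` are hypothetical names, and the list is only a snapshot of this announcement, so the AWS documentation remains the authoritative source.

```python
# Console names of the Regions listed in the announcement, mapped to Region codes.
ANNOUNCED_REGIONS = {
    "US East (N. Virginia)": "us-east-1",
    "Asia Pacific (Tokyo)": "ap-northeast-1",
    "Europe (Ireland)": "eu-west-1",
    "Europe (Stockholm)": "eu-north-1",
}


def pick_region(preferred: list[str]) -> str:
    """Return the first preferred Region code that appears in the announced list."""
    available = set(ANNOUNCED_REGIONS.values())
    for code in preferred:
        if code in available:
            return code
    raise ValueError(f"none of {preferred} is in the announced Region list")
```

For example, a team based in Frankfurt could call `pick_region(["eu-central-1", "eu-west-1"])` and fall back to Ireland.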

Broader Implications and Future Outlook

The integration of Claude Opus 4.7 into Amazon Bedrock represents a significant milestone in the advancement and accessibility of cutting-edge AI. By offering such a powerful and versatile model through a managed service, AWS is lowering the barrier to entry for businesses looking to leverage generative AI for innovation and competitive advantage.

The enhanced capabilities in coding, long-running tasks, and complex reasoning are expected to drive new applications across various industries. This could include more sophisticated financial modeling tools, advanced medical research assistants, highly personalized educational platforms, and more intuitive creative content generation tools.

The emphasis on privacy and security, with the "zero operator access" policy, is particularly important for enterprise adoption, where data confidentiality is paramount. As AI continues to evolve, the ability to deploy these powerful models with strong privacy guarantees will be a critical factor in their widespread acceptance and integration into core business operations.

The continued collaboration between AWS and Anthropic, exemplified by this integration, suggests a future where leading AI research is rapidly translated into practical, scalable solutions accessible to a global developer community. This ongoing partnership is likely to yield further advancements, pushing the boundaries of what is possible with artificial intelligence and cloud computing.

Developers are encouraged to explore Claude Opus 4.7 within the Amazon Bedrock console and provide feedback through AWS re:Post for Amazon Bedrock or their usual AWS Support channels. This feedback loop is vital for the continuous improvement of these advanced AI services. The pricing details for Claude Opus 4.7 on Amazon Bedrock can be found on the official Amazon Bedrock pricing page, allowing businesses to plan and budget for their AI initiatives effectively.
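Budgeting usually starts from token counts. Messages API responses report them in `usage.input_tokens` and `usage.output_tokens`, so a rough per-request estimate is a weighted sum of the two. The sketch below keeps the per-million-token prices as parameters because the article defers actual rates to the Amazon Bedrock pricing page. `estimate_cost_usd` is a hypothetical helper, and the numbers in the example call are placeholders, not Opus 4.7 prices.

```python
def estimate_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
) -> float:
    """Estimate request cost from token counts and per-million-token prices."""
    return (
        input_tokens * input_price_per_mtok + output_tokens * output_price_per_mtok
    ) / 1_000_000


# Placeholder prices and token counts, for illustration only.
print(estimate_cost_usd(2_000, 8_000, input_price_per_mtok=1.0, output_price_per_mtok=2.0))
```

Summing this over logged `usage` values gives a simple spend projection that can be updated whenever published prices change.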